Blind Spot Audit

Spot fraud in your approved customers.

Runs On Your Cloud

No Data Sharing

No Contract Required

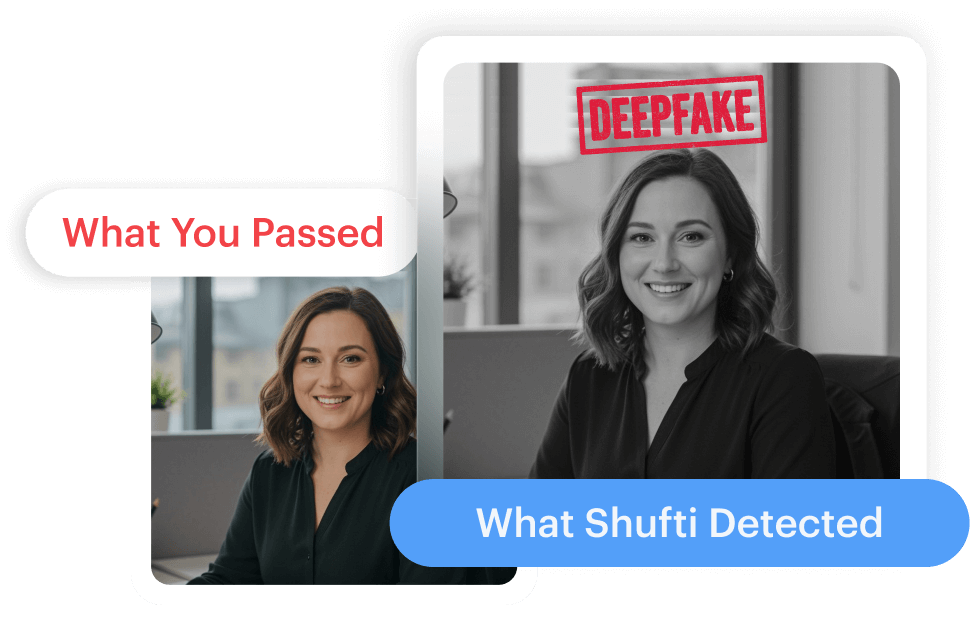

Deepfake Detector

Check where deepfake IDs slipped through your stack.

Runs On Your Cloud

No Data Sharing

No Contract Required

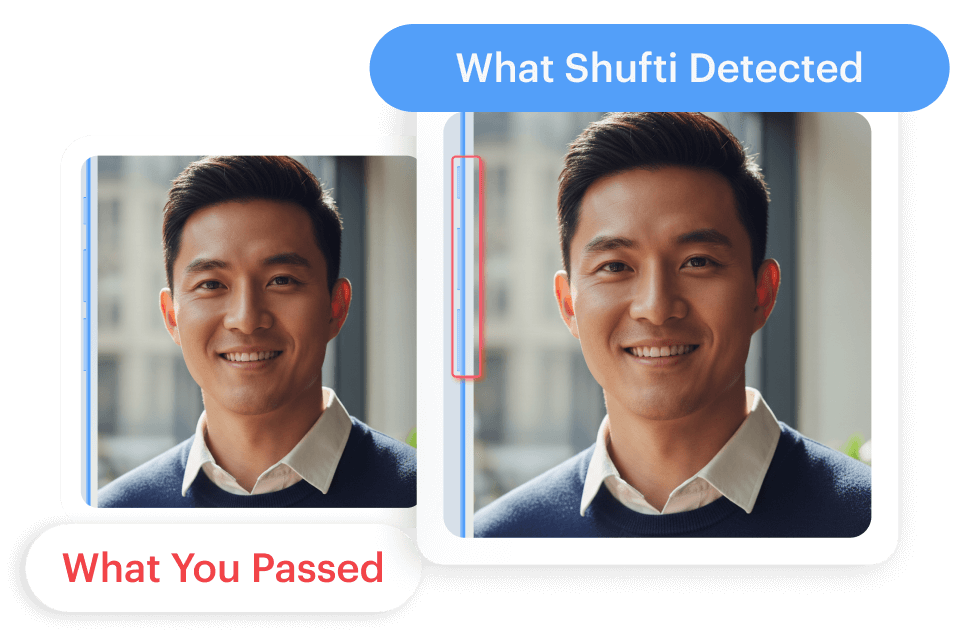

Liveness Detection

Find the replay gaps in your passed liveness checks.

Runs On Your Cloud

No Data Sharing

No Contract Required

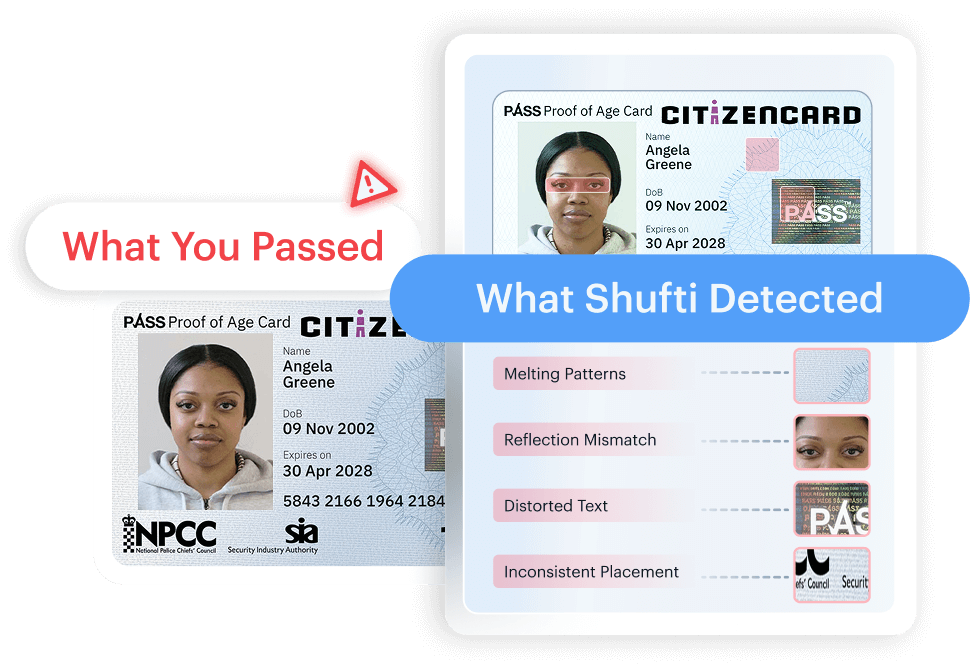

Document Deepfake Detection

Spot synthetic documents hiding in verified users.

Runs On Your Cloud

No Data Sharing

No Contract Required

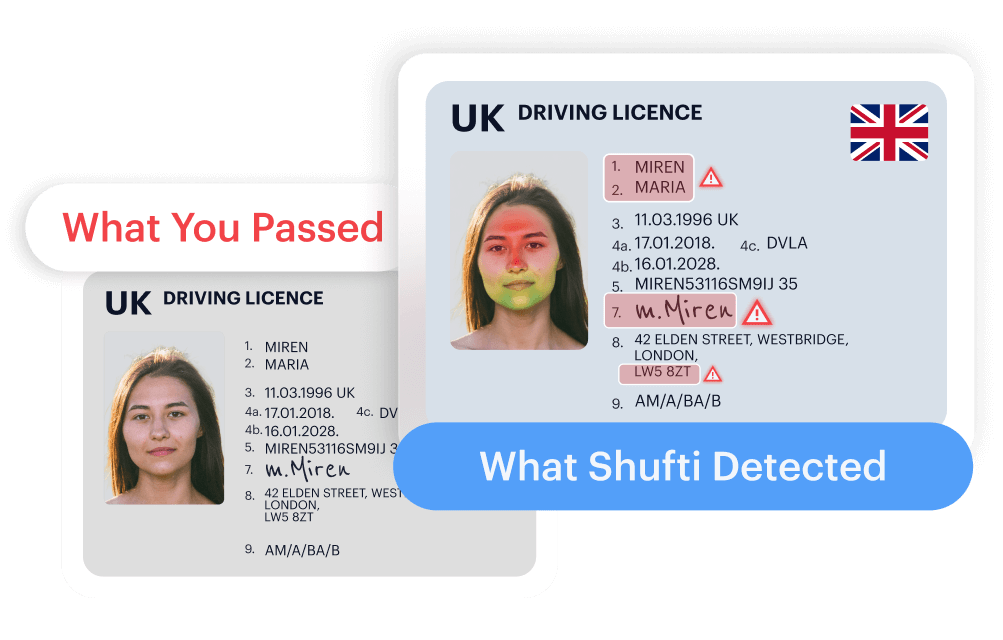

Document Originality Detection

Stop fake documents before they pass.

Runs On Your Cloud

No Data Sharing

No Contract Required

Introducing Blind Spot Audit. Spot AI-generated forgeries with advanced document analysis.

Run Now on AWS

Introducing Deepfake Detector. Detect deepfakes with precision your stack has missed.

Run Now on AWS

Introducing Liveness Detection. Detect spoofs with technology built for sophisticated fraud.

Run Now on AWS

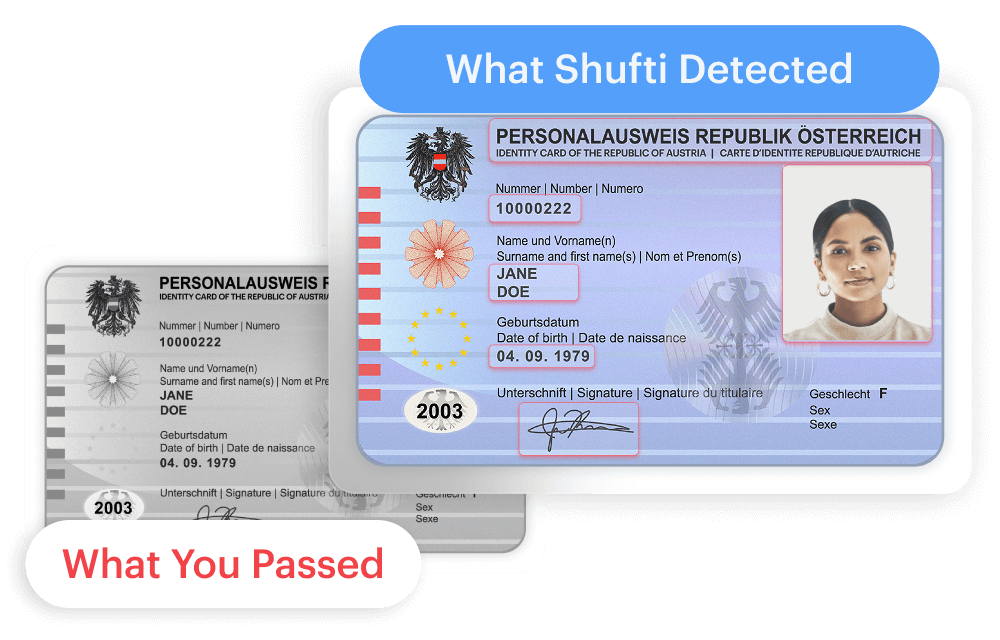

Introducing Document Deepfake Detection. Spot AI-generated forgeries with advanced document analysis.

Run Now on AWS

Introducing Document Originality Detection. Verify document authenticity before your next audit.

Run Now on AWS

Explore our ROI Calculator

Secure your business, achieve compliance, and accelerate growth with our digital identity verification solution.

ROI Calculator

Get the Shufti Newsletter

Stay ahead of the curve with fresh takes on the latest identity innovations.

Take the next steps to better security.

Contact us

Get in touch with our experts. We'll help you find the perfect solution for your compliance and security needs.

Contact us

Explore Now