Australia Investigates Tech Giants Over Weak Age Verification

Australia’s eSafety Commissioner has opened formal investigations into Facebook, Instagram, Snapchat, TikTok, and YouTube for potential violations of the country’s under-16 social media ban. Communications Minister Anika Wells confirmed in late March 2026 that the government is moving from compliance monitoring to enforcement, with decisions on legal action expected by mid-2026.

Five Platforms Under Investigation After Compliance Report

The Online Safety Amendment (Social Media Minimum Age) Act 2024 took effect on December 10, 2025, requiring age-restricted social media platforms to take “reasonable steps” to prevent Australians under 16 from creating or maintaining accounts. Platforms that fail to comply face civil penalties of up to AUD $49.5 million per breach.

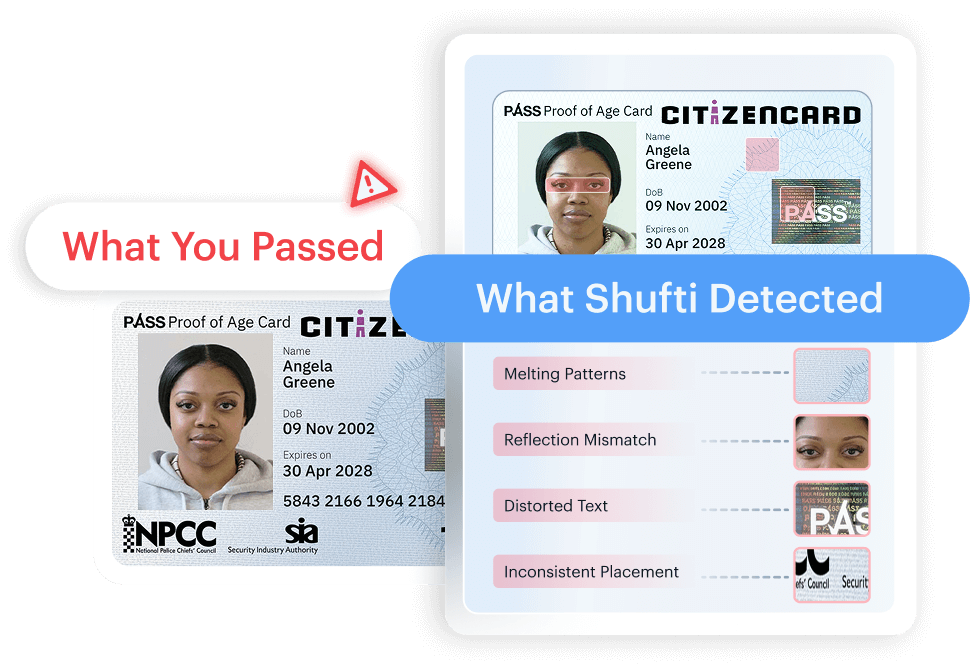

The eSafety Commissioner’s first compliance report, released in late March 2026, found that all five platforms under investigation had gaps in their age-assurance systems. According to the report, platforms were allowing users who had declared themselves under 16 to undergo repeated age-assurance checks until they secured a favourable result. The commissioner characterized this practice of giving users “limitless chances” to pass facial estimation scans as a failure of the law’s intent.

Most Underage Users Still Have Active Accounts

A government-commissioned survey of 898 parents, conducted in late January 2026, found that account ownership among 8- to 15-year-olds dropped from 49.7% to 31.3% after the ban. Even so, roughly 7 in 10 children who had accounts before December 10 still held them on Facebook, Instagram, Snapchat, and TikTok, and about half retained accounts on YouTube. Platforms have collectively removed more than five million underage accounts since the law took effect, but the survey data suggests these removals have not kept pace with the scale of underage usage.

What Went Wrong with Age Assurance Implementation

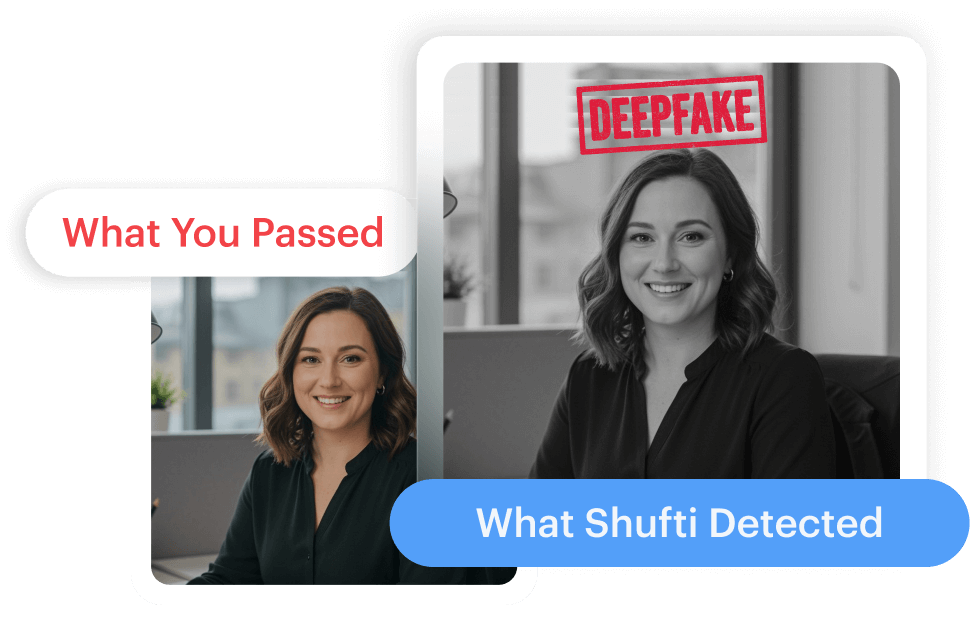

The eSafety Commissioner’s findings point to a structural problem with how platforms have implemented age checks. Rather than deploying verification systems capable of reliably blocking minors at account creation, platforms have relied on low-confidence methods like facial age estimation with no limit on retry attempts. When a user declared their age as under 16, some platforms responded by prompting them to “correct” their age through these same low-confidence checks, effectively coaching minors through the bypass.

This approach treats age assurance as a checkbox rather than a functional barrier. The law requires “reasonable steps,” but a system that allows unlimited retries on a probabilistic check does not meet that standard in practice.
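To see why unlimited retries undermine a probabilistic check, consider a rough back-of-the-envelope model. The sketch below assumes each attempt is independent and wrongly passes an underage user with a fixed probability; both the independence assumption and the 10% rate are illustrative and do not come from the eSafety report or any platform's published figures.

```python
def pass_probability(false_pass_rate: float, attempts: int) -> float:
    """Chance that at least one of `attempts` independent checks wrongly passes.

    `false_pass_rate` is a hypothetical per-attempt error rate, not a measured value.
    """
    if not 0.0 <= false_pass_rate <= 1.0:
        raise ValueError("false_pass_rate must be between 0 and 1")
    if attempts < 0:
        raise ValueError("attempts must be non-negative")
    # P(at least one pass) = 1 - P(every attempt is correctly rejected)
    return 1.0 - (1.0 - false_pass_rate) ** attempts


if __name__ == "__main__":
    HYPOTHETICAL_RATE = 0.10  # assumed for illustration only
    for attempts in (1, 3, 10, 30):
        chance = pass_probability(HYPOTHETICAL_RATE, attempts)
        print(f"{attempts:>2} attempts: {chance:.0%} chance of slipping through")
```

Under these assumptions, a check that is wrong one time in ten on a single attempt lets a persistent user through about 65% of the time after ten tries and about 96% of the time after thirty, which is why a retry cap matters as much as the accuracy of the check itself.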

Building Verification That Withstands Scrutiny

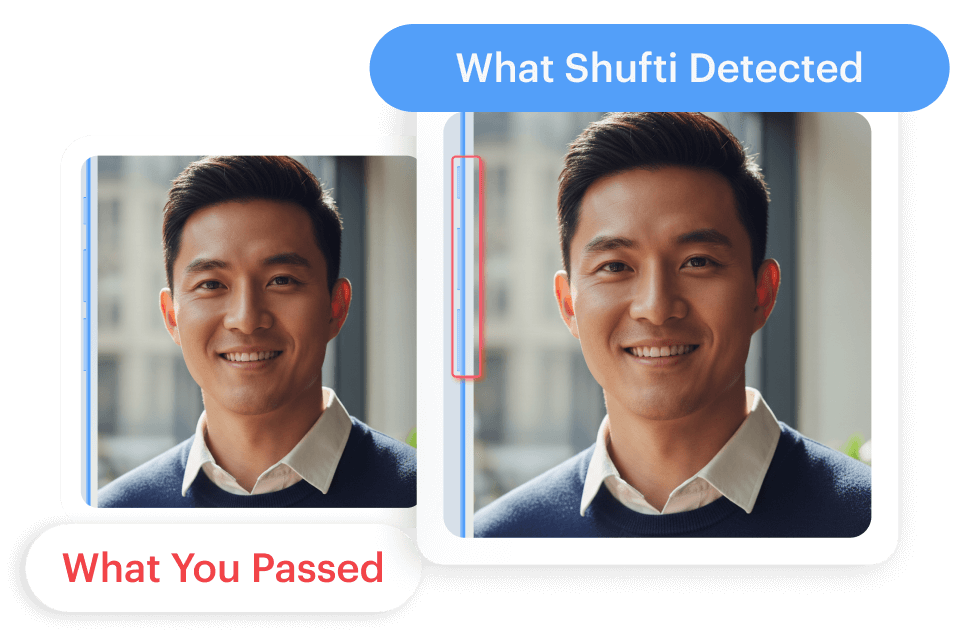

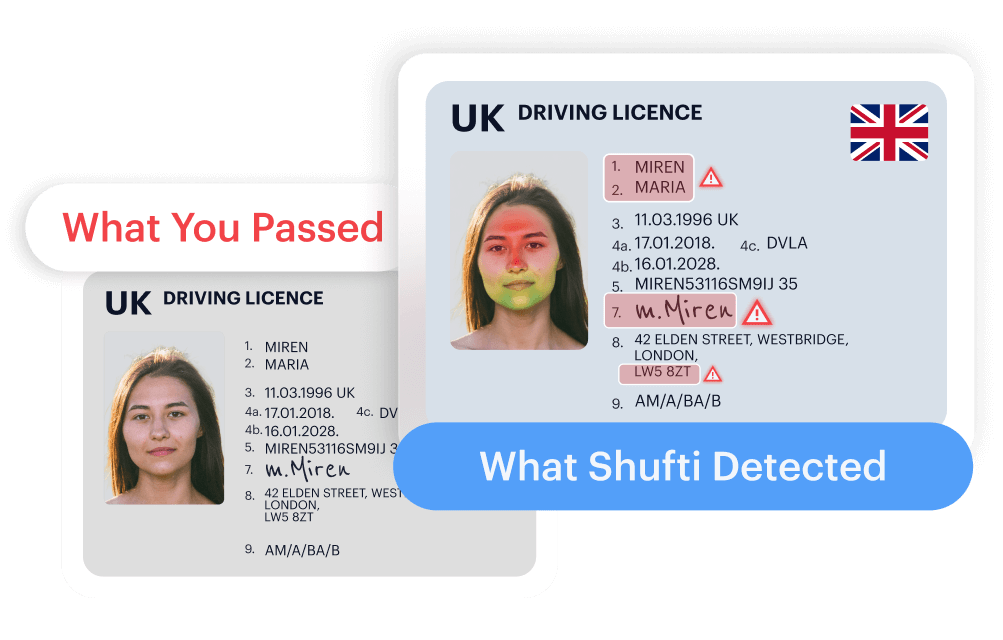

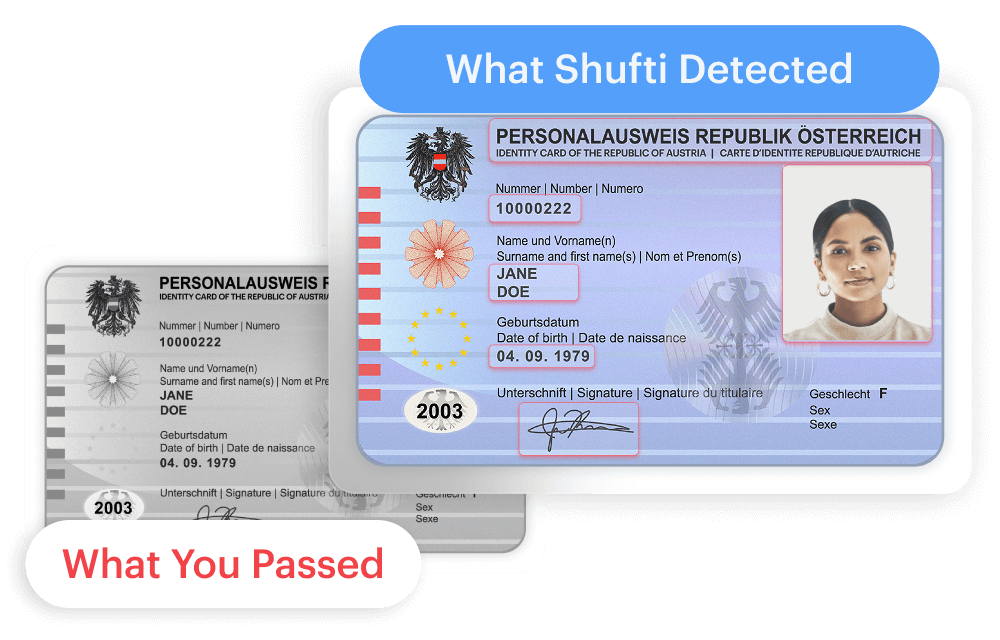

Platforms operating under Australia’s social media age restrictions need verification infrastructure that goes beyond single-layer estimation. Effective age assurance combines document verification, biometric analysis, and liveness detection to produce high-confidence results on the first attempt, without relying on repeated checks that minors can game.
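One way to picture a layered approach is as a decision policy that caps attempts and escalates borderline estimates to a document check instead of offering another scan. The following is a minimal sketch only: the `AgeSignals` fields, the `decide` function, the three-year estimation buffer, and the two-attempt cap are all hypothetical choices made for illustration, not requirements from the Act, eSafety guidance, or any vendor's API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

MIN_AGE = 16           # minimum age set by the Act
ESTIMATE_BUFFER = 3.0  # hypothetical safety margin for facial estimates
MAX_ATTEMPTS = 2       # hypothetical cap on verification attempts


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    REQUIRE_DOCUMENT = "require_document"


@dataclass
class AgeSignals:
    declared_age: int
    liveness_passed: bool
    estimated_age: Optional[float] = None  # facial age estimate, if run
    document_age: Optional[int] = None     # age from a verified document, if any
    attempts_used: int = 1


def decide(signals: AgeSignals) -> Decision:
    # A self-declared under-16 user is never prompted to "correct" their age.
    if signals.declared_age < MIN_AGE:
        return Decision.DENY
    # No unlimited retries: attempts beyond the cap are refused.
    if signals.attempts_used > MAX_ATTEMPTS:
        return Decision.DENY
    # Without liveness, neither the estimate nor the document match is trusted.
    if not signals.liveness_passed:
        return Decision.DENY
    # A verified document outranks a probabilistic estimate.
    if signals.document_age is not None:
        return Decision.ALLOW if signals.document_age >= MIN_AGE else Decision.DENY
    # An estimate is accepted alone only when it clears the age by a margin.
    if (signals.estimated_age is not None
            and signals.estimated_age >= MIN_AGE + ESTIMATE_BUFFER):
        return Decision.ALLOW
    # Borderline or missing estimates escalate to a document check.
    return Decision.REQUIRE_DOCUMENT
```

For example, `decide(AgeSignals(declared_age=18, liveness_passed=True, estimated_age=17.0))` returns `Decision.REQUIRE_DOCUMENT`: the borderline estimate triggers a stronger check, not another attempt at the same weak one.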

Shufti’s age verification solution pairs document-based identity checks with facial biometric age estimation across 230+ countries, delivering results in under 15 seconds. The platform holds KJM certification in Germany, one of the strictest age verification accreditations in Europe, and supports the layered verification approach that Australia’s eSafety Commissioner has indicated platforms should adopt. Companies navigating these requirements can request a demo to evaluate the platform against their compliance needs.
