Synthetic Identity Fraud Could Reach $58.3B: Are Deepfakes Driving the Industry's Most Expensive Blind Spot?

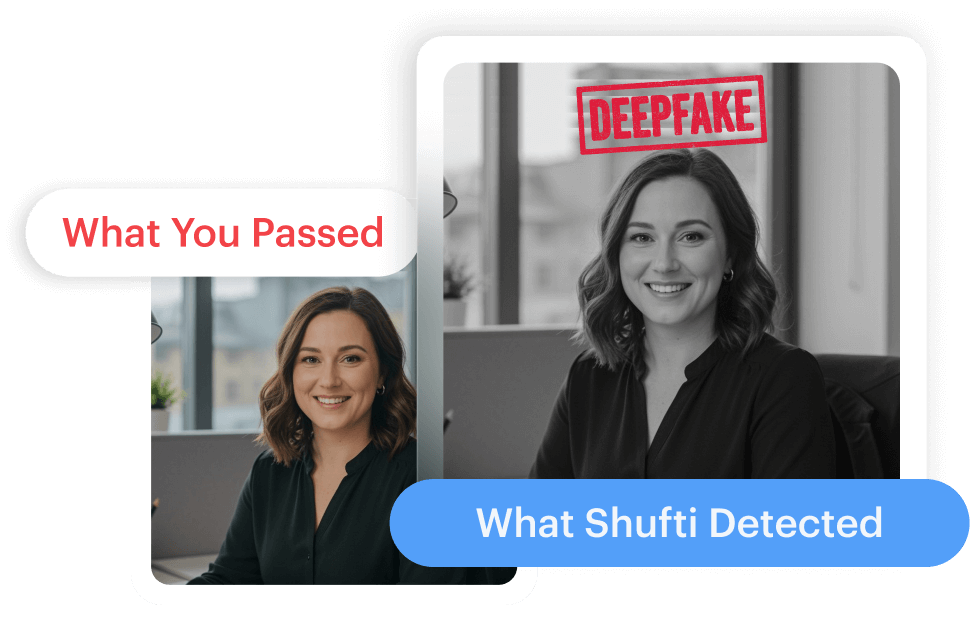

London, United Kingdom, March 17, 2026 – Your deepfake detection solution may be giving you confidence for the wrong reasons.

Across many digital onboarding and KYC software deployments, deepfake detection is often treated as validated on the strength of laboratory performance: controlled datasets, predictable inputs, and clean capture environments. Real-world identity verification rarely looks like that.

According to Juniper Research, the cost of synthetic identity fraud to financial institutions is projected to rise 153% over the next five years, from an estimated $23 billion in 2025 to $58.3 billion by 2030. The acceleration is not only about volume. Generative AI has made synthetic media realistic enough to survive the compression, re-encoding, and bandwidth limits that define real-world KYC capture. Attackers now optimize manipulated media specifically to pass through live verification conditions, not just to fool the human eye.
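As a quick check on the Juniper Research figures, the Python snippet below recomputes the total increase (about 153.5%, which the projection rounds to 153%) and the implied compound annual growth rate, roughly 20% a year. Only the two dollar figures come from the projection; the function names are illustrative.

```python
def percent_increase(start: float, end: float) -> float:
    """Total percentage increase from start to end."""
    return (end - start) / start * 100


def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate, as a percentage."""
    return ((end / start) ** (1 / years) - 1) * 100


# Juniper Research projection quoted above, in billions of US dollars.
cost_2025 = 23.0
cost_2030 = 58.3

print(f"Total increase: {percent_increase(cost_2025, cost_2030):.1f}%")
print(f"Implied annual growth: {cagr(cost_2025, cost_2030, 5):.1f}%")
```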

The Lab-to-Production Gap Is Widening

Most deepfake detection models are developed and benchmarked on controlled datasets with predictable inputs, clean lighting, and consistent device quality, conditions that rarely hold in live identity verification.

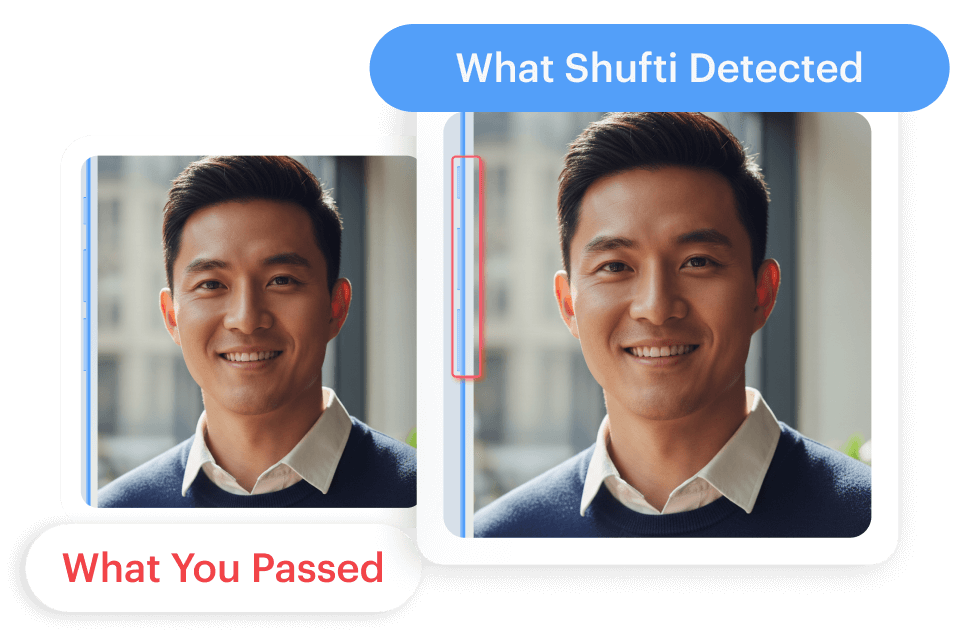

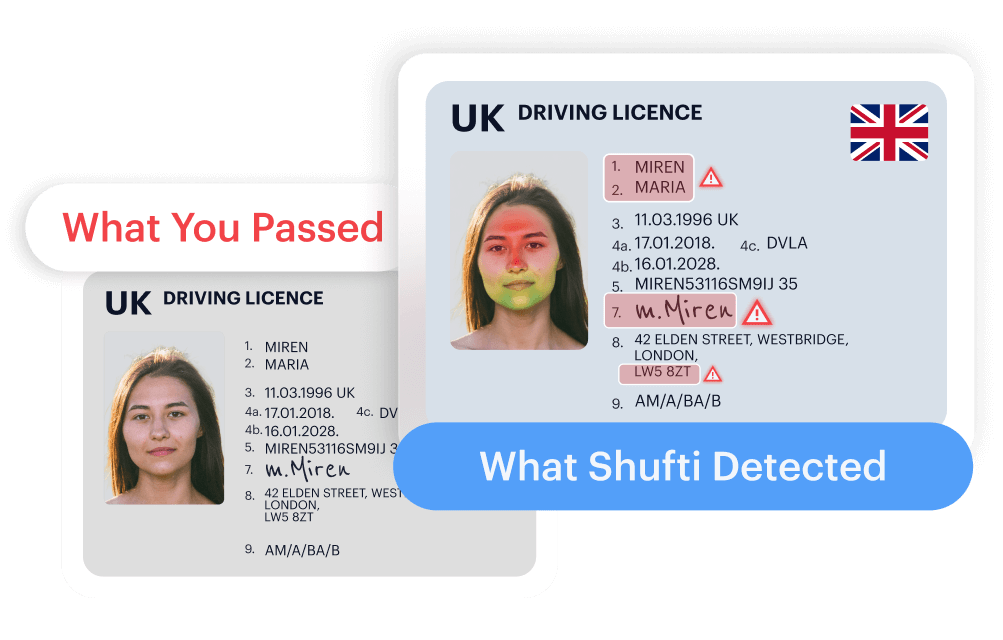

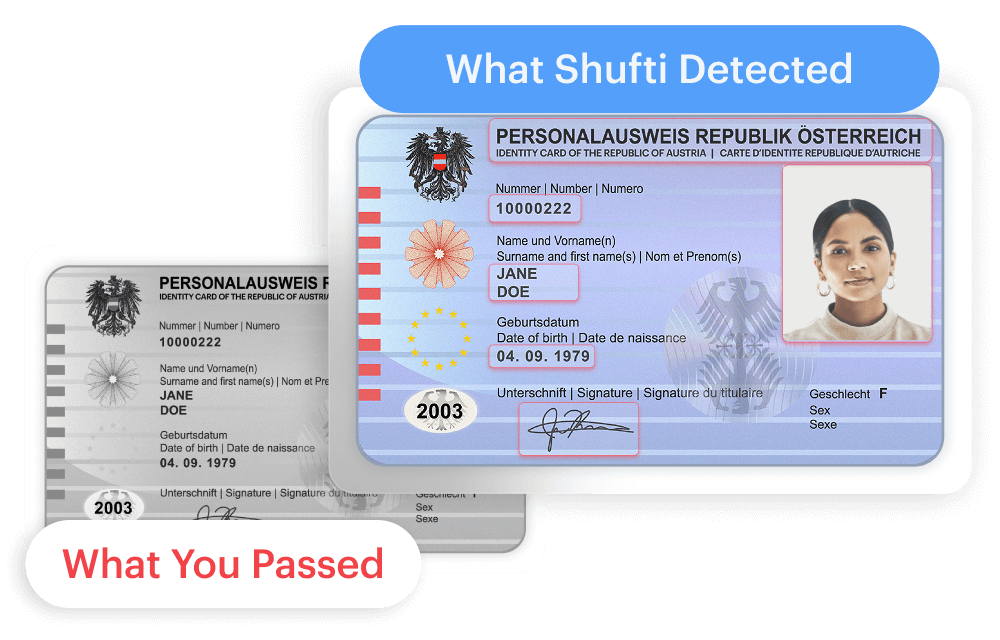

In production, verification media is compressed, screenshots are re-uploaded, lighting shifts, and device cameras range from current flagships to five-year-old handsets. Fine textures and natural sensor noise, the very signals many detection models depend on, are stripped away before the model ever sees the input.

The result is a growing disconnect between headline accuracy scores and actual deployment performance. Vendor claims vary widely depending on datasets, generator types, capture conditions, and undisclosed false acceptance rate (FAR) and false rejection rate (FRR) settings. A model that scores well in evaluation may behave very differently when confronted with unfamiliar demographics, degraded media, or injection-based attacks.

For banks, PSPs, BNPL providers, and regulated platforms, the downstream cost of that gap is tangible: manual review queues grow, false negatives rise, verification costs climb, and regulatory exposure increases.

Why It Matters for Payments and Fintech Leaders

Deepfake risk is no longer a niche concern for identity teams. It directly touches onboarding conversion, account recovery, payment authorization, chargeback rates, and dispute operations.

When verification thresholds are tightened to compensate for unreliable detection, legitimate customers face friction, abandonment increases, and growth slows. When thresholds are loosened, sophisticated attacks pass through degraded media channels undetected.

This is the “trust tax” that deepfakes impose on every digital transaction, and most organizations have not yet accounted for it in their operating models.
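The threshold dilemma above can be made concrete with the two error rates vendors tune. The Python sketch below is illustrative only: the scores are made up rather than measured from any real system, and `Attempt` and `error_rates` are hypothetical names. Raising the acceptance threshold lowers the false acceptance rate (attacks let through) at the cost of a higher false rejection rate (genuine customers turned away).

```python
from dataclasses import dataclass


@dataclass
class Attempt:
    score: float      # hypothetical detector output: confidence the media is genuine (0.0 to 1.0)
    is_genuine: bool  # ground-truth label for the attempt


def error_rates(attempts: list[Attempt], threshold: float) -> tuple[float, float]:
    """Return (FAR, FRR) when attempts scoring at or above `threshold` are accepted."""
    attacks = [a for a in attempts if not a.is_genuine]
    genuine = [a for a in attempts if a.is_genuine]
    # FAR: share of attacks that were wrongly accepted.
    far = sum(a.score >= threshold for a in attacks) / len(attacks)
    # FRR: share of genuine customers that were wrongly rejected.
    frr = sum(a.score < threshold for a in genuine) / len(genuine)
    return far, frr


# Made-up scores for illustration only; degraded attacks overlap with genuine users.
sample = (
    [Attempt(s, True) for s in (0.95, 0.90, 0.85, 0.70, 0.60)]
    + [Attempt(s, False) for s in (0.20, 0.40, 0.55, 0.65, 0.80)]
)

for threshold in (0.5, 0.7, 0.9):
    far, frr = error_rates(sample, threshold)
    print(f"threshold={threshold:.1f}  FAR={far:.0%}  FRR={frr:.0%}")
```

On this toy data, moving the threshold from 0.5 to 0.9 drops FAR from 60% to 0% while FRR climbs from 0% to 60%: the friction-versus-leakage trade-off in miniature.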

What Needs to Change: A Practical Framework

Addressing deepfake risk requires a structural shift, not just a tool upgrade. Based on the patterns emerging across regulated industries, five priorities stand out.

First, detection must be validated against production conditions, not benchmarks alone. Models should be stress-tested against compressed, re-encoded, and device-variable media before being considered deployment-ready.

Second, no single forensic signal should be treated as decisive. Compression strips fine textures, adds block noise and aliasing, and pushes single-cue models past the point where they remain reliable. Layered, multi-signal evaluation, in which independent authenticity checks must align before risk is flagged, is materially more resilient.

Third, liveness verification needs to evolve beyond basic checks. Passive and active liveness mechanisms should be robust enough to handle both live capture and uploaded verification flows across image and video journeys, without creating unnecessary friction for legitimate users.

Fourth, organizations need continuous model adaptation. Deepfake generation techniques change rapidly, often week to week. Detection systems that are trained once and deployed statically will drift out of effectiveness faster than most teams realize.

Fifth, the industry needs shared standards for evaluating deepfake detection under real-world KYC conditions. Today, there is no unified framework, which means institutions are comparing vendor claims that were measured under incompatible conditions.
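The first of these priorities, validating detection against production conditions, amounts to measuring detection rates on the same fakes under progressively harsher degradation. The Python sketch below is a deliberately toy version under stated assumptions: "media" is a 1-D list of numbers, a synthetic sample is modelled as a high-frequency artifact, a 3-tap moving average stands in for lossy compression, and the names `make_fake`, `toy_detector`, `blur`, and `stress_test`, along with the 0.05 threshold, are all hypothetical. A real harness would re-encode actual images and video with production codecs and devices.

```python
from typing import Callable

Signal = list[float]  # toy stand-in for an image or video frame


def make_fake(amplitude: float, length: int = 32) -> Signal:
    """Hypothetical 'synthetic' sample: flat mid-grey plus an alternating high-frequency artifact."""
    return [0.5 + (amplitude if i % 2 == 0 else -amplitude) for i in range(length)]


def toy_detector(signal: Signal) -> bool:
    """Single-cue detector: flags media whose average neighbour-to-neighbour change exceeds 0.05."""
    diffs = [abs(b - a) for a, b in zip(signal, signal[1:])]
    return sum(diffs) / len(diffs) > 0.05


def blur(signal: Signal) -> Signal:
    """3-tap moving average, a crude assumption standing in for lossy compression."""
    out = []
    for i in range(len(signal)):
        window = signal[max(0, i - 1): i + 2]
        out.append(sum(window) / len(window))
    return out


def stress_test(
    detector: Callable[[Signal], bool],
    fakes: list[Signal],
    conditions: dict[str, Callable[[Signal], Signal]],
) -> dict[str, float]:
    """Detection rate on the same fakes under each degradation condition."""
    return {
        name: sum(detector(degrade(f)) for f in fakes) / len(fakes)
        for name, degrade in conditions.items()
    }


conditions = {
    "clean": lambda s: s,
    "compressed": blur,
    "re-encoded": lambda s: blur(blur(s)),
}
fakes = [make_fake(a) for a in (0.05, 0.1, 0.2)]
results = stress_test(toy_detector, fakes, conditions)
for name, rate in results.items():
    print(f"{name:>11}: {rate:.0%} of fakes detected")
```

Here the detector catches every fake on clean input, two of three after one blur pass, and none after two, mirroring how a single texture-style cue can collapse once compression strips fine detail.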

Within this broader shift, Shufti, a global identity verification provider, has developed a deepfake detection architecture designed specifically for real-world KYC verification environments. The system takes a scenario-based, production-first, threat-informed approach to fraud prevention.

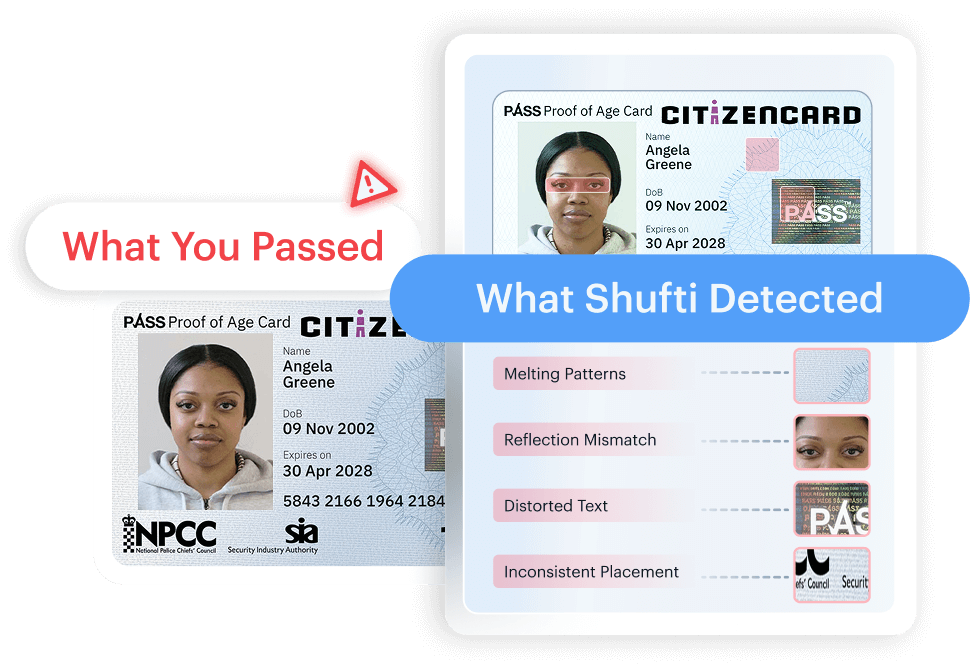

Its in-house technology supports continuous monitoring of emerging fraud techniques and iterative model updates. The system applies a multi-layer forensic evaluation model in which several independent authenticity hypotheses are analyzed before deepfake risk is flagged.

Shufti’s whitepaper outlines how these principles are put into practice through what it calls the Seven Gates Framework: a structured forensic evaluation model in which seven independent authenticity hypotheses must align before deepfake risk is flagged. The hypotheses span biometric structure, AI signature detection, compression history, frequency analysis, texture realism, robustness under degradation, and pixel-level coherence.

The approach is designed to sustain detection performance under media degradation and emerging attack methods rather than relying on any single cue that may weaken under real-world conditions.
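The general principle behind layered evaluation, flagging risk only when several independent checks agree rather than trusting one cue, can be sketched as a simple quorum rule. This Python sketch is not Shufti's Seven Gates implementation, whose internals are not described here; the three checks, their thresholds, the pre-computed feature scores, and the names `Check` and `layered_verdict` are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical pre-computed forensic scores: 0.0 looks authentic, 1.0 looks manipulated.
Features = dict[str, float]


@dataclass
class Check:
    name: str
    score: Callable[[Features], float]
    threshold: float


def layered_verdict(features: Features, checks: list[Check], quorum: int) -> tuple[bool, list[str]]:
    """Flag deepfake risk only if at least `quorum` independent checks fire."""
    fired = [c.name for c in checks if c.score(features) >= c.threshold]
    return len(fired) >= quorum, fired


checks = [
    Check("texture_realism", lambda f: f["texture"], 0.7),
    Check("frequency_analysis", lambda f: f["frequency"], 0.6),
    Check("compression_history", lambda f: f["compression"], 0.6),
]

# Compression has weakened the texture cue, but two other checks still agree.
degraded_fake = {"texture": 0.3, "frequency": 0.8, "compression": 0.75}
# A single noisy cue fires on a genuine user; without agreement, nothing is flagged.
noisy_genuine = {"texture": 0.9, "frequency": 0.2, "compression": 0.1}

print(layered_verdict(degraded_fake, checks, quorum=2))
print(layered_verdict(noisy_genuine, checks, quorum=2))
```

Setting `quorum` to the number of checks makes the rule unanimous. A higher quorum suppresses false alarms from any one noisy signal but can miss attacks that only a few checks catch, which is the false acceptance versus false rejection trade-off in another form.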

Shufti’s platform supports both live capture and uploaded verification. Its passive and active liveness detection is certified at iBeta Level 2 with a 0% false acceptance rate, and single-frame deepfake analysis completes in under one second.

Questions Worth Answering Before the Next Board Review

As deepfake-enabled fraud accelerates, leaders at payments companies, fintechs, and PSPs must take clear positions on decisions that directly affect growth, risk, and customer experience.

How much onboarding friction can the business absorb before conversion drops, and how does that compare with the true cost of fraud leakage?

Are deepfake detection vendors being evaluated on real-world performance, or on controlled benchmarks that fail under compression, re-encoding, and device variability?

How resilient is the current detection stack to degraded media and evolving attack methods, and how quickly can it adapt?

Can institutions continue to defend against deepfake threats in isolation, or is shared threat intelligence necessary despite privacy and liability trade-offs?

These are not theoretical questions. They are operational ones, and the answers will shape how fintechs manage trust for the next decade.

Read Shufti’s full technical whitepaper on production-calibrated deepfake detection to explore these questions or to assess performance against your current verification setup. Visit Shufti or request a demo to learn more about the approach.

Media Contact:

Neliswa Mncube

[email protected]
