How to Address the Low-Quality Phone Image Challenge in Identity Verification

- 01 Core Problem: KYC Obligations are Expanding at a Faster Rate than the Infrastructure that Implements them

- 02 How Low-Quality Images Impact Business and Revenue?

- 03 How to Address Low-Quality Input Challenges in Identity Verification?

- 04 Closing the Low-Quality Input Gap for Engineering, Compliance, and Risk

- 05 Shufti: Redefining Identity Verification for Imperfect Inputs

Smartphones have become the preferred medium for shopping, banking, socializing, and entertainment, driven by the growing demand for speed and accessibility. As a result, organizations are increasingly shifting toward remote service delivery, with the financial sector leading this transformation. This evolution has accelerated the adoption of remote and mobile identity verification, replacing traditional physical checks to meet modern expectations for scalability and convenience.

However, this shift exposes a considerable gap in emerging markets. Despite high smartphone adoption, a significant number of users still rely on low-end phones with limited imaging capabilities.

Analysis from the 2025 DHS Remote Identity Verification Rally demonstrated that low-resolution devices introduce sensor noise, JPEG compression artifacts, and color inconsistencies. These issues can elevate extraction errors by as much as 18%.

The problem is compounded on low-bandwidth networks, where heavy compression strips out critical fine visual detail during upload.

Conventional digital identity verification systems were designed for controlled settings with high-quality inputs. In practice, they must handle blurred, distorted, and inconsistent data at scale.

This creates a gap between the input IDV systems expect and the input they actually receive under real-world conditions. Importantly, image degradation is not random; it follows predictable patterns shaped by device hardware and bandwidth limitations.

This implies that the biases introduced into AI models are systematic and, more importantly, can be remedied. Technical factors such as noise distribution, compression artifacts, and device-specific variations distort feature extraction and degrade model performance, but they can be mitigated through better data preprocessing and model optimization.

Left unaddressed, these factors lead to observable bias in verification results, especially across regions and demographics.

Core Problem: KYC Obligations are Expanding at a Faster Rate than the Infrastructure that Implements them

KYC and identity verification requirements are tightening in most countries, creating a compliance challenge. Regulators demand high confidence in identity checks and trusted biometric authentication, yet the quality of input data varies greatly between regions.

Businesses face heightened compliance risk as authorities increasingly scrutinize verification results. Under these conditions, accuracy is a critical requirement for staying compliant.

How Low-Quality Images Impact Business and Revenue?

Low-quality images increase manual reviews, drive up customer acquisition costs, and raise onboarding abandonment rates. All three directly affect revenue growth and market expansion.

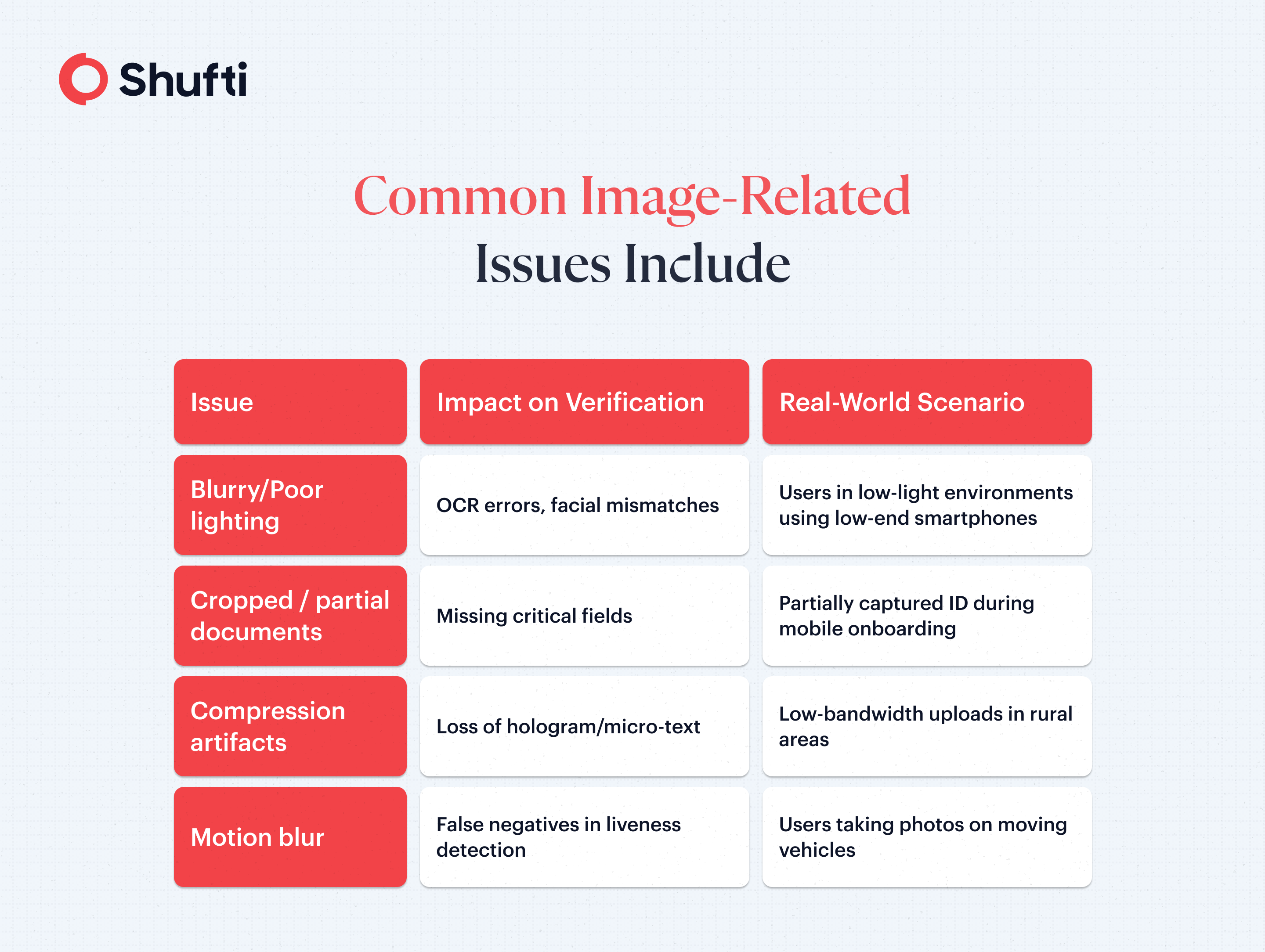

In mobile and remote identity verification, unstable image capture introduces several technical failure points.

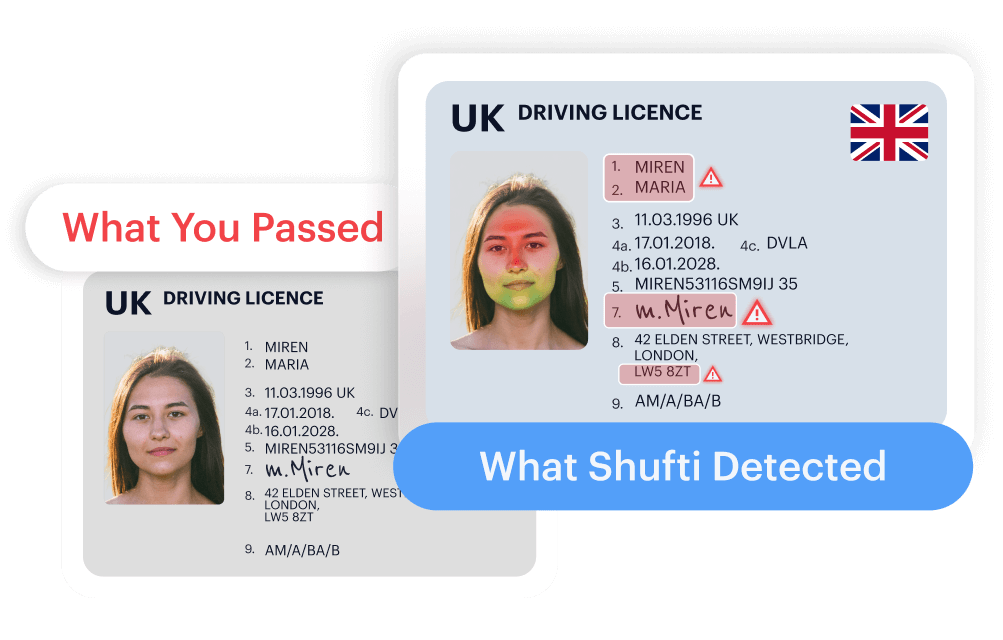

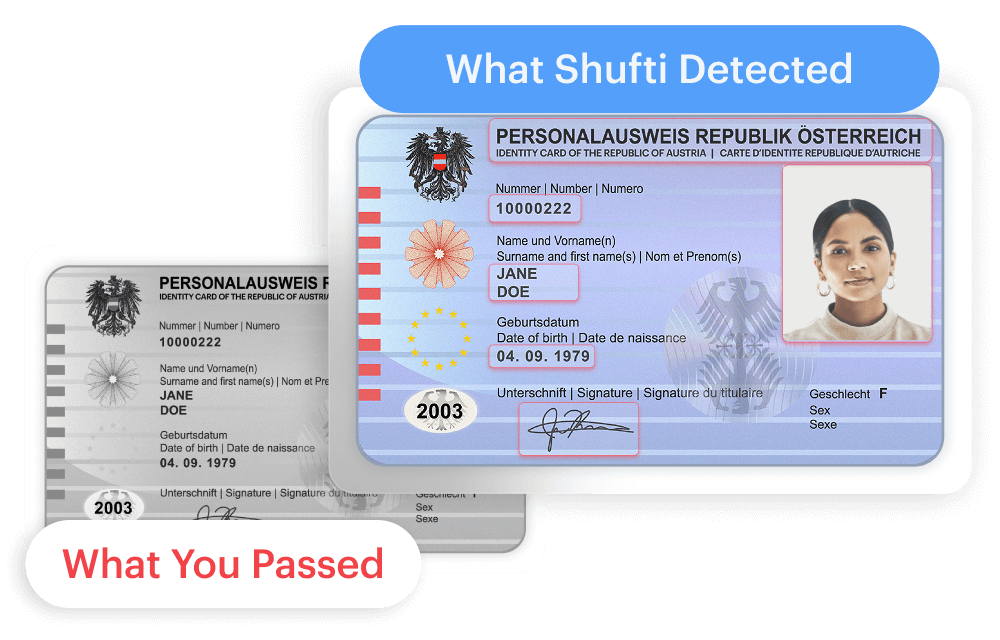

Research also explains why these challenges persist. Optical Character Recognition (OCR) struggles when fine details such as edges and microtext are degraded, because compression, blur, and low-resolution capture all reduce high-frequency image information.

Device and environment constraints compound the problem. Low-resolution sensors with limited dynamic range often produce over- or under-exposed images. Moreover, without guided capture mechanisms, users receive no corrective feedback on how to take a better picture.
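The two failure modes described above, lost fine detail and poor exposure, can be quantified with simple image statistics. The following is a minimal sketch, not a production metric: the function names, the clipping limits, and the synthetic striped "microtext" image are all assumptions made for illustration.

```python
from typing import List

Image = List[List[int]]  # grayscale rows, pixel values 0..255


def gradient_energy(img: Image) -> float:
    """Mean squared difference between neighbouring pixels.

    A rough proxy for how much high-frequency detail the image retains.
    """
    total, count = 0, 0
    for y, row in enumerate(img):
        for x, value in enumerate(row):
            if x + 1 < len(row):  # horizontal neighbour
                total += (row[x + 1] - value) ** 2
                count += 1
            if y + 1 < len(img):  # vertical neighbour
                total += (img[y + 1][x] - value) ** 2
                count += 1
    return total / count if count else 0.0


def clipped_fraction(img: Image, low: int = 5, high: int = 250) -> float:
    """Share of pixels that are nearly black or nearly white (illustrative limits)."""
    pixels = [v for row in img for v in row]
    return sum(1 for v in pixels if v <= low or v >= high) / len(pixels)


def box_blur(img: Image) -> Image:
    """3x3 mean filter with edge clamping, standing in for defocus or heavy compression."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        out_row = []
        for x in range(w):
            window = [
                img[min(max(y + dy, 0), h - 1)][min(max(x + dx, 0), w - 1)]
                for dy in (-1, 0, 1)
                for dx in (-1, 0, 1)
            ]
            out_row.append(sum(window) // 9)
        out.append(out_row)
    return out


# Synthetic "microtext": alternating dark and light one-pixel columns.
sharp = [[40 if x % 2 == 0 else 200 for x in range(16)] for _ in range(16)]
blurred = box_blur(sharp)

print(gradient_energy(sharp), gradient_energy(blurred))
print(clipped_fraction(sharp))
```

On this synthetic input the blurred copy keeps only a fraction of the gradient energy of the sharp one, which is the kind of detail loss that makes microtext hard for OCR to read.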

System-level impact is immediate and measurable:

- OCR errors lead to inaccurate data extraction

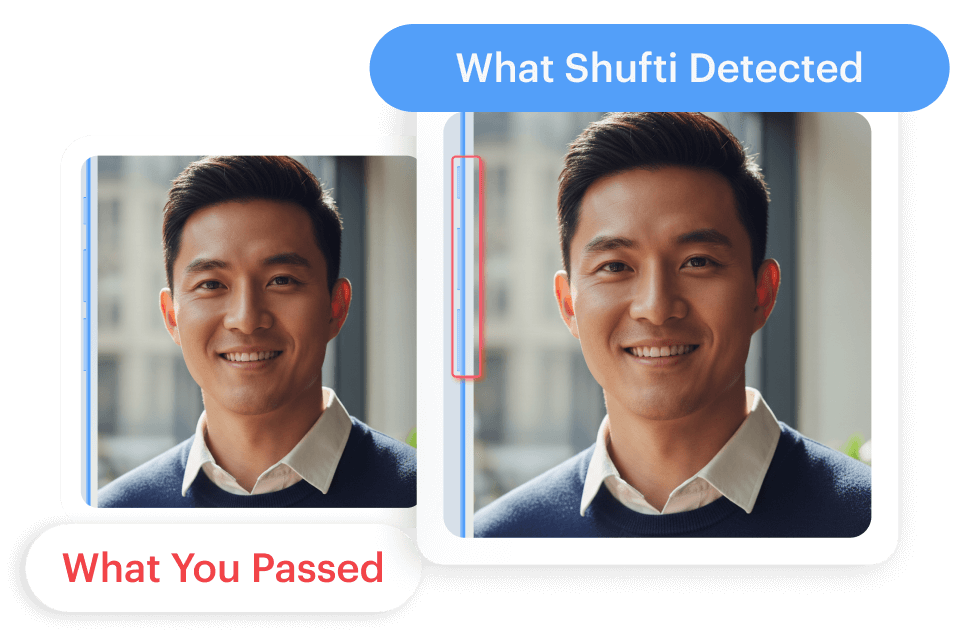

- Facial mismatches affect biometric verification outcomes

- False negatives increase, triggering unnecessary rejections

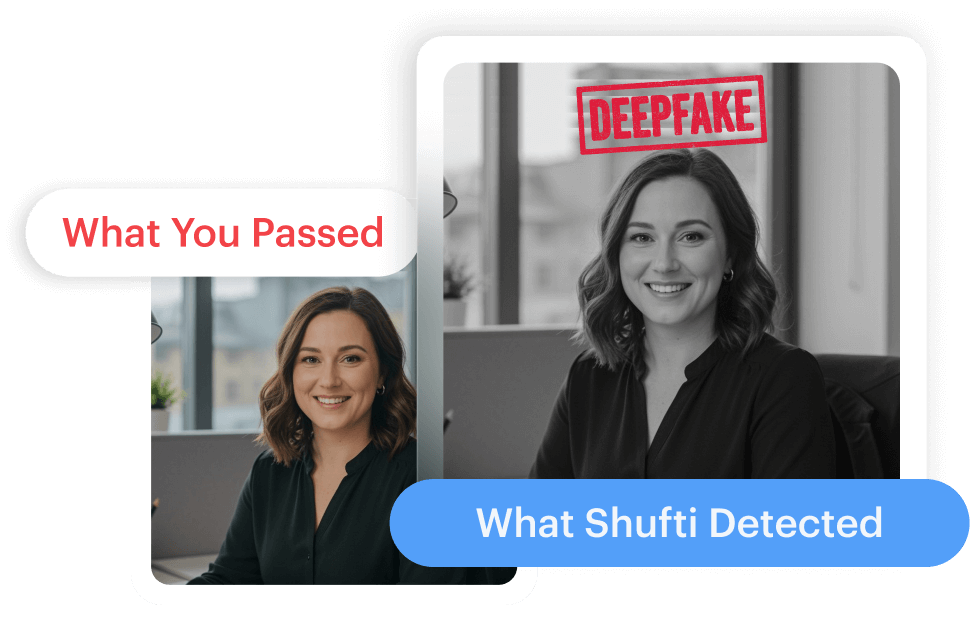

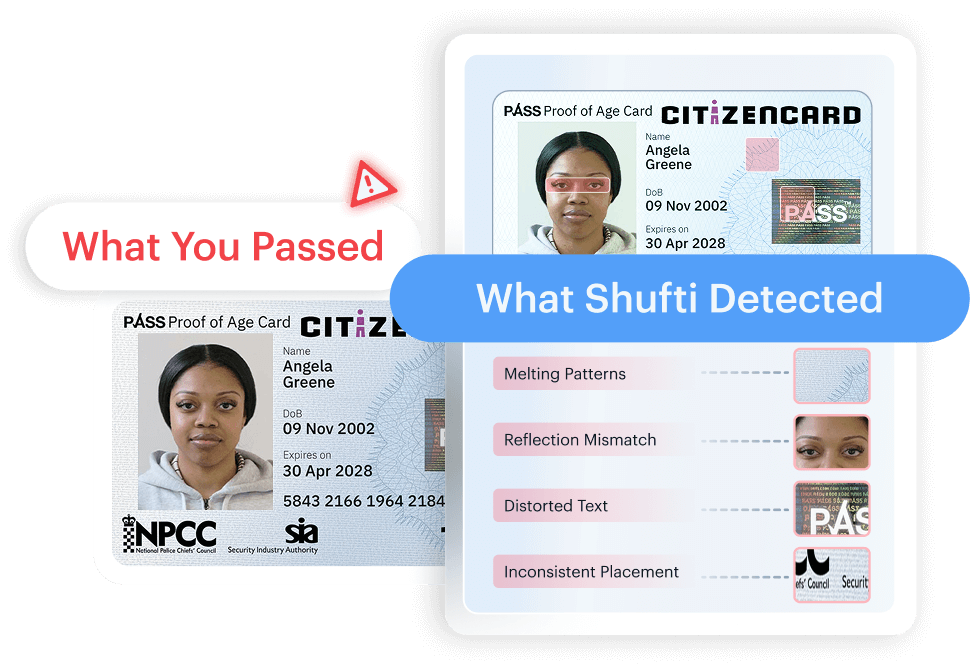

The problem becomes even more complicated when a system cannot distinguish a genuinely low-quality scan from a tampered or manipulated image. This ambiguity weakens forensic indicators and increases the chance of fraud. As a result, organizations face higher manual review costs, more onboarding drop-offs, and greater exposure to synthetic identity fraud.

How to Address Low-Quality Input Challenges in Identity Verification?

To address verification failures, organizations need to reconsider the core approach to system design. Instead of demanding perfect inputs, they should develop digital ID verification systems that operate effectively even under less-than-perfect conditions.

This approach combines targeted technical strategies that manage input variability at every stage of the process.

AI-Powered Image Enhancement & Preprocessing

Advanced preprocessing techniques improve input usability before analysis. Deblurring, denoising, and super-resolution methods restore degraded visual signals, while contrast normalization enhances readability. Research indicates that reconstructing lost frequency content directly improves the accuracy of OCR and biometric verification.
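As a rough illustration of two of these steps, the sketch below applies classical denoising (a 3x3 median filter) followed by contrast normalization (a percentile stretch). It is a simplified stand-in and not the AI-based methods the section refers to; deblurring and super-resolution typically rely on learned models and are not shown. The function names and percentile defaults are assumptions.

```python
from statistics import median
from typing import List

Image = List[List[int]]  # grayscale rows, pixel values 0..255


def median_denoise(img: Image) -> Image:
    """Replace each pixel with the median of its 3x3 neighbourhood (edges clamped)."""
    h, w = len(img), len(img[0])
    return [
        [
            int(median(
                img[min(max(y + dy, 0), h - 1)][min(max(x + dx, 0), w - 1)]
                for dy in (-1, 0, 1)
                for dx in (-1, 0, 1)
            ))
            for x in range(w)
        ]
        for y in range(h)
    ]


def stretch_contrast(img: Image, low_pct: float = 0.02, high_pct: float = 0.98) -> Image:
    """Linearly map the chosen percentile range onto 0..255, clipping the tails."""
    pixels = sorted(v for row in img for v in row)
    lo = pixels[int(low_pct * (len(pixels) - 1))]
    hi = pixels[int(high_pct * (len(pixels) - 1))]
    if hi == lo:  # flat image, nothing to stretch
        return [row[:] for row in img]
    scale = 255 / (hi - lo)
    return [[min(255, max(0, round((v - lo) * scale))) for v in row] for row in img]


# Low-contrast patch (values 100 and 120) with one noisy "salt" pixel in the centre.
noisy = [[100, 100, 100, 120, 120] for _ in range(5)]
noisy[2][2] = 255

cleaned = median_denoise(noisy)
enhanced = stretch_contrast(cleaned)
```

Denoising runs first so that the outlier pixel does not set the upper end of the contrast stretch.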

Adaptive OCR & Document Recognition

Contemporary OCR systems need to be trained on noisy and compressed data. Context-aware extraction makes it possible to reconstruct partially visible or obscured fields and helps preserve data integrity even when the input is incomplete.
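One concrete form of context-aware extraction, chosen here purely as an illustration since the article does not name a specific technique, is to use the check digits in a passport's machine-readable zone (MRZ) to detect and repair misread characters. The check digit calculation follows the 7-3-1 weighting defined in ICAO Doc 9303; the confusion table and the `repair_field` helper are hypothetical.

```python
from typing import List

WEIGHTS = (7, 3, 1)  # repeating weights used by MRZ check digits

# Hypothetical table of characters that OCR commonly confuses on degraded images.
CONFUSABLE = {"O": "0", "0": "O", "I": "1", "1": "I",
              "S": "5", "5": "S", "B": "8", "8": "B"}


def char_value(ch: str) -> int:
    """Numeric value of an MRZ character: digits as-is, A..Z as 10..35, '<' as 0."""
    if "0" <= ch <= "9":
        return int(ch)
    if "A" <= ch <= "Z":
        return ord(ch) - ord("A") + 10
    if ch == "<":
        return 0
    raise ValueError(f"invalid MRZ character: {ch!r}")


def check_digit(field: str) -> int:
    """Weighted sum of character values, modulo 10."""
    return sum(char_value(c) * WEIGHTS[i % 3] for i, c in enumerate(field)) % 10


def repair_field(field: str, expected: int) -> List[str]:
    """Return the field if it validates, else every single-character swap that does."""
    if check_digit(field) == expected:
        return [field]
    candidates = []
    for i, ch in enumerate(field):
        swap = CONFUSABLE.get(ch)
        if swap is not None:
            fixed = field[:i] + swap + field[i + 1:]
            if check_digit(fixed) == expected:
                candidates.append(fixed)
    return candidates


# OCR misread a zero as the letter O; the check digit printed on the document is 6.
print(repair_field("L8989O2C3", 6))
```

Because a single check digit cannot always single out one correction, the helper returns a list; an empty or multi-entry result would be a reasonable trigger for recapture or manual review.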

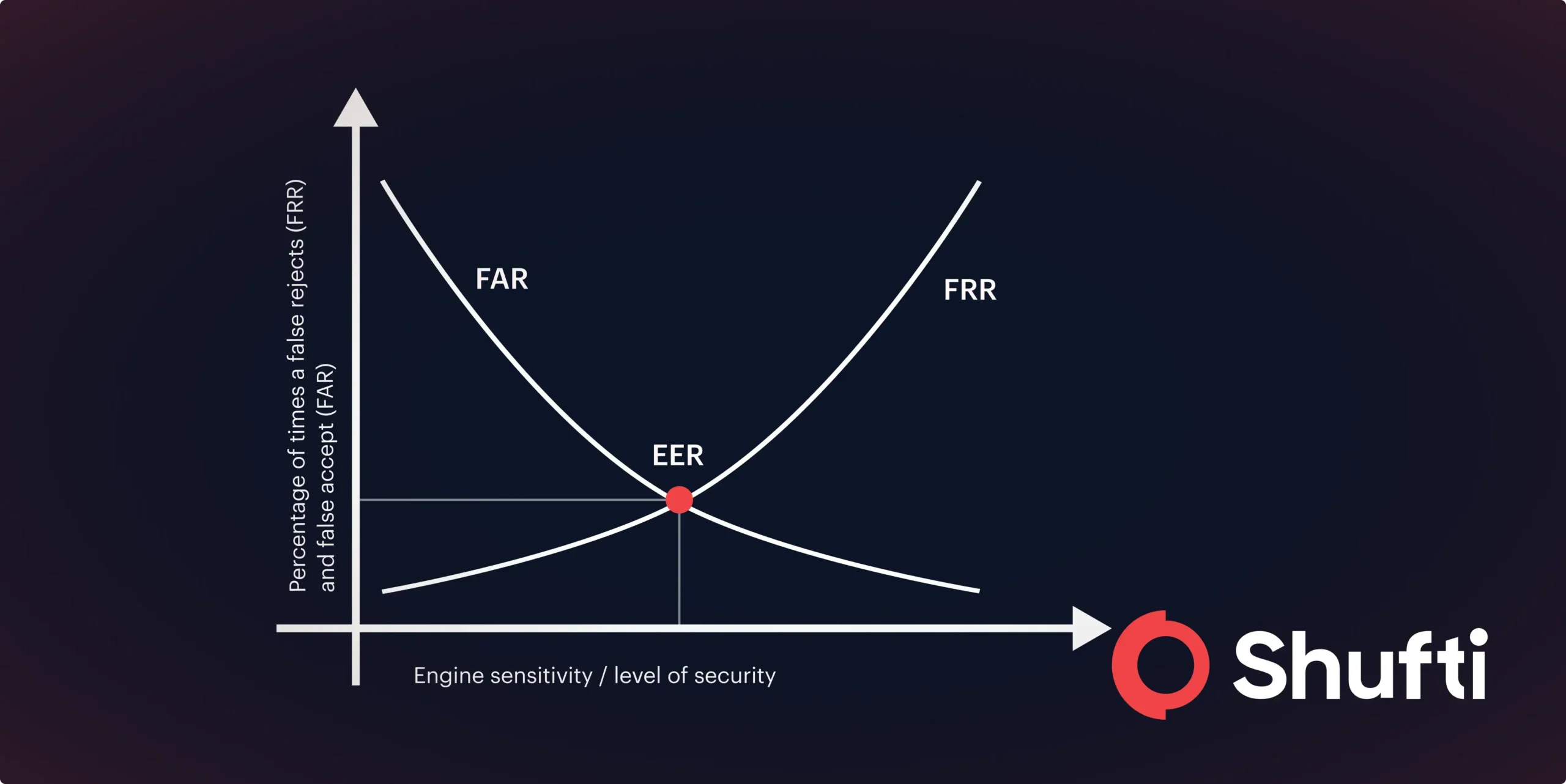

Robust Biometric Verification

Facial recognition systems need to be optimized for low-resolution input and device variability. Multi-frame analysis and probabilistic matching improve noise resistance, while liveness detection has to work at low frame rates and under poor lighting.
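A simple way to picture multi-frame analysis is to compare the reference face against several video frames and weight each comparison by that frame's quality, so one blurry frame cannot dominate the outcome. The sketch below assumes face embeddings and per-frame quality scores are already available from upstream models; the two-dimensional vectors and all names are made up for illustration, and a quality-weighted mean is only a basic stand-in for a full probabilistic matcher.

```python
import math
from typing import List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def fused_similarity(
    reference: Sequence[float],
    frames: List[Sequence[float]],
    qualities: List[float],
) -> float:
    """Quality-weighted mean of per-frame similarities to the reference embedding."""
    total_weight = sum(qualities)
    if total_weight == 0:
        return 0.0
    weighted = sum(q * cosine(reference, f) for f, q in zip(frames, qualities))
    return weighted / total_weight


# Toy example: one clean frame that matches, one degraded frame that does not.
reference = [1.0, 0.0]
frames = [[1.0, 0.0], [0.0, 1.0]]
qualities = [0.9, 0.1]  # hypothetical per-frame quality scores in 0..1

score = fused_similarity(reference, frames, qualities)
```

With equal weights the toy example would score 0.5; weighting by quality lets the clean frame carry most of the decision.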

Guided User Capture (UX Layer)

Real-time prompts for lighting, positioning, and focus minimize capture errors. Automated capture at predetermined quality thresholds reduces variability at the source of mobile identity verification flows.
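The prompt-and-capture logic can be sketched as a small state machine over per-frame quality metrics. This is an assumption-laden illustration: the `FrameMetrics` fields, the threshold values, the prompt wording, and the rule of waiting for three consecutive good frames are all hypothetical, and the metrics themselves would come from an on-device analyzer that is not shown.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class FrameMetrics:
    brightness: float  # mean luminance, 0..255
    sharpness: float   # focus score, higher is sharper (scale is hypothetical)
    coverage: float    # fraction of the frame occupied by the document, 0..1


# Illustrative thresholds; real values would be tuned per device class.
MIN_BRIGHTNESS, MAX_BRIGHTNESS = 60.0, 200.0
MIN_SHARPNESS = 100.0
MIN_COVERAGE = 0.5
STABLE_FRAMES = 3  # consecutive acceptable frames required before capturing


def prompt_for(m: FrameMetrics) -> Optional[str]:
    """Return a corrective prompt for the user, or None if the frame is acceptable."""
    if m.brightness < MIN_BRIGHTNESS:
        return "Move to a brighter area"
    if m.brightness > MAX_BRIGHTNESS:
        return "Reduce glare or avoid direct light"
    if m.coverage < MIN_COVERAGE:
        return "Move the document closer"
    if m.sharpness < MIN_SHARPNESS:
        return "Hold the phone steady"
    return None


def auto_capture_index(stream: List[FrameMetrics]) -> Optional[int]:
    """Index of the frame that completes STABLE_FRAMES acceptable frames in a row."""
    streak = 0
    for i, metrics in enumerate(stream):
        streak = streak + 1 if prompt_for(metrics) is None else 0
        if streak >= STABLE_FRAMES:
            return i
    return None


dark = FrameMetrics(brightness=30, sharpness=150, coverage=0.7)
blurry = FrameMetrics(brightness=120, sharpness=40, coverage=0.7)
good = FrameMetrics(brightness=120, sharpness=150, coverage=0.7)

stream = [dark, good, blurry, good, good, good]
print(prompt_for(dark), auto_capture_index(stream))
```

Requiring a short run of good frames, rather than a single one, avoids capturing during a momentary pause in hand shake.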

Multi-Layered Verification Approach

Combining document checks, biometrics, and database validation reduces reliance on any single degraded signal. This layered approach strengthens decision accuracy.
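One way to express this layering in code is to set aside signals whose input quality is too poor to trust and to decide only on the remaining ones. The sketch below is a hypothetical policy, not a description of any vendor's decision engine: the signal names, the reliability cut-off, the pass score, and the rule of requiring at least two usable signals are all assumptions.

```python
from typing import Dict, Tuple

# Each signal is (score, reliability), both in 0..1. Reliability reflects input
# quality, for example how legible the document image was.
Signals = Dict[str, Tuple[float, float]]


def layered_check(
    signals: Signals,
    min_reliability: float = 0.5,
    pass_score: float = 0.8,
) -> str:
    """Decide using only signals reliable enough to trust.

    Returns "pass", "fail", or "manual_review" when fewer than two signals are usable.
    """
    usable = {
        name: score
        for name, (score, reliability) in signals.items()
        if reliability >= min_reliability
    }
    if len(usable) < 2:
        return "manual_review"
    return "pass" if all(score >= pass_score for score in usable.values()) else "fail"


# The document image was badly compressed, so its low score is set aside
# and the decision rests on the biometric and database checks.
result = layered_check({
    "document": (0.4, 0.2),
    "biometric": (0.9, 0.8),
    "database": (1.0, 1.0),
})
print(result)
```

The design choice here is that a degraded signal neither passes nor fails the user by itself; it simply drops out, and too few remaining signals escalates to a human.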

Collectively, these measures contribute to higher verification success rates, fewer false rejections, and reliable onboarding in emerging markets.

Closing the Low-Quality Input Gap for Engineering, Compliance, and Risk

Specialized preprocessing techniques such as AI-based deblurring, denoising, super-resolution, and contrast normalization can improve low-quality images so that important visual details can be extracted and analyzed. Training OCR and biometric models on noisy datasets makes them more resilient as well.

Businesses need to adopt a risk-based, multi-layered verification strategy to maintain regulatory compliance when inputs are inconsistent. This involves combining document checks, biometrics, and database checks with region-specific thresholds to maintain accuracy without undue rejections.
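Region-specific thresholds can be expressed as plain configuration. In the hedged sketch below, the region keys and numeric values are invented for illustration; the acceptance bar is kept the same everywhere, while the automatic rejection bar is lowered where degraded input is common, so borderline cases go to manual review instead of being rejected outright.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    accept: float  # scores at or above this are accepted automatically
    reject: float  # scores below this are rejected automatically


# Hypothetical configuration; real values would come from risk and compliance teams.
REGION_THRESHOLDS = {
    "default": Thresholds(accept=0.85, reject=0.50),
    "constrained_network": Thresholds(accept=0.85, reject=0.35),
}


def decide(score: float, region: str) -> str:
    """Map a verification score to accept, reject, or manual_review for a region."""
    t = REGION_THRESHOLDS.get(region, REGION_THRESHOLDS["default"])
    if score >= t.accept:
        return "accept"
    if score < t.reject:
        return "reject"
    return "manual_review"


print(decide(0.45, "default"), decide(0.45, "constrained_network"))
```

Widening only the review band is one possible trade-off: it reduces false rejections without relaxing the level of confidence required for automatic approval.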

Failure to adapt can have serious consequences, including increased fraud risk, persistent onboarding problems, high operational costs, and closer regulatory scrutiny. Ultimately, systems that cannot process low-quality inputs will struggle to scale and may be unable to grow in new markets where such conditions are the norm.

Shufti: Redefining Identity Verification for Imperfect Inputs

Shufti enables reliable identity verification in real-world conditions by addressing inconsistent input quality.

Its AI models are trained on diverse low-quality data and supported by advanced preprocessing to handle noise and compression.

With lightweight SDKs for low-bandwidth environments and robust biometric verification, Shufti improves accuracy, ensures compliance, and streamlines onboarding while strengthening fraud prevention.

Book a Demo and discover how Shufti helps you verify identities anywhere, regardless of image quality.

Frequently Asked Questions

How can you verify identities from low-quality phone images?

Verify identities from low-quality phone images using AI models trained on noisy data, combined with preprocessing techniques that enhance clarity and extract reliable features.

Can AI verify identity documents with poor-quality photos?

Yes, AI can verify identity documents from poor-quality photos by correcting distortions, reducing noise, and matching key data points against trusted databases.

How to automate KYC verification from smartphone uploads?

Automate KYC verification from smartphone uploads by integrating AI-driven OCR, biometric face matching, and real-time validation into a seamless mobile workflow.

What is the best AI-powered ID verification solution for emerging markets?

The best AI-powered ID verification solutions for emerging markets are those optimized for low bandwidth, trained on diverse data, and capable of handling inconsistent image quality.
