6 Lessons for Strengthening VideoIdent Against Deepfake Fraud

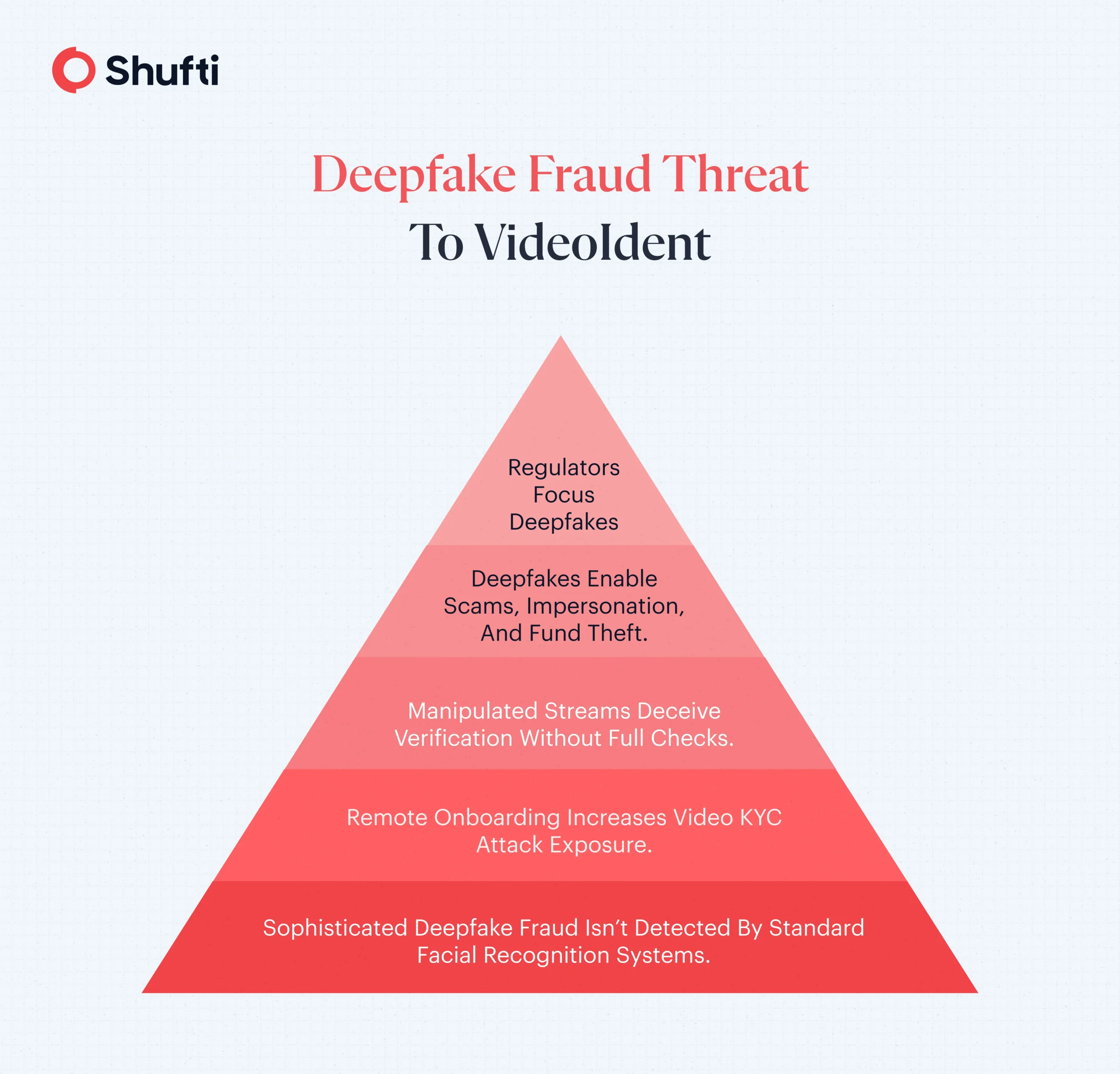

Deepfake-related attacks now represent a measurable portion of fraud cases. Data shows that deepfake methods accounted for 6.5% of all fraud attacks, an increase from 2022, which makes deepfake-related fraud one of the fastest-growing threats to digital identities. In 2026, VideoIdent is a mainstream option for remote onboarding and step-up verification, especially where higher assurance is required. Digital wallets, neobanks, crypto platforms, and even telecom services use VideoIdent to onboard customers remotely.

How Deepfakes Exploit VideoIdent

While video-based onboarding is fast, the emergence of deepfakes poses a major threat to video KYC. Fraudsters create realistic fake video or audio that mimics real users and bypasses traditional liveness checks. Typical techniques include:

- Face swapping

- Voice cloning

- Video injection attacks

- Virtual camera spoofing

The surge in deepfake fraud has put regulators on higher alert than ever. Banks that rely on traditional video KYC methods and processes struggle against advanced deepfake fraud. The same modern technology that made remote video verification possible has also made identity fraud more sophisticated.

Why VideoIdent Needs a Hybrid Model Against Deepfake Threats

Fraudsters have advanced their tactics beyond traditional methods; they no longer need stolen documents or impersonators. They can now produce facial simulations that appear genuine, replicate human speech patterns, and build complete virtual identities that hold up during video interactions.

Deepfakes expose severe weaknesses in the methods businesses use to establish identity, detect liveness, and assess trustworthiness. These are more than simple technical problems: they reveal fundamental truths that will reshape digital authentication. A hybrid model for VideoIdent combines human expertise with AI technologies to detect deepfakes and protect remote identity verification against them. The next stage calls for new solutions and deeper expertise to address today’s identity verification challenges.

1. Identity can no longer be proven by facial similarity alone

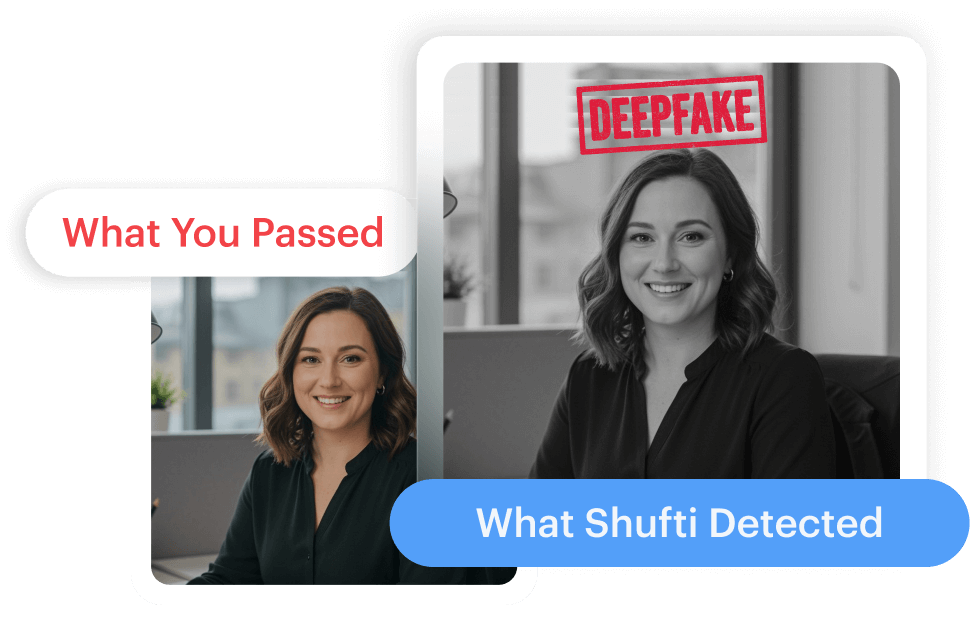

Matching a user’s face to an ID was once enough. Today, it isn’t. Deepfake technology can generate faces that resemble real individuals convincingly. Just looking at the camera does not prove that a real person is behind it.

Identity should not depend only on appearance. Behavior, context, consistency across sessions, and risk signals also matter. How a person reacts during verification, how their data matches across different systems, and whether their actions are consistent over time are just as important as their appearance. Trust shifts from relying on facial recognition alone to understanding identity through combined intelligence.

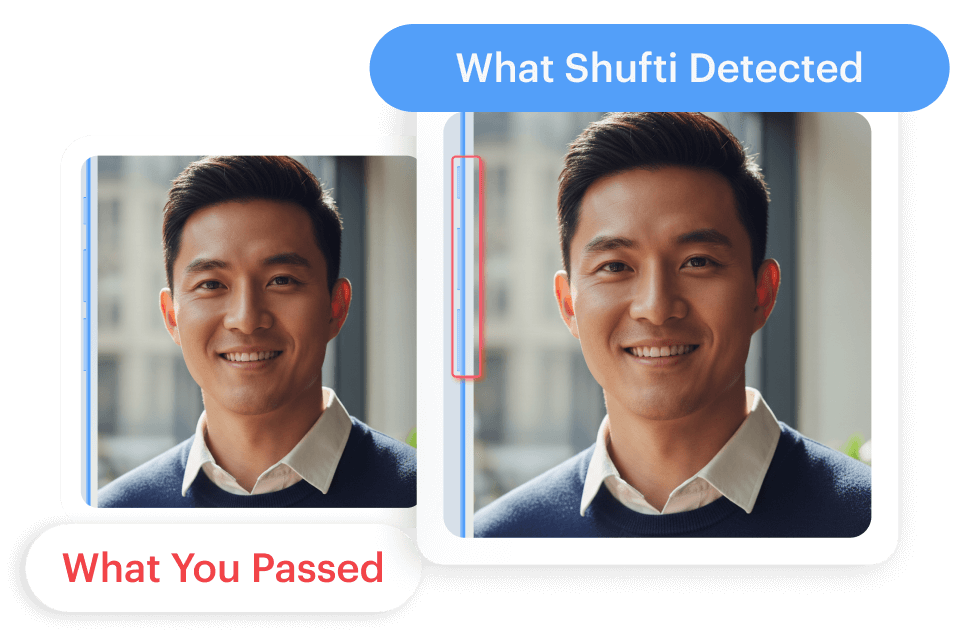

2. Basic blink tests are not enough

Basic blink tests do not hold up against advanced face swaps. Verifying presence cannot rely solely on choreography, that is, on asking the user to perform a scripted action. To truly ensure liveness, we must look beyond performance and examine biological indicators. Multi-frame analysis of micro-motion, micro-expressions, subtle changes in skin texture, and spontaneous reactions, all of which deepfakes struggle to reproduce, has become essential.
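
As a rough illustration of multi-frame analysis, the sketch below looks at how far a single tracked facial point moves between consecutive frames. It assumes landmark coordinates have already been extracted by some face tracker (not shown). The function names and thresholds are hypothetical and uncalibrated; production systems typically rely on trained models rather than fixed cut-offs.

```python
from math import hypot
from statistics import mean, pstdev

def frame_displacements(track):
    """Distance (in pixels) one landmark moves between consecutive frames."""
    return [hypot(x2 - x1, y2 - y1)
            for (x1, y1), (x2, y2) in zip(track, track[1:])]

def micromotion_flag(track, min_mean=0.05, max_std=2.0):
    """Classify a landmark track as 'too_static', 'too_jittery' or 'plausible'.

    min_mean and max_std are illustrative assumptions, not calibrated values.
    """
    moves = frame_displacements(track)
    if len(moves) < 2:
        raise ValueError("need at least three frames")
    if mean(moves) < min_mean:
        return "too_static"      # almost no natural micro-motion
    if pstdev(moves) > max_std:
        return "too_jittery"     # erratic jumps between frames
    return "plausible"

# Hypothetical track of one landmark over five frames.
track = [(10.0, 10.0), (10.1, 10.0), (10.1, 10.2), (10.3, 10.2), (10.3, 10.3)]
print(micromotion_flag(track))
```

A flag from a check like this would be one signal among many, not a verdict on its own.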

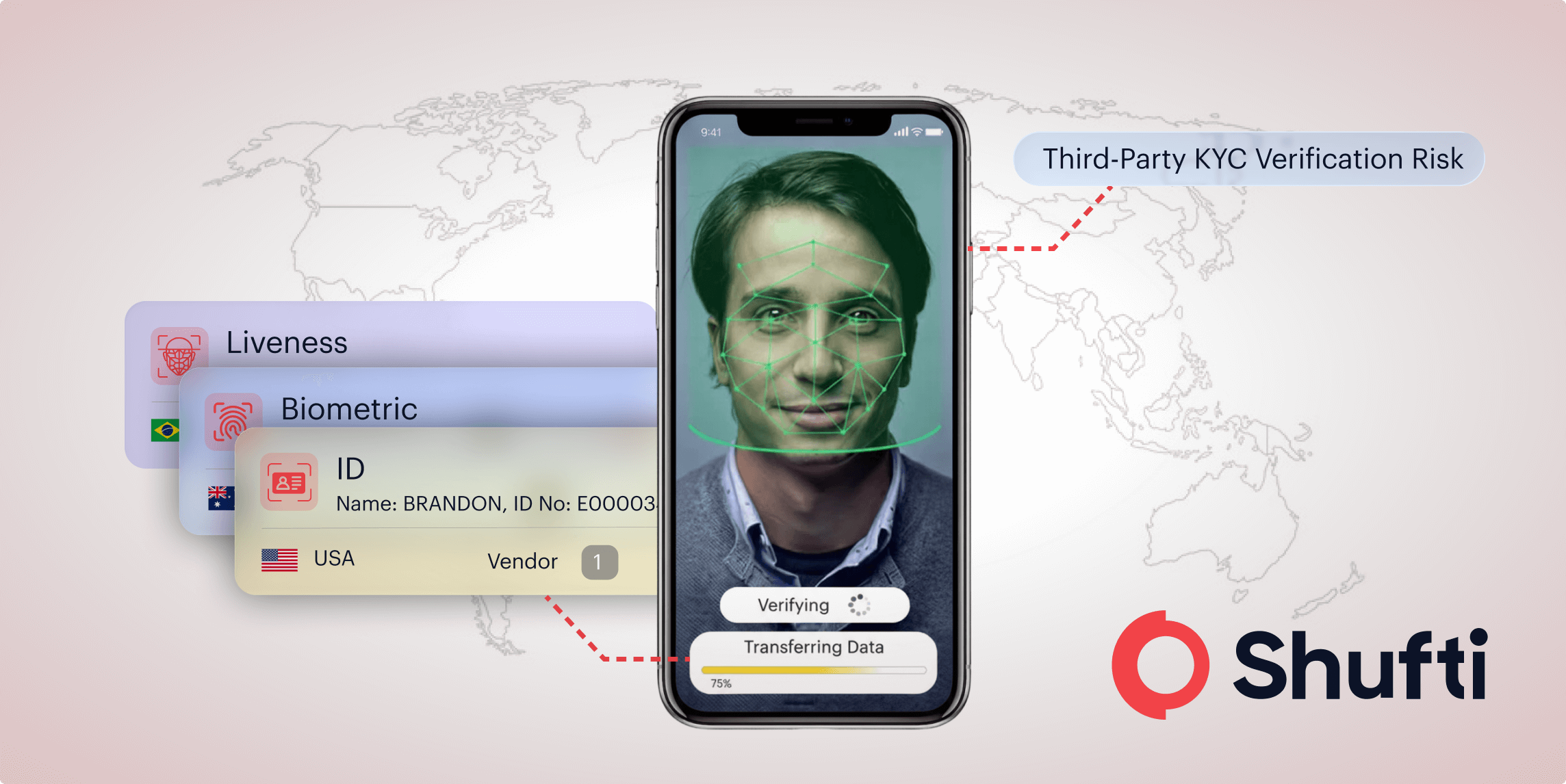

3. Deepfake fraud now enters through injected video streams

Fraud can hide not just in faces, but also in cameras and video sessions. Attackers use virtual cameras and fake streams to get around normal checks. They can edit videos or replay previous sessions to trick Video KYC systems.

To solve this problem, verifying the video source is crucial. Session integrity controls and device risk signals help reduce injection and replay risk. A hybrid model that combines AI-assisted face matching, liveness detection, and document verification with agent-led presence monitoring and user consent capture enhances security and makes verification more reliable. If the camera cannot be trusted, the whole system fails. Keeping the session secure is therefore just as important as verifying a person’s identity.
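
One common building block for session integrity is a short-lived, single-use challenge that the server signs and later verifies, so a recording of an earlier session cannot simply be replayed. The following is a minimal sketch under stated assumptions: `SERVER_KEY`, `issue_challenge`, and `verify_challenge` are hypothetical names, the in-memory set stands in for a real store, and binding the challenge to the live capture (for example by having the user read it aloud) is outside the scope of the example.

```python
import hashlib
import hmac
import secrets
import time

SERVER_KEY = secrets.token_bytes(32)  # hypothetical per-deployment secret
_used_nonces = set()                  # stand-in for a shared, expiring store

def _sign(session_id, nonce, issued):
    msg = f"{session_id}|{nonce}|{issued}".encode()
    return hmac.new(SERVER_KEY, msg, hashlib.sha256).hexdigest()

def issue_challenge(session_id, now=None):
    """Create a signed one-time challenge for a video session."""
    issued = str(int(time.time() if now is None else now))
    nonce = secrets.token_hex(16)
    return {"session_id": session_id, "nonce": nonce, "issued": issued,
            "tag": _sign(session_id, nonce, issued)}

def verify_challenge(ch, max_age_s=30, now=None):
    """Return 'ok', 'tampered', 'expired' or 'replayed'."""
    now = time.time() if now is None else now
    expected = _sign(ch["session_id"], ch["nonce"], ch["issued"])
    if not hmac.compare_digest(expected, ch["tag"]):
        return "tampered"
    if now - int(ch["issued"]) > max_age_s:
        return "expired"
    if ch["nonce"] in _used_nonces:
        return "replayed"
    _used_nonces.add(ch["nonce"])  # each challenge is accepted only once
    return "ok"
```

The 30-second lifetime is an arbitrary choice for illustration; the right value depends on how long a guided session step is expected to take.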

4. Visual trust collapses when audio tells a different story

A credible appearance alone is insufficient if the voice is manipulated. Deepfake attacks frequently integrate both video and audio elements to deceive verification systems.

It’s essential to check that lip movements stay synchronized with real-time speech and to monitor the voice for any unnatural resonance. Any desynchronization or anomaly should be flagged, as discrepancies between visual and audio cues can expose fraudulent activity.
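
A simple way to reason about lip-sync checking is to compare two per-frame series: how open the mouth is and how loud the audio is. The sketch below, a toy under stated assumptions, finds the time shift at which the two series correlate best. It assumes both series are already extracted, equally long, and sampled at the video frame rate; `best_lag` is a hypothetical helper, and real detectors generally use learned audio-visual models.

```python
from statistics import mean

def pearson(a, b):
    """Pearson correlation of two equal-length sequences (0.0 if undefined)."""
    ma, mb = mean(a), mean(b)
    num = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    den = (sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b)) ** 0.5
    return num / den if den else 0.0

def best_lag(mouth, audio, max_lag=3):
    """Return (lag, correlation) for the best-matching shift.

    A positive lag means the audio trails the mouth movement by that many frames.
    """
    best = (0, -1.0)
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            m, a = mouth[:len(mouth) - lag], audio[lag:]
        else:
            m, a = mouth[-lag:], audio[:len(audio) + lag]
        r = pearson(m, a)
        if r > best[1]:
            best = (lag, r)
    return best

# Hypothetical series: mouth opening per frame, and audio delayed by 2 frames.
mouth = [0, 0, 1, 3, 1, 0, 0, 2, 4, 2, 0, 0]
audio = [0, 0] + mouth[:-2]
lag, corr = best_lag(mouth, audio)
print(lag, round(corr, 3))
```

A session could then be flagged when the best lag exceeds a tolerance or when even the best correlation stays low; both limits would need tuning on real data.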

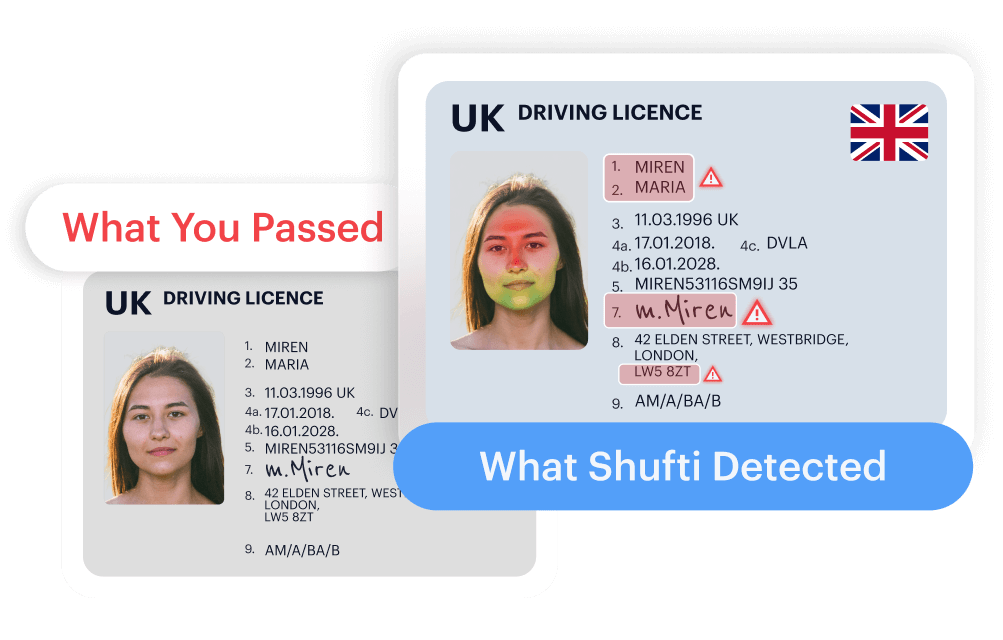

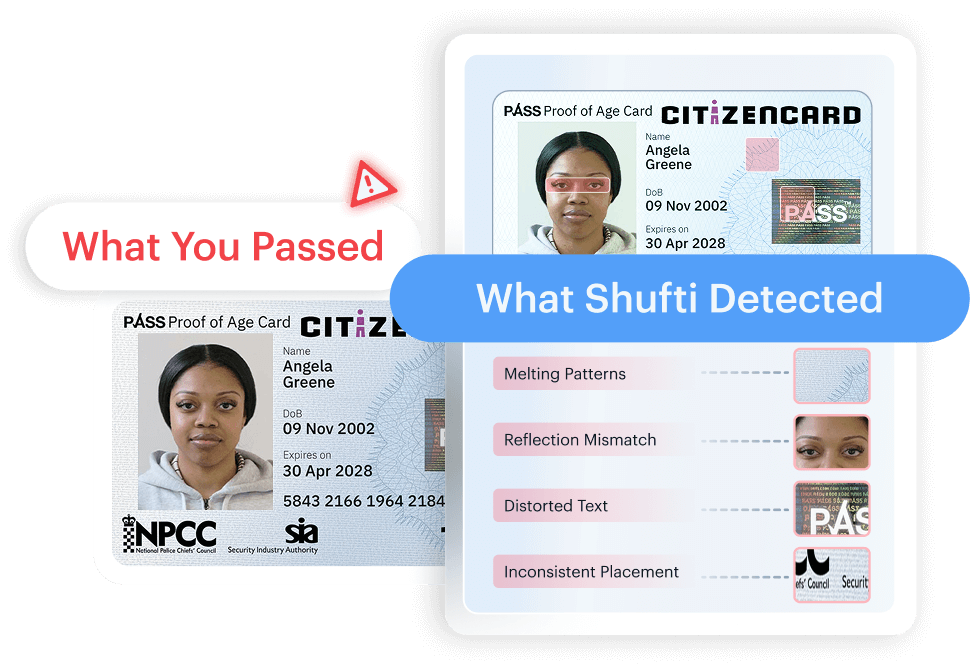

5. A single verification method cannot sustain trust

Relying solely on one method of verification is not enough since any single control can fail or be compromised. A video session may prove the presence of a real human, but building trust requires the use of multiple verification layers throughout that interaction.

This includes a trained expert monitoring the call, real-time liveness and deepfake detection running in the background, and document authenticity checks against official databases. When these methods work together, the chances of fraud are significantly reduced. Security is no longer based on one action, but on the combined strength of multiple signals.

Effective identity verification requires multiple layers, including:

- Facial biometrics

- Behavioral signals (head pose, eye motion, gestures)

- Document authenticity

- Device and network intelligence

When these layers intersect, anomalies stand out. Trust is not captured in one interaction. It is assembled across systems.
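
To make the idea of intersecting layers concrete, here is a minimal sketch of how per-layer risk scores might be fused into one decision. Everything in it is an assumption for illustration: the `LayerResult` type, the weights, and the thresholds are hypothetical, and the "manual_review" outcome stands in for escalation to a trained expert.

```python
from dataclasses import dataclass

@dataclass
class LayerResult:
    name: str
    risk: float    # 0.0 = no concern, 1.0 = strong evidence of fraud
    weight: float  # relative importance of this layer (assumed values)

def fuse(layers, review_at=0.3, reject_at=0.6, veto_at=0.9):
    """Combine layer risks into (decision, weighted_score)."""
    for layer in layers:
        if not 0.0 <= layer.risk <= 1.0:
            raise ValueError(f"risk out of range for {layer.name}")
    total_weight = sum(layer.weight for layer in layers)
    score = sum(layer.risk * layer.weight for layer in layers) / total_weight
    # A single very strong signal rejects the session regardless of the average.
    if any(layer.risk >= veto_at for layer in layers):
        return "reject", score
    if score >= reject_at:
        return "reject", score
    if score >= review_at:
        return "manual_review", score
    return "approve", score

session = [
    LayerResult("facial_biometrics", 0.10, 0.3),
    LayerResult("behavioral_signals", 0.10, 0.2),
    LayerResult("document_authenticity", 0.05, 0.3),
    LayerResult("device_and_network", 0.10, 0.2),
]
print(fuse(session)[0])
```

The veto rule reflects the point that any single control can fail: a clean average should not hide one layer that reports near-certain fraud.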

6. Static verification cannot keep pace with evolving fraud

Static defenses are falling behind as deepfake technology continues to evolve quickly. To tackle this, organizations should run red-team simulations every three months, using up-to-date AI generators and incorporating new findings to keep up with the threats emerging in 2026.
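
A quarterly red-team run is easier to compare over time if it produces the same numbers each round. The sketch below computes two error rates named after the terminology of ISO/IEC 30107-3: APCER (attacks wrongly accepted) and BPCER (genuine users wrongly rejected). The input format and the function name are assumptions for illustration, and the per-attack-type breakdown used in formal evaluations is omitted.

```python
def pad_error_rates(results):
    """Compute (APCER, BPCER) from (is_attack, accepted) pairs.

    APCER: share of attack attempts that were accepted.
    BPCER: share of genuine attempts that were rejected.
    """
    results = list(results)
    attacks = [accepted for is_attack, accepted in results if is_attack]
    genuine = [accepted for is_attack, accepted in results if not is_attack]
    if not attacks or not genuine:
        raise ValueError("need at least one attack and one genuine attempt")
    apcer = sum(attacks) / len(attacks)
    bpcer = sum(not accepted for accepted in genuine) / len(genuine)
    return apcer, bpcer

# Hypothetical outcome of one red-team round: 4 attacks, 5 genuine sessions.
round_results = (
    [(True, False)] * 3 + [(True, True)] +
    [(False, True)] * 4 + [(False, False)]
)
print(pad_error_rates(round_results))
```

Tracking both rates matters because tightening detection to lower APCER usually pushes BPCER up, which means more genuine customers are turned away.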

How Shufti Empowers Businesses with a Secure VideoIdent Solution Amid Evolving Deepfake Threats

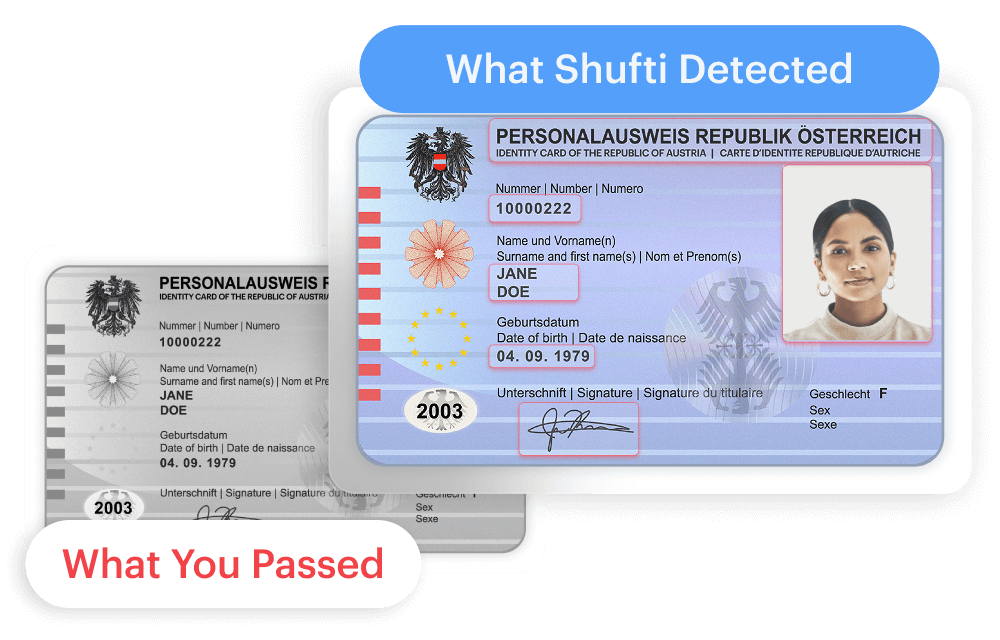

Deepfake-driven impersonation raises the assurance bar for remote onboarding and step-up verification. Shufti VideoIdent is a hybrid identity verification solution that combines live, guided video sessions with configurable checks, including face, document, address verification, AML screening, consent capture, and MFA, producing decision-ready outcomes and evidence that can support compliance reviews.

Shufti’s VideoIdent is engineered to comply with stringent jurisdictional regulations while providing enhanced digital convenience.

Request a demo to experience a unified and flexible VideoIdent journey.

Explore Now
