Deepfake-as-a-Service (DaaS): A Growing Threat to Identity Verification Systems

- 01 Deepfake Threats Are Forcing a Global Rethink of Identity Verification Compliance

- 02 Beyond Impersonation: The Rise of Synthetic Identity Fraud

- 03 How Deepfake Attacks Bypass Modern KYC Controls

- 04 Why Many Solutions Struggle to Detect Deepfakes with High Accuracy

- 05 From Deepfake Detection to Continuous Identity Assurance

- 06 The Economics of Deepfake-as-a-Service

- 07 Governance, Audit Readiness, and AI Risk Management

- 08 The Cost of Inaction: Why Organizations Must Act Before Deepfake Attacks Escalate Further

- 09 How Shufti Strengthens Identity Resilience Against Deepfake Threats

Deepfake-as-a-Service (DaaS) is rapidly changing the economics of identity fraud. Tools that once required advanced technical expertise are now packaged into accessible services that allow fraud actors to generate realistic face swaps, cloned voices, and synthetic identities on demand.

The deeper concern for financial institutions and digital platforms is not only the availability of these tools but also the pace at which they are improving. The deepfakes used in fraud attempts a few months from now will be more convincing than the synthetic media seen today, while many identity verification systems not designed to detect AI-driven impersonation are already struggling to identify manipulated identities and spoofed biometrics.

As a result, DaaS is exposing structural weaknesses in identity verification workflows that rely on static document checks or one-time onboarding verification.

Deepfake Threats Are Forcing a Global Rethink of Identity Verification Compliance

Deepfake threats are exposing structural vulnerabilities in current KYC and AML systems, especially those that rely on document-based identity verification or one-time verification at onboarding.

Regulators have long emphasized a risk-based approach. The Financial Action Task Force (FATF), in its Digital Identity Guidance, stresses the need for continuous monitoring and high levels of identity assurance. Similarly, financial regulators such as FinCEN and the FCA continue to emphasize enhanced due diligence and transaction monitoring for high-risk relationships.

Deepfakes make compliance with these mandates more complex because they call into question whether a firm can still trust the signals it previously relied on to make risk-based decisions.

This regulatory environment is driving three significant changes:

- Stricter requirements for biometric authentication and liveness checks

- Greater focus on continuous monitoring beyond onboarding

- Greater scrutiny of identity governance and audit readiness

Compliance alignment alone does not guarantee resilience. Deepfake-driven social engineering can circumvent systems that meet regulatory minimums but do not adapt to new attack patterns.

Beyond Impersonation: The Rise of Synthetic Identity Fraud

Deepfake fraud is usually associated with voice cloning or manipulated videos. The greater danger, however, extends to synthetic identity fraud.

Fraud actors now combine:

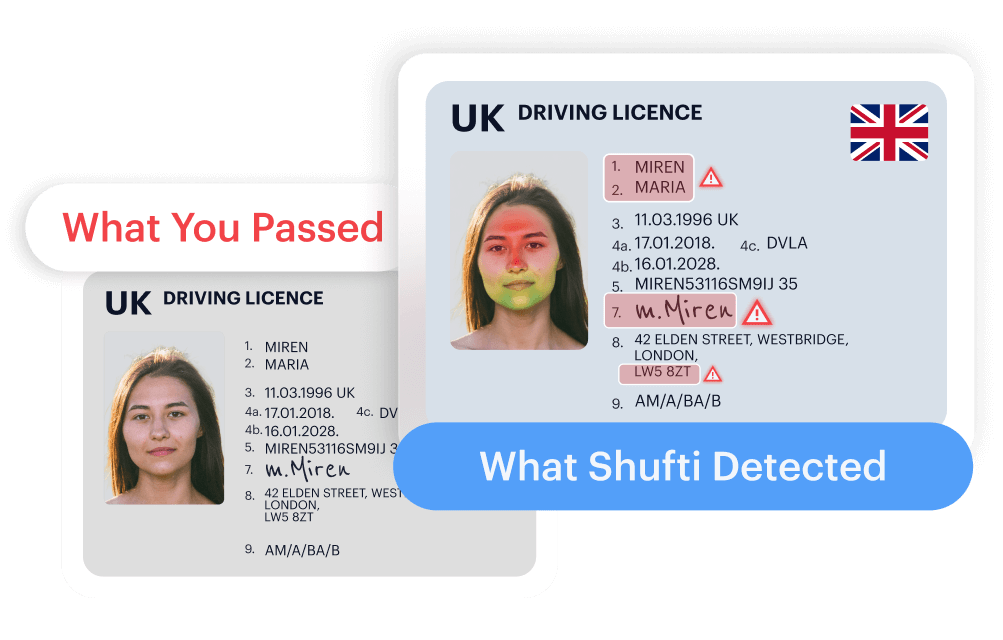

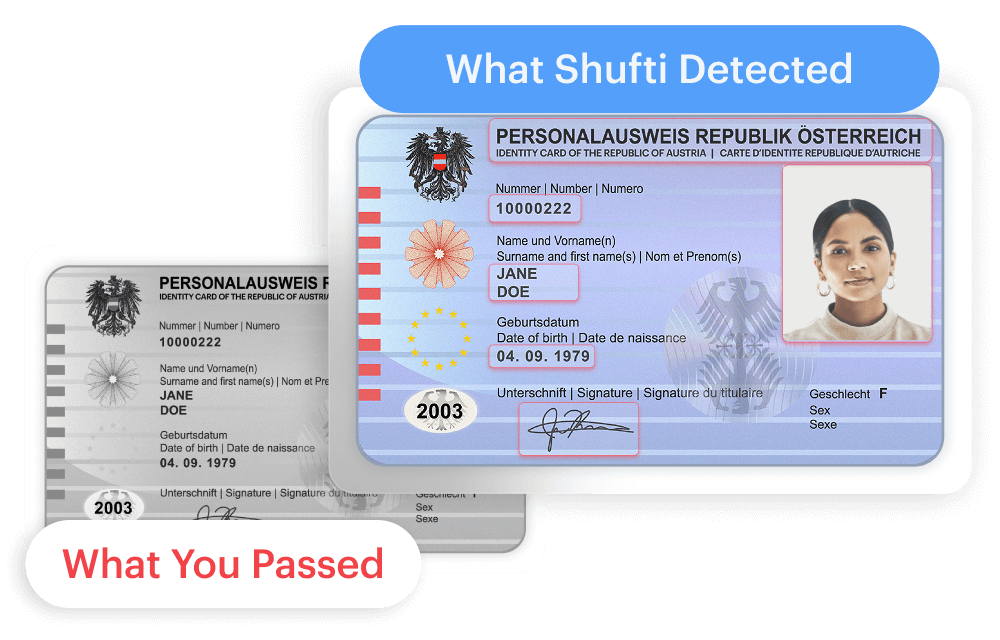

- Fake identity papers created by AI.

- Fabricated biometric data

- Stolen but fragmented personal information

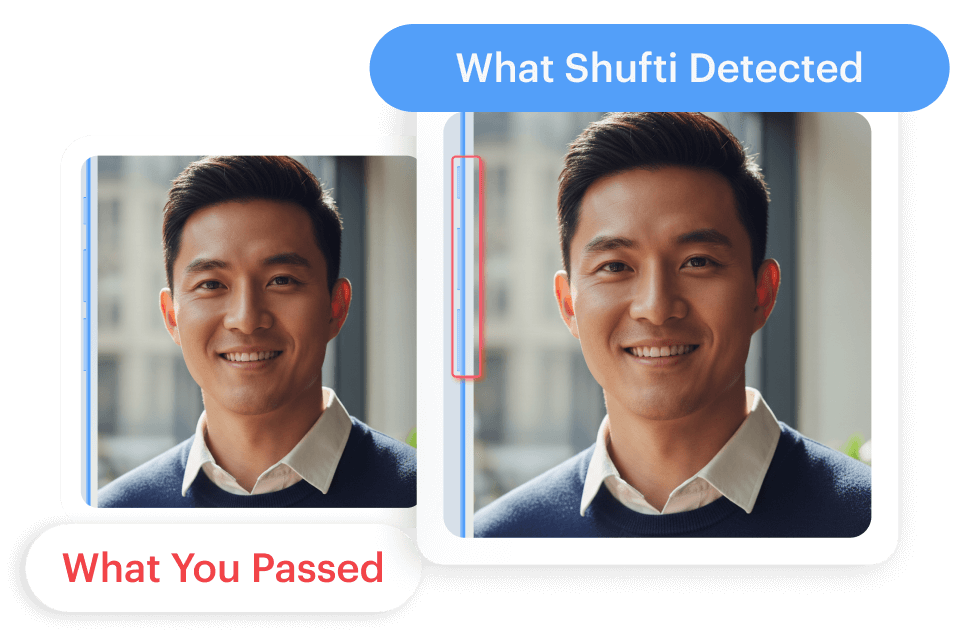

- AI-generated selfies designed to pass facial recognition

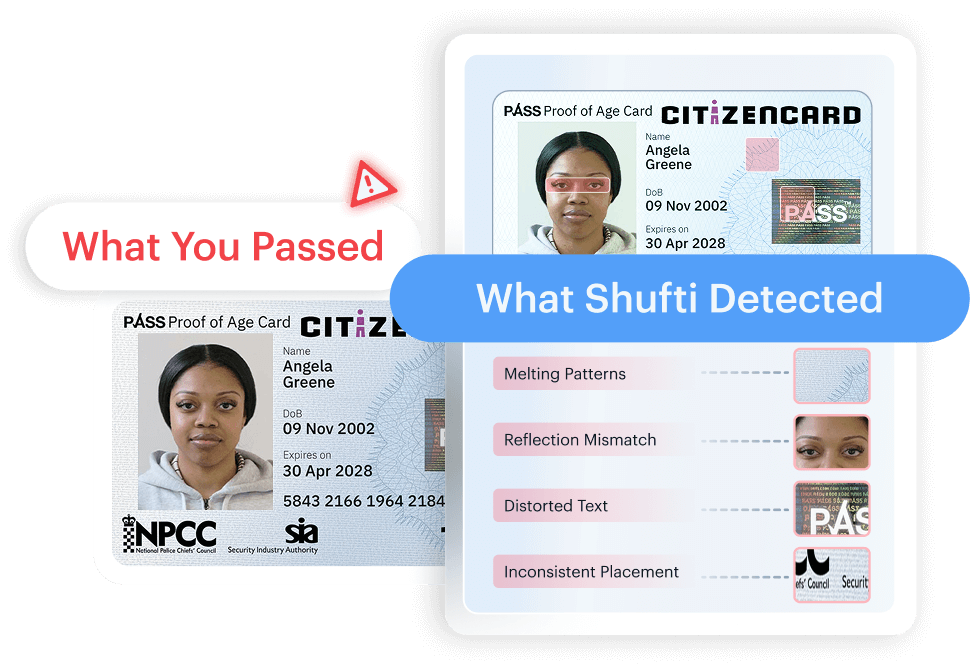

The result is identities that appear valid across multiple layers of verification. They include impersonations of real people as well as fully fictitious personas that can pass onboarding checks.

How Deepfake Attacks Bypass Modern KYC Controls

Deepfake-enabled fraud can target every stage of the digital identity lifecycle:

- Onboarding Stage: Onboarding is the first weak point. A genuine-looking synthetic document is paired with a manipulated selfie that matches the photo on the ID.

- Authentication Stage: Voice and video deepfakes target account recovery and step-up authentication.

- Transaction Stage: High-value transfers and beneficiary changes are approved on the basis of manipulated video confirmations or spoofed executive messages.

- Ongoing Monitoring: Conventional transaction surveillance focuses on financial anomalies rather than identity anomalies. After an account takeover, behavior drifts away from the legitimate user's pattern, and that drift goes undetected unless identity risk signals are integrated into monitoring.

The result is not a single failure point but a systemic breakdown across onboarding, authentication, and monitoring workflows.
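The behavioral drift described under ongoing monitoring can be illustrated with a minimal sketch. It assumes a single numeric behavioral feature per user (for example, average milliseconds between keystrokes) and flags an observation that sits far from that user's own history. The function names, the feature, and the threshold of 3 standard deviations are all illustrative assumptions, not part of any particular product.

```python
from statistics import mean, stdev


def drift_score(baseline: list[float], observed: float) -> float:
    """Return how many standard deviations `observed` lies from the baseline mean."""
    if len(baseline) < 2:
        return 0.0  # not enough history to judge drift
    spread = stdev(baseline)
    if spread == 0:
        # A perfectly constant history: any deviation at all is maximal drift.
        return 0.0 if observed == baseline[0] else float("inf")
    return abs(observed - mean(baseline)) / spread


def is_identity_anomaly(baseline: list[float], observed: float,
                        threshold: float = 3.0) -> bool:
    """Flag the observation when drift exceeds the (assumed) threshold."""
    return drift_score(baseline, observed) > threshold


# Hypothetical keystroke-interval history for one user, in milliseconds.
history = [180.0, 175.0, 190.0, 185.0, 178.0]
print(is_identity_anomaly(history, 182.0))  # close to the user's usual cadence
print(is_identity_anomaly(history, 95.0))   # far from the user's usual cadence
```

A real deployment would likely track many features and use a more robust model; the point here is only that identity signals, not just transaction amounts, feed the anomaly check.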

Why Many Solutions Struggle to Detect Deepfakes with High Accuracy

Deepfake social engineering defeats both automated systems and human judgment. Traditional one-time verification and basic liveness detection cannot keep pace with more advanced AI-generated identities, which leaves operational teams exposed. When approvals are made under time pressure, those teams are susceptible to realistic voice impersonations.

Distinguishing genuine from AI-generated communications in real time remains technically challenging. Institutions also have to balance fraud prevention against customer experience, which makes aggressive controls harder to deploy.

These vulnerabilities translate directly into financial loss, enforcement exposure, and erosion of customer trust. Enforcement actions in recent years have shown that failures in identity controls frequently lead to regulatory fines and reputational damage.

From Deepfake Detection to Continuous Identity Assurance

Deepfake detection is now an essential part of fraud prevention. Detection alone, however, is not enough to resolve the issue.

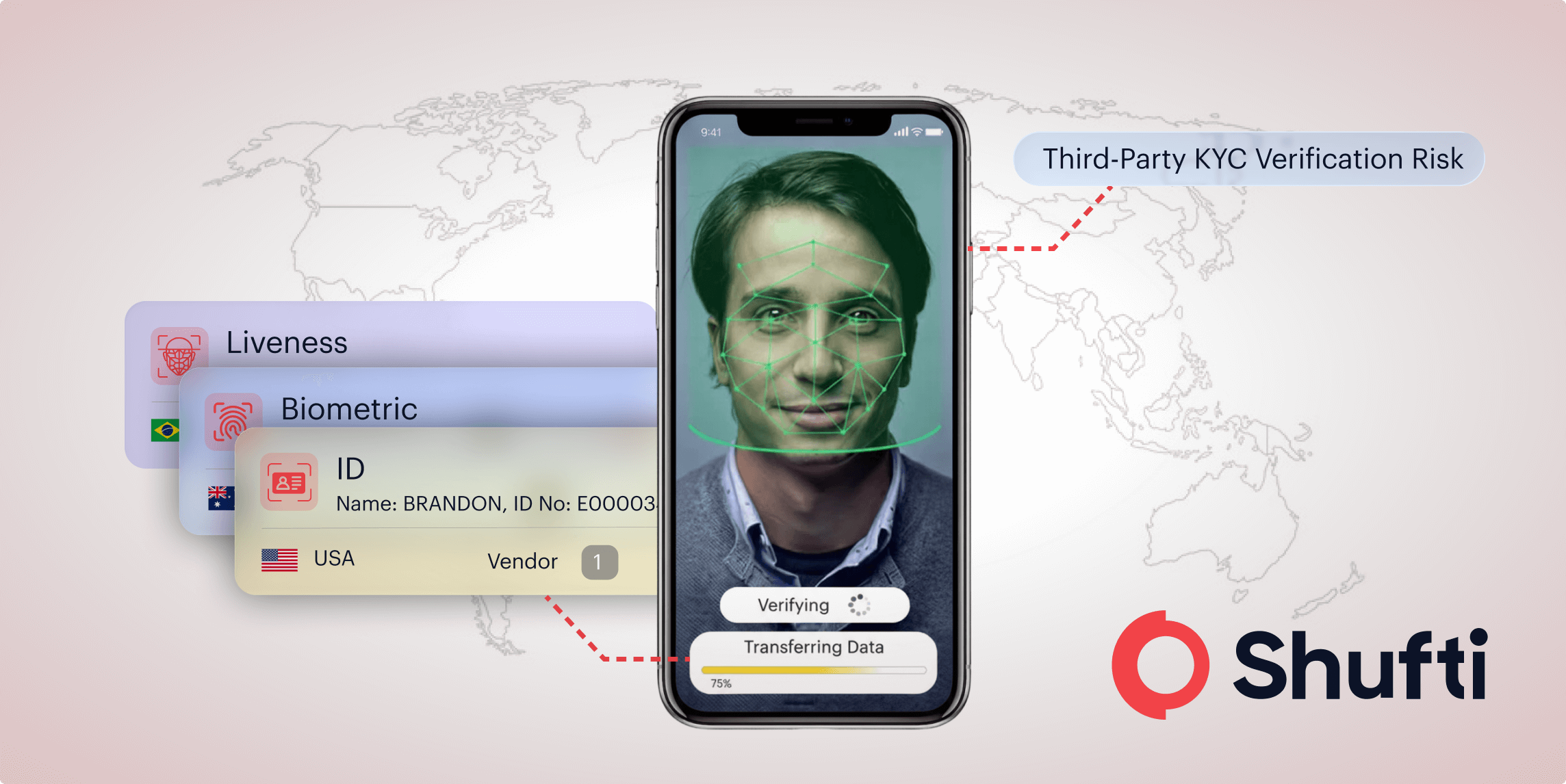

Modern IDV strategies increasingly take an AI-versus-AI approach, relying on machine learning models that identify the artifacts and patterns of evolving deepfakes. The verification layers include:

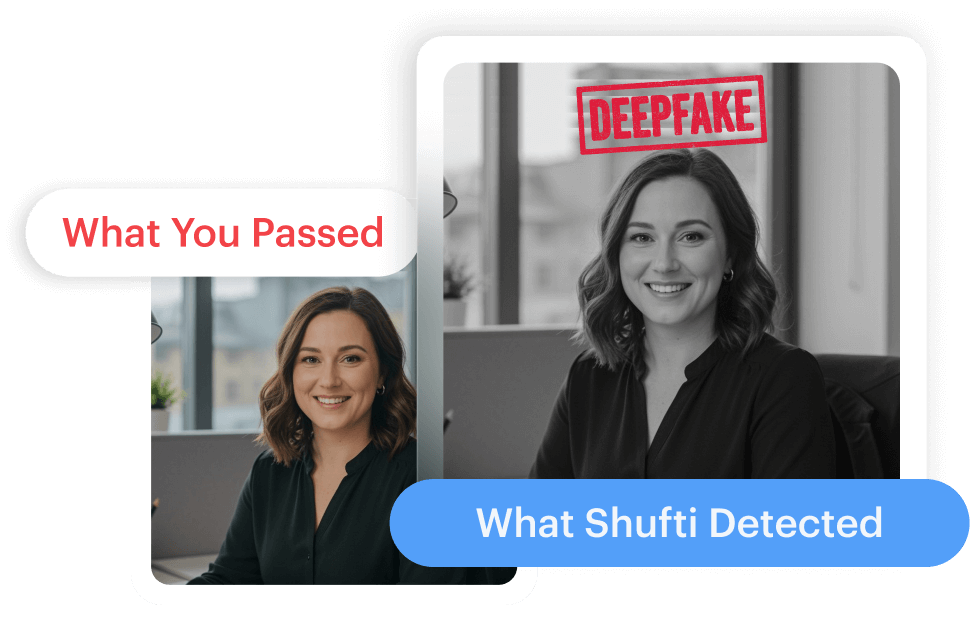

- AI-Powered Behavioral & Biometric Analysis: Detects micro-movements, facial depth signals, and subtle voice inconsistencies that indicate synthetic manipulation.

- Document Authentication: Validates format authenticity and data integrity.

- Device Intelligence: Identifies unusual devices or environmental signals.

- Behavioral Analytics: Tracks trends in transactions and user behavior.

- Out-of-Band Verification (OOBV): Confirms high-risk actions through independent communication channels, reducing reliance on a single potentially compromised interaction.
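As a rough illustration of the out-of-band layer, the sketch below issues a short-lived, single-use confirmation code for a high-risk action. It assumes the code is delivered through a channel independent of the one that requested the action (delivery itself is out of scope here). The `OutOfBandChallenge` class, the six-digit format, and the 120-second lifetime are hypothetical choices made for the example.

```python
import hmac
import secrets
import time


class OutOfBandChallenge:
    """A single-use code to be delivered over an independent channel (hypothetical)."""

    def __init__(self, ttl_seconds: float = 120.0) -> None:
        # Cryptographically strong six-digit code, zero-padded.
        self.code = f"{secrets.randbelow(10**6):06d}"
        self.expires_at = time.monotonic() + ttl_seconds
        self.used = False

    def verify(self, submitted: str) -> bool:
        """Accept the code once, and only before it expires."""
        if self.used or time.monotonic() > self.expires_at:
            return False
        # Constant-time comparison avoids leaking how many digits matched.
        matches = hmac.compare_digest(self.code.encode(), submitted.encode())
        if matches:
            self.used = True
        return matches


challenge = OutOfBandChallenge()
# In practice, challenge.code would be sent via a separate, pre-registered channel.
print(challenge.verify(challenge.code))  # first correct submission is accepted
print(challenge.verify(challenge.code))  # replaying the same code is rejected
```

A production control would also cap failed attempts and bind the code to the specific action being confirmed; both are omitted to keep the sketch short.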

By combining continuous monitoring with real-time risk scoring, institutions are better placed to stop advanced social engineering attacks, such as voice and video deepfakes, as they happen. Adaptive authentication adjusts verification requirements dynamically based on detected anomalies, so that changing threats are addressed throughout the identity lifecycle.
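One way to picture real-time risk scoring feeding adaptive authentication is the sketch below. It assumes each verification layer reports a risk signal between 0 (no concern) and 1 (high concern), combines them with fixed weights, and maps the result to a required step. The weights, thresholds, and step names are invented for illustration and would need tuning against real data.

```python
# Hypothetical weights per verification layer; they sum to 1.0.
WEIGHTS = {"biometric": 0.35, "document": 0.25, "device": 0.20, "behavior": 0.20}


def risk_score(signals: dict[str, float]) -> float:
    """Weighted sum of per-layer risk signals, each clamped to [0, 1]."""
    total = 0.0
    for name, weight in WEIGHTS.items():
        # Assumption: a missing signal is treated conservatively as maximum risk.
        value = signals.get(name, 1.0)
        total += weight * min(max(value, 0.0), 1.0)
    return total


def required_step(score: float, high_value_action: bool) -> str:
    """Map a risk score to the verification step demanded of the user."""
    if score >= 0.7:
        return "block_and_review"
    if score >= 0.4 or high_value_action:
        return "out_of_band_confirmation"
    if score >= 0.2:
        return "liveness_recheck"
    return "allow"


# Example session: weak biometric match on an unfamiliar device.
session = {"biometric": 0.8, "document": 0.1, "device": 0.6, "behavior": 0.3}
score = risk_score(session)
print(round(score, 3), required_step(score, high_value_action=False))
```

The design choice worth noting is that the required step escalates with risk rather than being fixed at onboarding, which is what distinguishes adaptive authentication from a one-time check.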

The Economics of Deepfake-as-a-Service

The rise of subscription-based deepfake services has lowered the barrier to entry for fraudsters.

As automation streamlines the process, the cost of each attack falls while attack frequency rises, making deepfake incidents more prevalent and more scalable.

As a result, financial institutions are now up against agile adversaries capable of rapid innovation. To keep up, defensive systems need to evolve at a similar pace.

Governance, Audit Readiness, and AI Risk Management

As AI-driven fraud grows, regulators are paying closer attention to model governance and accountability. Therefore, identity verification providers must demonstrate:

- Transparent decision logic

- Bias monitoring and fairness controls

- Audit trails for identity decisions

- Documented model validation processes

An institution that relies on opaque detection tools may face regulatory scrutiny if it cannot explain how identity decisions were reached. Deepfake defense should therefore align with broader AI governance expectations.
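An audit trail for identity decisions can be made tamper-evident by chaining each record to the hash of the previous one. The sketch below shows one common way to do this with SHA-256. The record fields (`subject_id`, `decision`, `model_version`, `reasons`) and the function names are assumptions chosen for illustration, not a prescribed schema.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(entry: dict) -> str:
    """SHA-256 over every field except the entry's own hash."""
    body = {k: v for k, v in entry.items() if k != "entry_hash"}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_decision(log: list[dict], subject_id: str, decision: str,
                    model_version: str, reasons: list[str]) -> dict:
    """Append a decision record linked to the previous record's hash."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "subject_id": subject_id,
        "decision": decision,
        "model_version": model_version,
        "reasons": reasons,  # human-readable decision logic for reviewers
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS_HASH,
    }
    entry["entry_hash"] = _digest(entry)
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Return False if any record was altered or the linkage is broken."""
    prev = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev or entry["entry_hash"] != _digest(entry):
            return False
        prev = entry["entry_hash"]
    return True


audit_log: list[dict] = []
append_decision(audit_log, "user-001", "rejected", "model-v1", ["liveness check failed"])
append_decision(audit_log, "user-002", "approved", "model-v1", ["all checks passed"])
print(verify_chain(audit_log))
```

Recording the model version and the reasons alongside each decision is what lets a reviewer later reconstruct how a given identity judgment was reached.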

The Cost of Inaction: Why Organizations Must Act Before Deepfake Attacks Escalate Further

Ignoring the threat of deepfakes in digital onboarding leads to loss of money, trust, and reputation. Organizations need intelligent identity verification systems that are trained to detect and contain evolving threats such as deepfakes.

Deepfakes that slip through verification systems resurface later as borrowers who never repay, fraud rings that cannot be traced, and mule accounts that help criminals launder illicit funds. Most critically, customer trust deteriorates when identity systems fail to protect interactions.

How Shufti Strengthens Identity Resilience Against Deepfake Threats

Identity systems designed for static verification are struggling to detect AI-based impersonation at scale. Banking institutions and digital platforms require identity verification systems capable of detecting biometric manipulation, validating documents, and monitoring identity risk signals beyond onboarding.

Shufti enables organizations to strengthen identity assurance through AI-powered document verification, biometric authentication, and liveness detection designed to identify synthetic identities and impersonation attempts.

Organizations can request a demo to explore how Shufti supports secure onboarding and continuous identity verification.
