How to Choose Deepfake Detection Software for Your Business

- 01 How Deepfake Detection Software Works: A Look at the Technology Layers

- 02 What Features to Look for in Deepfake Detection Software?

- 03 Integrating Deepfake Detection into Your Verification Workflow

- 04 What Are the Leading Capabilities to Look for in Enterprise Deepfake Detection Tools?

- 05 Experience Deepfake Detection That Matters

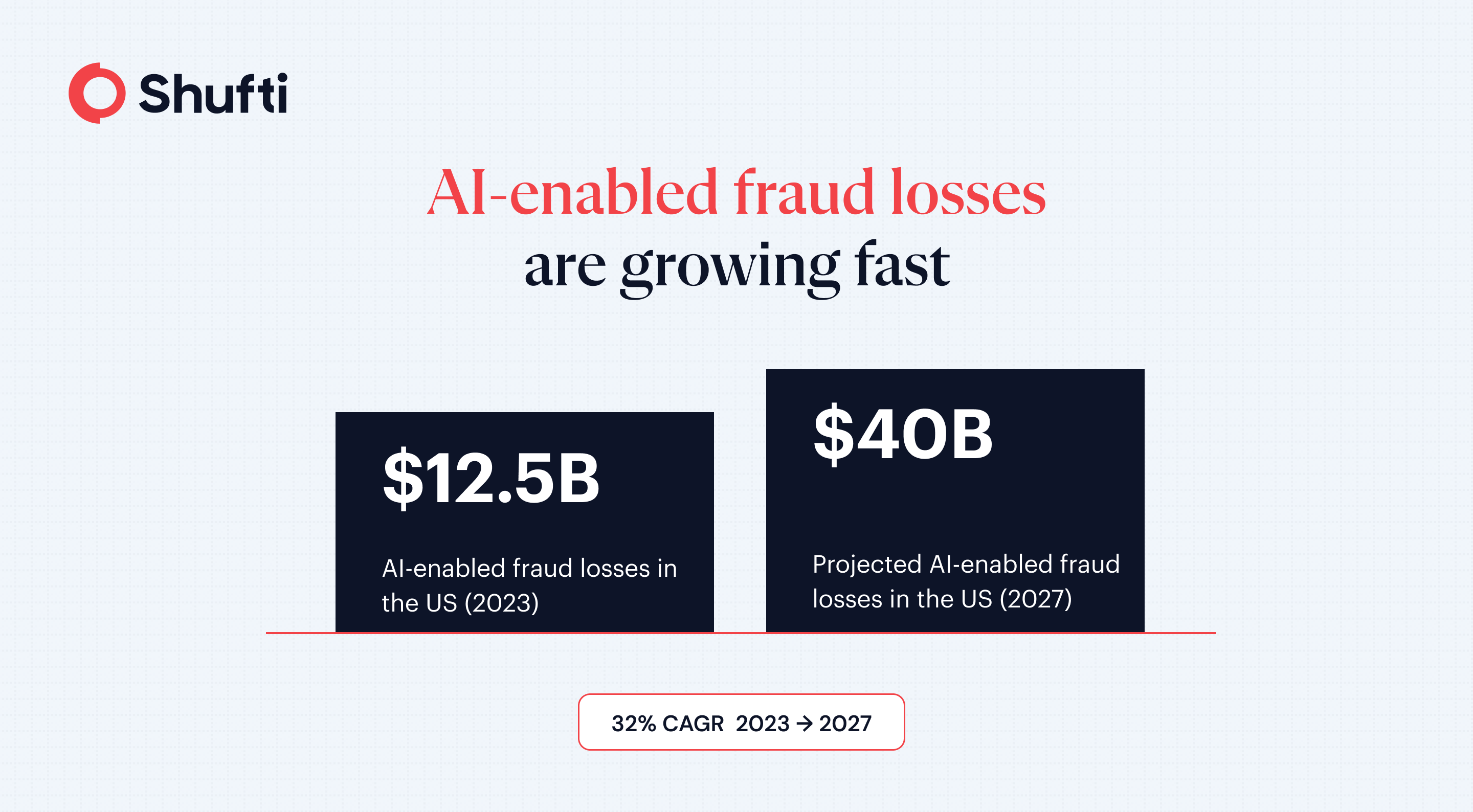

Every month, fraud teams discover accounts that were opened using AI-generated faces. These are not crude photo substitutions. They are GAN-synthesised identities that clear pixel-level liveness checks and pass basic document comparison. Deloitte’s Center for Financial Services projects gen AI-enabled fraud losses in the US could reach $40 billion by 2027, up from $12.3 billion in 2023. For businesses running remote onboarding, the question is no longer whether deepfakes will reach your pipeline. It is whether your detection stack will catch them.

Deepfake detection software analyses submitted facial images or video to identify synthetic or AI-manipulated media. This guide covers what to look for, from the detection layers that matter to the certifications and deployment requirements that separate an adequate solution from a production-ready one.

How Deepfake Detection Software Works: A Look at the Technology Layers

Detection Technology Layers

The weakest approaches rely on pixel-level image analysis alone. GAN-synthesised faces are trained to defeat that class of detector, so production-grade systems operate across multiple independent layers.

Frequency-domain analysis examines the underlying signal structure of an image or video frame. AI-generated faces leave statistical traces in the frequency domain that genuine photographs do not carry. This layer catches attack types that look flawless to the human eye. Paired with it is 3D depth analysis, which checks whether the submitted face has the spatial properties of a real human head. A printed photo, replay video, or injected stream fails this test because it lacks the depth signature of a live person. Artefact and texture analysis adds a third layer, identifying blending irregularities, edge inconsistencies, and boundary anomalies that synthesis introduces. Together, these three approaches create overlapping coverage, each targeting a different class of attack.

How Does Deepfake Detection Software Detect GAN-Generated Faces?

GAN-generated faces carry statistical regularities in the frequency domain that real photographs do not. Detection models trained on GAN output learn to identify these signal-level artefacts even when the synthetic face is visually convincing. This is why frequency-domain analysis is particularly effective against GAN synthesis. Regular model retraining against new GAN architectures is what keeps detection reliable as generation methods evolve.
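To make the idea of a frequency-domain signal concrete, here is a minimal sketch, assuming a small grayscale image stored as a list of rows. It measures what share of an image's spectral energy sits above a chosen spatial frequency. The function names and the `cutoff` value are illustrative assumptions, not any vendor's method; production detectors typically feed spectral features like this into trained models rather than applying a fixed rule.

```python
import cmath
import math


def dft1(signal):
    """Naive 1-D discrete Fourier transform (adequate for tiny inputs)."""
    n = len(signal)
    return [
        sum(signal[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def dft2(image):
    """2-D DFT of a rectangular grayscale image given as a list of rows."""
    row_pass = [dft1(row) for row in image]
    col_pass = [dft1(list(col)) for col in zip(*row_pass)]
    return [list(row) for row in zip(*col_pass)]  # back to [ky][kx] order


def high_frequency_ratio(image, cutoff=0.25):
    """Share of non-DC spectral energy at or above `cutoff` cycles per pixel.

    `cutoff` is an arbitrary illustrative value, not a recommended setting.
    """
    height, width = len(image), len(image[0])
    spectrum = dft2(image)
    high = total = 0.0
    for ky in range(height):
        for kx in range(width):
            if ky == 0 and kx == 0:
                continue  # skip the DC term (average brightness)
            fy = min(ky, height - ky) / height
            fx = min(kx, width - kx) / width
            energy = abs(spectrum[ky][kx]) ** 2
            total += energy
            if math.hypot(fx, fy) >= cutoff:
                high += energy
    # Treat a numerically flat spectrum as having no high-frequency content.
    return high / total if total > 1e-12 else 0.0


# Two synthetic test patterns: a slow horizontal wave and a pixel checkerboard.
size = 8
smooth = [[math.cos(2 * math.pi * x / size) for x in range(size)] for _ in range(size)]
checkerboard = [[float((x + y) % 2) for x in range(size)] for y in range(size)]

print(high_frequency_ratio(smooth))        # close to 0: energy sits at low frequencies
print(high_frequency_ratio(checkerboard))  # close to 1: energy sits at the highest frequency
```

The two patterns are deliberately extreme. Real photographs and synthesised faces differ far more subtly, which is why a learned model, retrained as generators change, does the actual classification.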

What Features to Look for in Deepfake Detection Software?

Attack Vector Coverage

Ask directly whether the platform detects both presentation attacks, where a spoof such as a printed photo, screen replay, or mask is shown to the camera, and injection attacks, where synthetic media is fed into the capture pipeline without passing through the camera lens. Request a written breakdown of which attack categories fall within the detection scope, and ask specifically how the system handles virtual camera input. A platform that monitors only the camera-facing signal path will miss injection attacks entirely. Most vendors in this category market their presentation-attack benchmarks because those certifications are more standardised. Injection-attack coverage is more recent and less uniformly tested, which is precisely where fraud has been migrating.

Deployment Options and Data Residency

Many regulated businesses cannot send biometric data to a third-party cloud. Vendors offering only cloud-based deployment are disqualified for organisations in banking, government, defence, and healthcare where data residency requirements apply. Verify that the vendor supports on-premises, hybrid, or edge deployment, and confirm that the on-premises version includes the full detection capability rather than a reduced feature set.

Independent Certifications and Benchmarks

Vendor-reported accuracy figures are not externally audited. The benchmark that carries real weight in regulated sectors is iBeta Level 1 and Level 2 testing, which assesses presentation attack detection under ISO/IEC 30107-3 against a defined and repeatable set of attack scenarios. Government validation programmes add a second independent verification layer. Ask for the specific scope of any certification, when it was last renewed, and whether demographic diversity was part of the test set. When a regulator requests evidence of anti-spoofing performance, an externally audited benchmark carries more weight than a vendor claim.

Integration into Existing Verification Stacks

Standalone deepfake detection that sits outside the broader verification workflow adds latency and creates a data handoff between two systems your team manages separately. Evaluate whether the solution ships via a unified API or SDK that connects with your document verification, AML screening, or onboarding workflow rather than requiring a separate integration contract for each component.

Hybrid AI and Human Review

Automated models return ambiguous results on edge cases. Ask what the system does when confidence falls below the decision threshold. A platform that routes low-confidence cases through the same model for a second pass will usually produce the same result, because neither the input nor the model has changed. Ask how escalations are handled, how long resolution typically takes, and whether specialist review is part of the standard workflow or an additional service tier.
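The escalation logic described above can be sketched as a three-way triage. This is a minimal illustration, assuming the detector returns a score between 0 and 1 where higher means more likely synthetic. The `TriagePolicy` class, the threshold values, and the outcome labels are all hypothetical and would need tuning against your own data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TriagePolicy:
    # Hypothetical thresholds on a 0..1 "likely synthetic" score.
    pass_below: float = 0.20
    reject_at_or_above: float = 0.80


def triage(synthetic_score: float, policy: TriagePolicy = TriagePolicy()) -> str:
    """Route a detection score to 'pass', 'reject', or 'human_review'."""
    if not 0.0 <= synthetic_score <= 1.0:
        raise ValueError("synthetic_score must be between 0 and 1")
    if synthetic_score < policy.pass_below:
        return "pass"
    if synthetic_score >= policy.reject_at_or_above:
        return "reject"
    # The ambiguous middle band goes to a person, not back through the same model.
    return "human_review"


for score in (0.05, 0.50, 0.93):
    print(score, triage(score))
```

The width of the middle band is the practical trade-off: a wider band sends more cases to reviewers and raises cost and resolution time, while a narrower band leaves more borderline decisions to the model.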

Integrating Deepfake Detection into Your Verification Workflow

Check whether the vendor provides native SDKs for iOS, Android, and web. SDKs handle auto-capture quality guidance, which reduces failure rates for users on mid-range devices or in variable lighting. The API should support both onsite (live camera) and offsite (image upload) flows, since many businesses need both in the same product.

For real-time onboarding, ask for production latency figures rather than benchmark numbers from optimal conditions. A face verification round-trip that takes more than three seconds creates measurable friction and increases drop-off.
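When a vendor shares production latency samples, a tail percentile tells you more than an average. The sketch below computes a nearest-rank percentile and checks it against the three-second figure mentioned above. Using the 95th percentile is an assumption made for illustration, and the sample values are placeholders, not measurements.

```python
def percentile(samples, pct):
    """Nearest-rank percentile; `pct` is an integer from 1 to 100."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 1 <= pct <= 100:
        raise ValueError("pct must be between 1 and 100")
    ordered = sorted(samples)
    rank = (pct * len(ordered) + 99) // 100  # ceil(pct * n / 100) in integer math
    return ordered[rank - 1]


def within_budget(samples, pct=95, budget_seconds=3.0):
    """True if the chosen percentile of round-trip times fits the budget."""
    return percentile(samples, pct) <= budget_seconds


# Placeholder round-trip times in seconds (illustrative only).
round_trips = [0.8, 1.1, 1.2, 1.4, 1.5, 1.9, 2.2, 2.8, 3.4, 4.1]

print(percentile(round_trips, 50))  # median round-trip
print(percentile(round_trips, 95))  # tail latency that slower users experience
print(within_budget(round_trips))
```

In this placeholder data the median looks comfortable while the tail exceeds the budget, which is the pattern that benchmark numbers from optimal conditions tend to hide.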

Compressed video inputs are a real-world challenge that separates lab performance from production performance. Some frequency-domain detection methods degrade when codec compression removes the artefacts the model was trained to detect. Ask vendors specifically how their system performs on compressed inputs and whether their training data includes real-world capture conditions.
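If a vendor agrees to a trial on your own labelled samples, one simple way to surface compression sensitivity is to split the detection rate by capture condition. The following is a minimal sketch; the condition labels and the trial rows are hypothetical placeholders, not real results.

```python
from collections import defaultdict


def detection_rate_by_condition(results):
    """Detection rate per condition.

    `results` is an iterable of (condition, was_detected) pairs, where every
    sample is known to be synthetic.
    """
    caught = defaultdict(int)
    seen = defaultdict(int)
    for condition, was_detected in results:
        seen[condition] += 1
        caught[condition] += int(was_detected)
    return {condition: caught[condition] / seen[condition] for condition in seen}


# Placeholder trial data (illustrative only).
trial = [
    ("uncompressed", True), ("uncompressed", True),
    ("uncompressed", True), ("uncompressed", True),
    ("heavily_compressed", True), ("heavily_compressed", False),
    ("heavily_compressed", False), ("heavily_compressed", True),
]

for condition, rate in detection_rate_by_condition(trial).items():
    print(f"{condition}: {rate:.0%}")
```

A large gap between conditions suggests the model depends on artefacts that compression removes, which is worth raising with the vendor before signing.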

Injection attacks, where a fraudster routes a pre-recorded synthetic stream directly into the API without using the device camera, are a separate class of threat from presentation attacks. Confirm that the vendor’s anti-spoofing coverage extends to injection vectors. A full overview of deepfake attack types and how detection addresses each is available in the guide on what deepfakes are and how to detect them.

What Are the Leading Capabilities to Look for in Enterprise Deepfake Detection Tools?

Enterprise buyers should look beyond headline accuracy numbers. The capabilities that separate production-grade solutions are: multi-vector detection covering both presentation and injection attacks; iBeta Level 1 and 2 certification as a minimum independent benchmark; on-premises or hybrid deployment for data residency compliance; a unified API or SDK connecting with existing verification and AML workflows; and a documented escalation path with human specialist review. Vendors that can demonstrate all five in a live environment are the ones worth shortlisting.

Experience Deepfake Detection That Matters

Deepfakes are now a production-grade threat reaching live onboarding pipelines, and a detection layer built for an earlier generation of attacks will not hold against GAN-synthesised faces and injection fraud. Shufti’s deepfake detection covers 56+ anti-spoofing attack vectors, holds iBeta Level 1 and 2 certification and DHS RIVR 2025 validation, and supports cloud, on-premises, and hybrid deployment through a single SDK. Request a demo to run a deepfake sample through the pipeline and review the detection output before you commit.

Frequently Asked Questions

What is deepfake detection software?

Deepfake detection software is a tool that analyses images, videos, or live streams to identify whether the content has been synthetically generated or manipulated using AI.

How is deepfake detection software different from liveness detection?

Liveness detection confirms a real person is present. Deepfake detection identifies AI-generated or manipulated media. Production systems combine both, since a deepfake can pass a basic presence check if only liveness is in scope.

Who needs deepfake detection software?

Any business that onboards users remotely using facial verification needs it. That includes financial services, fintech, crypto exchanges, gaming, and healthcare operators, where FATF guidance and the EU AI Act create formal detection expectations.

Can deepfake detection software be bypassed?

Yes, in principle. No detection system offers unconditional guarantees, but multi-layer systems with regular model retraining and human review on flagged cases are substantially harder to bypass than single-method approaches.

Does deepfake detection software work on compressed videos?

Performance varies by vendor. Some frequency-domain methods degrade under codec compression. Ask vendors for performance data on compressed inputs and confirm their model training covers real-world capture conditions.

Which deepfake detection software works best for banks during customer onboarding?

Banks need software that covers both presentation and injection attacks, supports on-premises deployment for data residency, and carries independent certifications. Shufti addresses all three requirements with 56+ anti-spoofing attack vectors, iBeta Level 1 and 2 certification, DHS RIVR 2025 validation, and a unified SDK that integrates directly with document verification and AML workflows.