How Deepfake Detection Secures Financial Services

In November 2024, the U.S. Treasury’s Financial Crimes Enforcement Network issued its first alert specifically about deepfake-driven fraud schemes targeting financial institutions. The alert told banks, credit unions, and money services businesses to start filing suspicious activity reports with a new key term, FIN-2024-DEEPFAKEFRAUD. FinCEN had been watching this category grow since 2023, and the filings showed a clear pattern. Fraudsters were generating fake IDs, manipulated selfies, and synthetic video that walked past identity checks built for an earlier era of fraud.

A deepfake, in this context, is a piece of AI-generated or AI-manipulated media used to impersonate a real person or fabricate one. In financial services the payload is usually a fake government ID image, a spoofed selfie, or a video designed to defeat a liveness check.

That matters because the identity layer controls how the money moves. If a fraudster can convince an identity verification (IDV) stack that they are someone else, the downstream controls (transaction monitoring, behavioural analytics, and sanctions screening) are all working from a false premise. This guide walks through how modern deepfake detection works in financial services, what regulators now expect, and what to put in front of every new-account flow.

Why Legacy Identity Checks Struggle with Deepfakes

Traditional onboarding stacks were built to catch humans attempting fraud with physical documents and their own faces. The assumption was that the person on the camera was real, even if their claim was false. Generative AI has broken that assumption, and injection attacks have made the camera itself an unreliable input. Detection has to start treating every new face and every new document as potentially synthetic until the stack proves otherwise.

RGB-only Liveness is Not Enough

Older liveness checks work on pixel-level signals from a standard RGB camera. A user holds up their face, moves slightly, and the model decides whether that sequence shows a living human. These checks hold up against printed photos and static screen replays. A deepfake rendered in real time and injected into the camera feed often defeats them. The U.S. Treasury’s March 2024 report on AI-specific cybersecurity risks in the financial services sector called out this gap, warning that AI now lets bad actors impersonate employees and customers in ways that used to be far harder.

Injection attacks make this worse. A fraudster does not need to hold up a phone showing a fake. They can feed the deepfake directly into the video stream before it reaches the liveness model, and skip the camera entirely.

Document Forensics Alone Leaves a Gap

A clean OCR read, a matching MRZ, and an intact hologram image no longer prove a document is genuine. Generative models produce template-perfect IDs, and image editors can reproduce the security features that used to give a forgery away. Document checks and face checks need to talk to each other, with the biometric confirming that the live person actually matches the photo on the document. Modern face verification workflows run that cross-check automatically, before a decision is made.
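A minimal sketch of that face-to-document cross-check, assuming an upstream face model has already turned the live selfie and the ID portrait into embedding vectors. The function names, the toy 4-dimensional vectors, and the 0.8 threshold are illustrative assumptions, not values from any particular product.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


# Hypothetical threshold; a real one is calibrated on labelled match data.
MATCH_THRESHOLD = 0.8


def face_matches_document(selfie_embedding, document_photo_embedding):
    """Return (is_match, similarity) for the live face against the ID portrait."""
    similarity = cosine_similarity(selfie_embedding, document_photo_embedding)
    return similarity >= MATCH_THRESHOLD, similarity


# Toy vectors standing in for real face embeddings (assumed inputs).
same_person = face_matches_document([0.9, 0.1, 0.3, 0.2], [0.8, 0.2, 0.3, 0.1])
different_person = face_matches_document([0.9, 0.1, 0.3, 0.2], [0.1, 0.9, 0.2, 0.7])
```

Returning the similarity alongside the verdict keeps the raw score available to later stages instead of collapsing it to pass or fail.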

What Regulators are Telling You to Do About It

The policy signal is getting louder across jurisdictions, and financial services teams can expect examiners to ask what they have done in response.

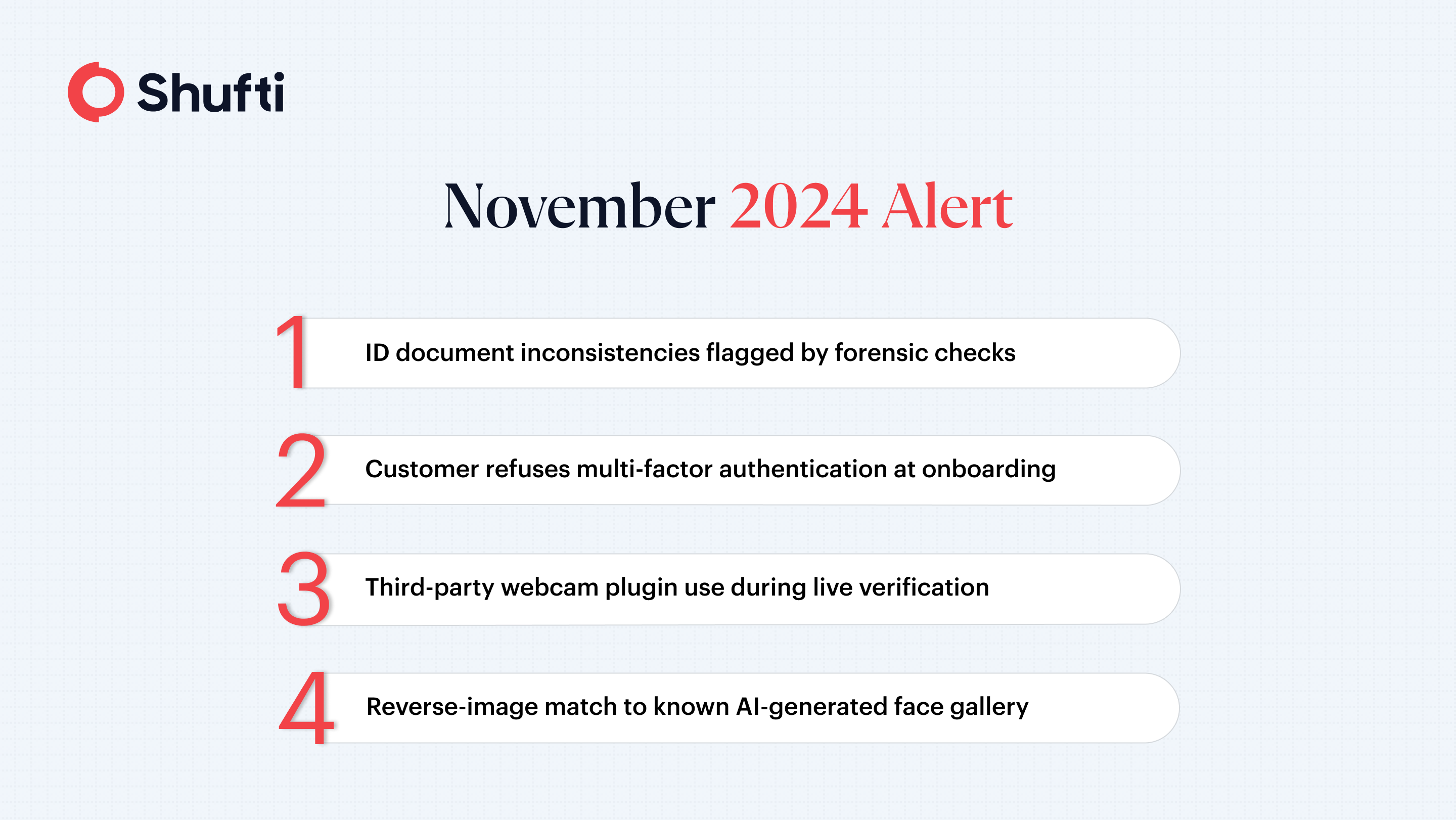

FinCEN’s Red Flags for Suspected Deepfake SARs

The FinCEN alert lays out nine red flag indicators that institutions should surface through their SAR workflows, including inconsistencies within a submitted ID, customers refusing multi-factor authentication, the use of third-party webcam plugins during a live verification, and reverse image searches that match a customer photo to a gallery of AI-generated faces. Filing the SAR is a compliance requirement in its own right, but it depends on the onboarding stack generating these signals in the first place. A bank that identifies a deepfake attempt but cannot show examiners the audit trail of the detection is in a weaker position than one that flagged, recorded, and reported the event in sequence.

European Supervisors are Converging on the Same Message

The Bank of England’s April 2025 Financial Stability in Focus paper on AI warned that AI models producing deepfakes and highly personalised text could make it easier for fraudsters to manipulate both employees and retail customers. Across the Channel, the European Central Bank has flagged similar concerns and has named AI-enabled deception and fraud as a euro-area-level risk alongside broader operational concerns. Supervised entities in the EU should expect deepfake-readiness to appear in operational resilience dialogues.

What a Modern Deepfake Detection Stack Looks Like

The industry association FS-ISAC published a deepfake taxonomy for the financial sector in October 2024, mapping nine distinct threat types across customer fraud, executive impersonation, and social engineering. That taxonomy organises attacks by intent and by the technical vector they exploit, which means each category maps to a named control on the defender side. Controls that hold up against all nine tend to share a common shape, built around cross-layer evidence rather than any single detection model.

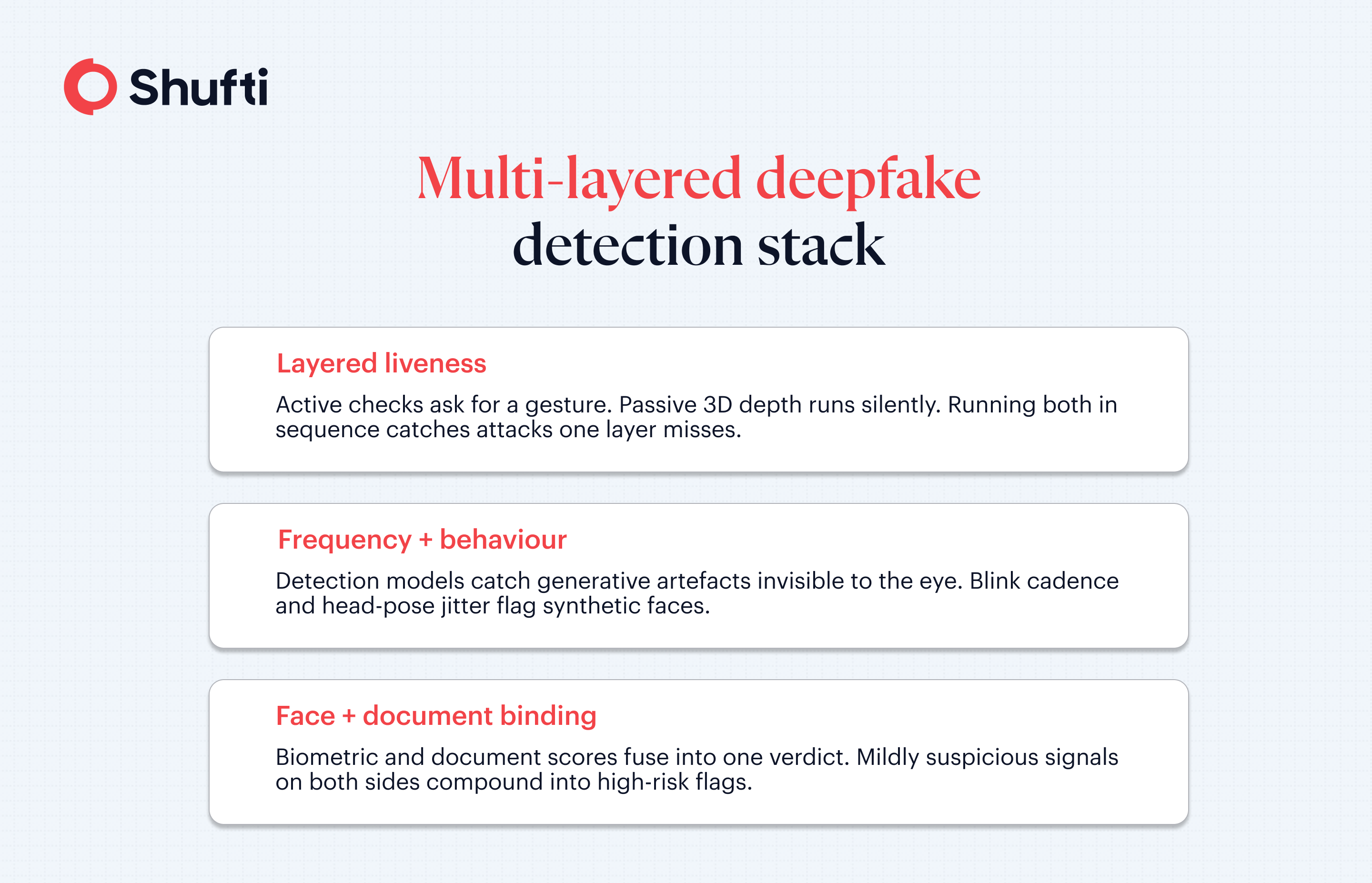

Layered Liveness, Active and Passive

Active liveness asks the user to perform a small gesture, a blink, a head turn, or a slight expression change, and verifies the motion in real time. The passive variant does the same job silently in the background, using 3D depth analysis, texture cues, and ambient lighting to confirm a real person is present. A stack that runs both in the right sequence catches attacks that defeat one layer but not the other.
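One way to picture the sequencing is the sketch below. It assumes the passive check runs first and the active challenge is only issued when the passive layer passes. That ordering, the 0.7 thresholds, and every name here are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class LivenessResult:
    layer: str
    score: float  # 0.0 (likely spoof) to 1.0 (likely live), as reported by the layer
    passed: bool


# Hypothetical thresholds; real values come from calibration on attack data.
PASSIVE_PASS = 0.7
ACTIVE_PASS = 0.7


def layered_liveness(passive_score, run_active_challenge):
    """Run the passive layer first, then the active one; both must pass."""
    results = [LivenessResult("passive", passive_score, passive_score >= PASSIVE_PASS)]
    if not results[0].passed:
        return False, results  # fail fast without challenging the user
    active_score = run_active_challenge()
    results.append(LivenessResult("active", active_score, active_score >= ACTIVE_PASS))
    return results[-1].passed, results


# An attack that looks fine to the passive layer but fails the gesture challenge.
ok, evidence = layered_liveness(0.92, lambda: 0.35)
```

Both per-layer results are returned with the verdict so that a reviewer or a downstream model can see which layer caught the attempt.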

Frequency-Domain and Behavioural Analysis

Generative models tend to leave artefacts that are invisible to the eye but visible in the frequency domain. A detection model trained on these artefacts can catch synthetic media even when the pixel output looks clean. Behavioural signals add a second overlay that catches micro-movements and timing patterns AI-generated faces still struggle to reproduce. Blink cadence, head-pose jitter, and subtle asymmetries between the left and right sides of the face tend to give current-generation synthetic content away.

Cross-Evidence Fusion Between Face and Document

Face detection alone flags the deepfake. Document forensics alone flags the forged ID. Neither catches the synthetic-identity combination, where a real document has been paired with a fabricated face, unless the two signals feed into a single decision. The strongest pipelines fuse both streams, so a mildly suspicious biometric paired with a mildly suspicious document still flags as high-risk. Cross-evidence fusion needs access to the raw scores from each sub-model, not just the pass or fail verdicts, so a late-stage fusion model can reason about agreement across layers.
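The effect of fusing raw scores can be shown with a simple rule. The sketch below uses a noisy-OR combination in place of a learned late-fusion model, purely for illustration. The 0.6 thresholds and all names are hypothetical, and both inputs are assumed to be risk scores in the range 0 to 1.

```python
def fuse_scores(face_risk, document_risk):
    """Noisy-OR fusion: combined risk rises when both layers are mildly suspicious."""
    for name, value in (("face_risk", face_risk), ("document_risk", document_risk)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1]")
    return 1.0 - (1.0 - face_risk) * (1.0 - document_risk)


# Hypothetical thresholds for illustration only.
SINGLE_LAYER_THRESHOLD = 0.6
FUSED_THRESHOLD = 0.6


def decide(face_risk, document_risk):
    """Compare a verdict-only pipeline with one that fuses the raw scores."""
    verdict_only_flag = (
        face_risk >= SINGLE_LAYER_THRESHOLD or document_risk >= SINGLE_LAYER_THRESHOLD
    )
    fused_risk = fuse_scores(face_risk, document_risk)
    return {
        "verdict_only_flag": verdict_only_flag,
        "fused_risk": fused_risk,
        "fused_flag": fused_risk >= FUSED_THRESHOLD,
    }


# Each layer alone is below its threshold, but together they cross the fused one.
borderline_pair = decide(0.45, 0.45)
clean_pair = decide(0.10, 0.10)
```

With two scores of 0.45, neither layer fails on its own, yet the fused risk lands near 0.70 and the application is flagged. A pipeline that only passed along pass or fail verdicts would have lost that signal.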

Hybrid AI and Human Review on Edge Cases

No detection stack hits 100%, and the marginal cases are exactly where human forensic analysts pay for themselves. A good pipeline routes borderline decisions to human reviewers with the underlying evidence already surfaced: the frequency-domain score, the biometric match result, and the document integrity findings. That keeps false positives low without letting edge-case fraud through. The same pipeline is also where analyst feedback loops pick up new attack patterns, feeding labelled examples back into the detection models so tomorrow’s stack catches what today’s lets through.
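A rough sketch of that routing logic follows, assuming a single fused risk score between 0 and 1 is available. The band edges of 0.3 and 0.8, the evidence fields, and the in-memory training queue are hypothetical stand-ins for whatever a real case-management system provides.

```python
from dataclasses import dataclass, field


@dataclass
class Evidence:
    frequency_score: float
    biometric_match: float
    document_findings: list = field(default_factory=list)


# Hypothetical band edges; anything between them goes to a human.
AUTO_APPROVE_BELOW = 0.3
AUTO_REJECT_ABOVE = 0.8


def route(fused_risk, evidence):
    """Auto-decide clear cases; send borderline ones to review with evidence attached."""
    if fused_risk < AUTO_APPROVE_BELOW:
        return {"decision": "approve", "evidence": None}
    if fused_risk > AUTO_REJECT_ABOVE:
        return {"decision": "reject", "evidence": evidence}
    return {"decision": "human_review", "evidence": evidence}


def record_analyst_label(training_queue, evidence, is_fraud):
    """Feed the analyst's verdict back as a labelled example for retraining."""
    training_queue.append((evidence, is_fraud))


case = Evidence(0.55, 0.71, ["font mismatch in date of birth field"])
routed = route(0.62, case)

training_queue = []
record_analyst_label(training_queue, case, is_fraud=True)
```

Attaching the evidence at routing time means the analyst does not have to re-run any check, and the labelled pair that comes back is already in the shape a retraining job would consume.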

Practical Next Steps for Financial Services Teams

Three moves typically deliver the most in the shortest window.

Start with a pressure test of your current onboarding flow using real deepfake samples. A slide deck review is not enough. Most stacks that were not designed with generative AI in mind fail the first injection test, and finding that out in a controlled environment beats finding it out from a regulator.

The next move is mapping control coverage against the FS-ISAC taxonomy. If your stack does not have a named control for each of the nine threat categories, you have a visible gap. That map becomes your next-twelve-months roadmap and a useful artefact in supervisory conversations. Industry-specific guidance for banks can help frame the control mapping against existing regulatory obligations.
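The coverage map itself can be as simple as a lookup from threat category to named controls. The sketch below uses placeholder category labels, because the actual category names should be taken from the FS-ISAC taxonomy itself. The control names are illustrative as well.

```python
# Placeholder labels; replace with the category names from the FS-ISAC taxonomy.
THREAT_CATEGORIES = [f"threat_category_{i}" for i in range(1, 10)]

# Hypothetical mapping of categories to the named controls that address them.
control_map = {
    "threat_category_1": ["passive liveness", "active liveness"],
    "threat_category_2": ["document forensics"],
    "threat_category_3": [],  # listed, but no control assigned yet
}


def coverage_gaps(categories, controls):
    """Return every category with no named control mapped to it."""
    return [category for category in categories if not controls.get(category)]


gaps = coverage_gaps(THREAT_CATEGORIES, control_map)
```

An empty list and a missing key are treated the same way, so a category that was listed but never assigned a control still shows up as a gap.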

There is also a reporting loop to tighten. FinCEN’s red flags are only useful if the onboarding system actually emits them to the compliance team. If a frozen application, a declined MFA attempt, or a reverse-image hit does not automatically become a SAR candidate, you are catching fraud and losing the reporting credit.
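A minimal sketch of that loop is shown below, assuming the onboarding system emits named events. The event names and the record shape are hypothetical. The key term is the one the FinCEN alert asks filers to use.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# SAR key term named in the FinCEN alert.
SAR_KEY_TERM = "FIN-2024-DEEPFAKEFRAUD"

# Hypothetical event names an onboarding system might emit.
RED_FLAG_EVENTS = {
    "application_frozen",
    "id_inconsistency",
    "mfa_declined",
    "webcam_plugin_detected",
    "reverse_image_ai_gallery_hit",
}


@dataclass
class SarCandidate:
    application_id: str
    red_flag: str
    key_term: str
    detected_at: str  # ISO 8601 timestamp for the audit trail


def to_sar_candidate(application_id, event_type, detected_at=None):
    """Turn a red-flag event into a SAR candidate; ignore unrelated events."""
    if event_type not in RED_FLAG_EVENTS:
        return None
    when = detected_at or datetime.now(timezone.utc)
    return SarCandidate(application_id, event_type, SAR_KEY_TERM, when.isoformat())


candidate = to_sar_candidate("app-1042", "mfa_declined")
ignored = to_sar_candidate("app-1042", "page_view")
```

Stamping the detection time on the candidate at the moment of emission gives compliance the flagged, recorded, and reported sequence in one record. Whether a candidate becomes an actual filing remains a compliance decision.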

Validate Your Defence Against Deepfake Fraud

Deepfake fraud now sits alongside sanctions screening and PEP checks as a standard onboarding concern, and teams that treat it that way are better placed when examiners start asking hard questions.

Deepfakes are landing in live onboarding flows and slipping past liveness checks designed for an earlier generation of fraud. Shufti’s deepfake detection combines multi-layered anti-spoofing, government-validated accuracy, and hybrid AI plus human review, so the stack flags synthetic identities before they open an account. Request a demo to run a deepfake sample through the pipeline and see the result.

Frequently Asked Questions

What are the warning signs of deepfake financial fraud?

FinCEN’s alert lists nine red flag indicators, including inconsistencies within a submitted ID, refusal of multi-factor authentication, third-party webcam plugin use during live verification, and reverse-image matches to AI-generated face galleries.

How do financial services detect deepfakes?

Modern stacks combine active and passive liveness, frequency-domain artefact analysis, document-to-face biometric binding, and human analyst review on borderline cases, so no single-layer bypass is enough to get through.

Can real-time monitoring stop deepfake attacks in banking?

Real-time checks at onboarding stop most attempts before an account opens, and continuous re-verification at high-risk events such as payouts and beneficiary changes catches the ones that slip past the first gate.

What security measures protect financial institutions from AI fraud?

Layered liveness, multi-factor authentication, independent document forensics, behavioural session signals, and analyst review on borderline cases are the core controls supervisors now expect to see working in combination.

Are deepfake attacks becoming the next major banking risk?

FinCEN, the U.S. Treasury, the Bank of England, and the European Central Bank have all flagged deepfake-driven fraud as a material and growing risk to the financial system.