Fighting Deepfakes with Fool-Proofed Identity Verification Systems: How Shufti Can Help

Undoubtedly, the 21st century is the most innovative period in human history. With every passing day, new inventions and technologies surface, creating more opportunities for businesses to thrive in a fast-paced environment. Deepfakes are one such innovation, and they gained popularity back in 2017 through their use in making fake videos. In simple terms, deepfakes are created using Artificial Intelligence (AI): real images are fed into a system trained in two parts, one that creates fake images and another that tries to tell the fakes from the originals, until the two are hard to distinguish.
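The two-part setup described above is commonly implemented as a generative adversarial network (GAN), although not every deepfake tool works this way. The snippet below is only a minimal sketch of the standard GAN loss functions, not a working deepfake model. The function names and the example scores are illustrative assumptions. Each score stands for the probability, between 0 and 1, that the second part (the discriminator) assigns to an image being real.

```python
import math


def _check_probability(p):
    # Scores must lie strictly between 0 and 1 so the logarithms are defined.
    if not 0.0 < p < 1.0:
        raise ValueError("score must be strictly between 0 and 1")


def discriminator_loss(score_on_real, score_on_fake):
    """Loss for the part that separates originals from fakes.

    It is low when real images score near 1 and fake images score near 0.
    """
    _check_probability(score_on_real)
    _check_probability(score_on_fake)
    return -(math.log(score_on_real) + math.log(1.0 - score_on_fake))


def generator_loss(score_on_fake):
    """Loss for the part that creates fake images (non-saturating form).

    It is low when the discriminator scores a fake image near 1,
    meaning the fake was mistaken for a real image.
    """
    _check_probability(score_on_fake)
    return -math.log(score_on_fake)


# A confident discriminator has a lower loss than a confused one.
confident = discriminator_loss(0.9, 0.1)
confused = discriminator_loss(0.6, 0.4)
print(confident < confused)
```

The two losses pull in opposite directions: whatever lowers the generator's loss on a fake image raises the discriminator's loss on that same image, which is why training the two parts against each other tends to produce increasingly convincing fakes.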

One of the most famous examples of this kind of face replacement came during the shooting of the movie “Furious 7,” when Paul Walker, one of the lead actors, died before filming was complete. The filmmakers used his brothers as stand-ins and digitally recreated his face to finish the final scenes, surprising audiences with how closely the result resembled the original character. Deepfake technology was initially used for entertainment purposes, but its scope has since broadened, and criminals are now abusing it to inflict financial damage on online businesses.

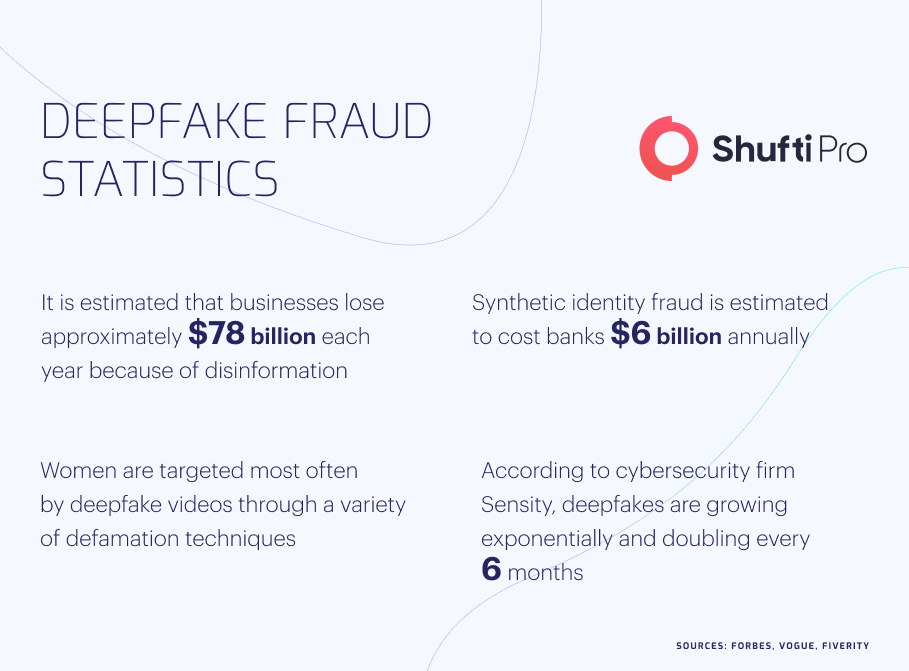

The Looming Threats of Deepfakes to the Financial Industry

All financial organizations, including banks and insurance companies, are on high alert due to the threats posed by deepfake technology. Criminals impersonate business owners and executives to commit fraud, resulting in financial losses to companies. There was a time when only an expert forger could create realistic fake media, but now almost anyone can do it using various smartphone apps. Most digital financial businesses rely on biometric authentication for their security, particularly fingerprints, facial recognition, and voice authentication. Deepfake technology can create realistic-looking identities that can bypass companies’ authentication checks, leading to several crimes, mainly identity theft, payment fraud, and account takeovers.

Criminals use stolen information to create synthetic identities that combine fake and real details, and then present themselves as the CEO or another key member of a company. Using deepfaked images, audio, and videos, they gain access to business databases and go on to commit financial crimes. These scams not only result in financial losses to businesses; in several recent instances, scammers have also tried to defame companies or their CEOs using different deepfake techniques. All financial organizations must implement stringent biometric authentication measures to identify criminals and restrict fraudulent activities.

Deepfake Technology and Its Effects on the Financial Sector

Although AI has made many operations easier, experts predict that deepfakes can pose serious threats to individuals and businesses. A variety of smartphone apps, mainly FaceApp and Wombo, have made deepfake technology accessible to every internet user. Let’s have a look at some of the major cybercrimes that are becoming more common due to the abuse of deepfakes.

Identity Theft

Identity theft is one of the cybercrimes that has raised major concerns for online businesses. In the US alone, there were 63% more instances of identity theft in 2021 than in 2019, and the Federal Trade Commission (FTC) has warned all digital service providers to ensure the security of their users.

Fraudsters use deepfake technology to pretend to be someone else and gain access to accounts, products, or services. Deepfakes are much harder to catch because criminals use the victim’s own voice or image to bypass verification measures. Cybercriminals primarily target the identities of executives in order to gain control over an entire system.

Payment Fraud

Using identities stolen through deepfakes, criminals carry out various financial scams that result in losses to businesses. Scammers request payments, particularly from banks, using fake images, audio, and videos. Because they use the identities of genuine users, it becomes very challenging for security systems to stop them, ultimately leading to the loss of customers’ funds. It is estimated that 61% of verification checks could not distinguish fake actors from real ones.

Stock Manipulation

Deepfakes also have considerable potential to target the stock market and manipulate share prices. In the past, criminals have used fake videos of Donald Trump promising to lift bans on certain items in America, which eventually caused a surge in revenue for specific companies. Scammers also damage a company’s reputation by gaining access to the social media accounts of CEOs and making false statements through them, ultimately misleading investors.

Recent Fraud Cases Through Deepfake Technology

With growing digitization in the financial sector, criminals are also adopting advanced techniques, and the number of crimes committed through deepfakes has risen. Experts have called deepfake technology a potential threat to existing financial systems and highlighted the need for robust verification measures that can screen out criminals and ensure transparency in transactions.

Fraudsters Cloned Company Director’s Voice in $35 Million Fraud

Authorities in the UAE identified a gang of at least 17 individuals involved in a payment fraud of $35 million. The criminals used AI voice cloning to pose as a director of a UAE-based company. While talking to the bank manager, they promised a large business deal for which they needed a loan and, surprisingly, used official email accounts for communication. As soon as the bank transferred the amount, the criminals disappeared without leaving any trail for law-enforcement authorities. UAE police are still investigating the matter to find the criminals and bring them to justice.

Fraud via Deepfake Audio Steals $243,000 From UK Company

Here again, the criminals used AI-generated audio to pose as the CEO of a Germany-based company. The scammers convinced the CEO of an English company to make an urgent wire transfer of $243,000 to their account and assured him of reimbursement. Because the voice cloning was so convincing, the victim could not identify the criminals and transferred the money, which they then moved to Mexico.

Regulatory Authorities Monitoring Deepfake Abuse

Most jurisdictions still lack effective laws against deepfake abuse, which allows criminals to exploit loopholes and get away without penalties. Some countries have recently enacted regulations to monitor suspicious deepfake activity in the financial sector.

US

The National Defense Authorization Act (NDAA) has broadened its scope to cover deepfake fraud. Under the Act, cybercrime investigation departments have been instructed to monitor all deepfake cases from the past five years and present a detailed report to Congress. The Act also calls for stringent laws against anyone involved in creating fake videos of politicians, military personnel, and their families.

Canada

Although the Canadian government has yet to pass laws specific to deepfake fraud, certain existing acts, particularly the Copyright Act and the Electronic Documents Law, govern fraud committed using deepfake technology. The Canadian legal community is actively working to address the malicious use of deepfakes and the financial fraud linked to it.

How Shufti Can Help

Deepfake-related scams are on the rise, posing a huge threat to the financial sector. The most viable option to counter criminals is efficient facial recognition that verifies users’ identities in real time. It is imperative for businesses to adopt the latest biometric authentication systems and stay a step ahead of fraudsters through a tech-powered mechanism.
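To make the idea of facial verification more concrete, here is a minimal sketch of one common approach: comparing a numeric "embedding" of a user’s selfie with an embedding of the photo on their ID document. This is an illustration only and does not describe Shufti’s implementation. The function names, the three-number embeddings, and the 0.8 threshold are all hypothetical; real systems use much longer embeddings produced by a face recognition model and tune the threshold on their own data.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine similarity of two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


def faces_match(selfie_embedding, id_embedding, threshold=0.8):
    """Decide whether two face embeddings likely belong to the same person.

    The threshold is a hypothetical value chosen for illustration.
    """
    return cosine_similarity(selfie_embedding, id_embedding) >= threshold


# Hypothetical embeddings: the first two are similar, the third is not.
selfie = [0.9, 0.1, 0.4]
id_photo = [0.8, 0.2, 0.5]
stranger = [-0.3, 0.9, -0.2]

print(faces_match(selfie, id_photo))
print(faces_match(selfie, stranger))
```

A similarity check like this only answers whether two images show the same face. On its own it does not prove that the selfie came from a live person rather than a deepfake, so in practice it is one signal among several.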

Shufti offers a facial recognition solution that can help prevent payment fraud, account takeovers, and identity theft. Powered by thousands of AI algorithms, Shufti’s identity verification services offer a secure, selfie-based onboarding method that provides results in five seconds with 98.67% accuracy.

Want to know more about how facial recognition services can counter deepfake scams?
