Age Verification for Online Dating Apps: Minor Protection, Catfishing Prevention and GDPR Compliance

Ofcom’s January 2025 guidance formally classified dating apps as “user-to-user” services under the Online Safety Act (OSA) 2023, setting a July 2025 deadline for highly effective age assurance. The ruling followed 130 police reports linking child sexual exploitation to underage users of dating apps since 2019. Dating platforms now face two compounding obligations: keeping minors out and stopping adults from hiding behind fabricated identities.

Age verification on a dating app is the process of confirming that a user has reached the platform’s minimum age using methods that go beyond self-declaration of a date of birth.

Both pressures land on the same engineering decision. A verification method needs to be accurate enough to meet the regulator’s definition of “highly effective,” private enough to comply with GDPR’s biometric data rules, and fast enough to avoid killing your onboarding conversion rate.

Why dating apps face a dual verification problem

Dating platforms carry two distinct verification failures that traditional onboarding tools never resolved. On one side, minors navigate onto platforms designed for adults, gaining access to content and contact they cannot legally consent to. On the other side, adults construct false identities using other people’s photos or stolen credentials to deceive genuine users.

The financial scale of the trust failure is documented in the US Federal Trade Commission’s Consumer Sentinel Data Book: romance scam losses reached $1.14 billion in 2023, with a median individual loss of $2,000, the highest median loss of any imposter scam category. These scams begin with a credible false identity on a dating or social platform. The same fraud vectors that enable romance scams also enable underage access: a user who can claim to be someone else can just as easily claim to be an adult.

Standard gatekeeping tools, including self-declared date of birth, email verification, and Facebook login, cannot distinguish between a legitimate adult and a child who entered a false birth year. The UK’s Online Safety Act changed the regulatory requirement, not the underlying technology: regulators now require a method that actually works.

The challenge is compounded by AI. Deepfake-generated profile photos and voice-cloning tools have made it easier to sustain a false identity across text and video interactions, raising the bar for what a credible verification flow needs to catch.

What the UK Online Safety Act requires of dating platforms

Dating platforms in the UK must meet the Ofcom “highly effective age assurance” standard under the Online Safety Act or face fines of up to 10% of global revenue or £18 million, whichever is greater. Ofcom’s January 2025 guidance set the compliance clock running, and the July 2025 deadline applied to child sexual exploitation and abuse (CSEA) reporting duties that placed dating apps squarely within scope.
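As a rough illustration of the "whichever is greater" rule, the sketch below computes the upper bound on a fine for a given revenue figure. The function name and the revenue figures are hypothetical, and the calculation ignores how Ofcom would define or measure revenue in practice.

```python
# Illustrative only: upper bound on an OSA fine as described above.
OSA_FIXED_CAP_GBP = 18_000_000   # GBP 18 million
OSA_REVENUE_DIVISOR = 10         # 10% of global revenue


def osa_max_fine_gbp(global_revenue_gbp: float) -> float:
    """Return the greater of GBP 18m and 10% of global revenue."""
    if global_revenue_gbp < 0:
        raise ValueError("revenue cannot be negative")
    return max(float(OSA_FIXED_CAP_GBP), global_revenue_gbp / OSA_REVENUE_DIVISOR)


# A platform with GBP 50m revenue: 10% is GBP 5m, so the GBP 18m figure applies.
small_platform_cap = osa_max_fine_gbp(50_000_000)

# A platform with GBP 1bn revenue: 10% is GBP 100m, which exceeds GBP 18m.
large_platform_cap = osa_max_fine_gbp(1_000_000_000)
```

For smaller platforms the fixed £18 million figure dominates, which is why the exposure is material even at modest revenue.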

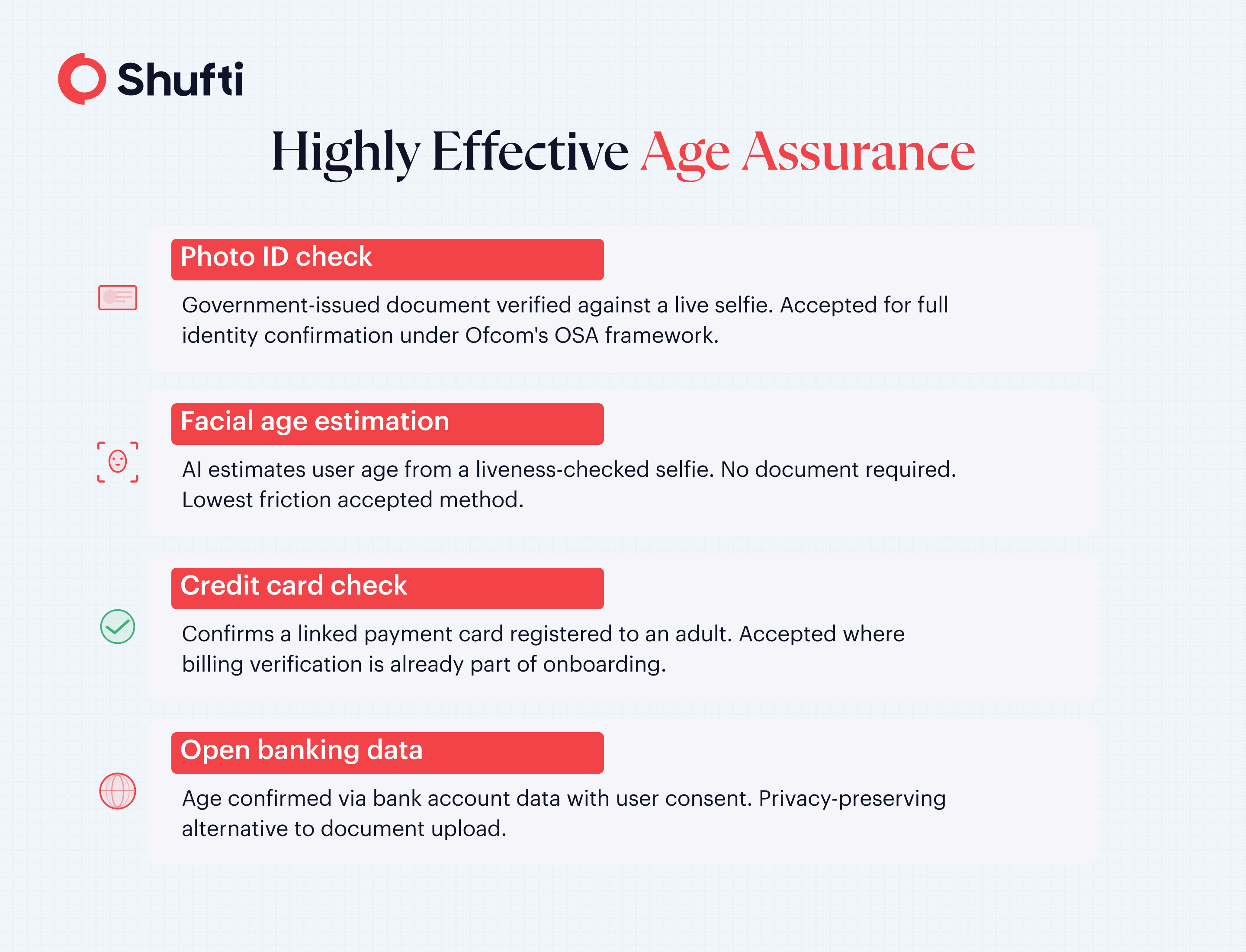

The “highly effective” standard rules out anything a motivated minor can bypass. Acceptable methods under Ofcom’s framework include photo-ID verification, facial age estimation, credit card checks, and open banking data. Self-declaration, where a user simply confirms they are over 18, is no longer a defensible approach for any platform classified as one that children are likely to access.

Ofcom also required all in-scope user-to-user services to complete children’s access assessments by April 2025, identifying whether children are likely to access the platform and what protective measures are needed. Dating apps, by their nature, meet that threshold without ambiguity.

The US regulatory picture is less consolidated. No federal law mandates age verification specifically for dating platforms. State-level online safety legislation in California, Texas, and other states imposes obligations on some social media services but does not target dating apps with the same specificity as the OSA. US-headquartered platforms serving UK users are fully within OSA scope regardless of where they are incorporated.

How does age verification prevent catfishing and identity fraud?

Identity fraud on dating platforms extends beyond minors misrepresenting their age. Adults build profiles using stolen photos, fabricated names, or credentials belonging to someone else, then use those profiles to defraud or harm genuine users. When a platform binds an account to a confirmed identity through biometric verification, the account can no longer be freely transferred or faked without triggering a mismatch at the next authentication point.

The most practical verification architecture for dating platforms works in two stages. The first is facial age estimation at onboarding, where a liveness-checked selfie runs through an AI model that estimates whether the person is above the platform’s minimum age threshold. No government-issued document is required at this stage, which keeps the onboarding flow short. The second stage is face matching, where the live selfie is compared against the profile image captured at registration. Any subsequent login or account interaction can be re-verified against the enrolled face, catching account transfers and borrowed credentials that passed the initial check.
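The two stages can be sketched as plain decision logic. This is a minimal sketch under stated assumptions: the age estimate and the face similarity score are taken as inputs from models that are not implemented here, and the names `SelfieCheck`, `onboard`, and the 0.80 threshold are hypothetical rather than any vendor's API or recommended setting.

```python
from dataclasses import dataclass

MIN_AGE = 18            # platform minimum age (assumed)
MATCH_THRESHOLD = 0.80  # hypothetical face-similarity cut-off


@dataclass(frozen=True)
class SelfieCheck:
    liveness_passed: bool  # result of a liveness check (not implemented here)
    estimated_age: float   # output of an age-estimation model (not implemented here)


def stage_one_age_gate(check: SelfieCheck, min_age: int = MIN_AGE) -> bool:
    """Stage 1: the selfie must be live and the estimate must meet the minimum age."""
    return check.liveness_passed and check.estimated_age >= min_age


def stage_two_face_match(similarity: float, threshold: float = MATCH_THRESHOLD) -> bool:
    """Stage 2: the live selfie must resemble the enrolled profile image."""
    return similarity >= threshold


def onboard(check: SelfieCheck, similarity: float) -> str:
    """Run both stages in order and report the first failure, if any."""
    if not stage_one_age_gate(check):
        return "rejected_age_gate"
    if not stage_two_face_match(similarity):
        return "rejected_face_mismatch"
    return "verified"
```

The same `stage_two_face_match` check can be reused at later logins, which is what allows an account transfer to surface as a mismatch.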

Dating platforms that carry the highest abuse risk benefit from periodic re-verification, rather than treating the signup check as a one-time clearance. This is the same principle that financial services firms apply to ongoing customer due diligence. Social media platforms face comparable challenges with age verification, including the ways minors attempt to bypass standard controls.
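One way to express periodic re-verification is a simple schedule keyed on account risk. The tiers and intervals below are illustrative assumptions, not regulatory requirements or values taken from any platform.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical intervals: higher-risk accounts are re-checked more often.
REVERIFY_INTERVAL = {
    "standard": timedelta(days=180),
    "high_risk": timedelta(days=30),
}


def needs_reverification(
    last_verified: datetime,
    risk_tier: str,
    now: Optional[datetime] = None,
) -> bool:
    """Return True when the time since the last check meets or exceeds the tier's interval."""
    if now is None:
        now = datetime.now(timezone.utc)
    return now - last_verified >= REVERIFY_INTERVAL[risk_tier]
```

A login handler could call `needs_reverification` and, when it returns True, route the user through a fresh face match before granting access.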

Tinder, operated by Match Group, began expanding its ID verification feature to users in the US, UK, Brazil, and Mexico in early 2024, requiring a video selfie and a valid government-issued ID matched to the profile. By 2025, new users in some regions were required to complete face liveness checks before using the app. Document verification combined with biometric matching is becoming the baseline expectation for major dating platforms. The direction is set.

What GDPR means for biometric data on dating apps

Selfies and face templates used for age estimation and face matching fall under Article 9 of the General Data Protection Regulation (GDPR), enforceable since May 2018, as a special category of personal data. Special category data carries stricter processing conditions than standard personal data. For dating platforms serving users in the European Union, this creates concrete obligations that sit alongside, not beneath, the Ofcom age assurance requirement.

Processing biometric data for verification requires explicit, informed consent. The user must know what data is being collected, for what purpose, and for how long it will be retained. Facial age estimation reduces the privacy footprint of this step: the model estimates age from the image, and the image can be discarded immediately after the check without storing a face template. This approach aligns closely with the GDPR data minimisation principle.
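A consent-gated, discard-after-check flow might look like the sketch below. It is an assumption-laden illustration: `estimate_age` stands in for whatever model the platform uses, `AgeDecision` and `verify_age` are hypothetical names, and clearing an in-memory buffer is only a best-effort step that does not by itself make a system GDPR-compliant (logs, caches, and backups need the same treatment).

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class AgeDecision:
    """The only record kept: no image and no face template."""
    user_id: str
    over_min_age: bool
    checked_at: datetime


def verify_age(
    user_id: str,
    selfie: bytearray,
    consent_given: bool,
    estimate_age: Callable[[bytearray], float],  # placeholder for a real model
    min_age: int = 18,
) -> AgeDecision:
    """Estimate age only with consent, then wipe the image buffer."""
    if not consent_given:
        raise PermissionError("explicit consent is required before processing biometric data")
    try:
        estimated = estimate_age(selfie)
    finally:
        # Best-effort discard: overwrite the bytes, then empty the buffer.
        selfie[:] = bytes(len(selfie))
        selfie.clear()
    return AgeDecision(user_id, estimated >= min_age, datetime.now(timezone.utc))
```

Keeping the stored record to a yes/no decision and a timestamp is the design choice that keeps the retained data small.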

Platforms that retain biometric data beyond the verification decision carry a substantially higher exposure. Enforcement under Article 9 can result in fines of up to €20 million or 4% of annual global turnover, whichever is higher. Building a verification flow that is private by design, with configurable retention and clear consent architecture, is the more defensible approach for any platform already navigating Ofcom’s OSA requirements. The two frameworks are not in conflict when the verification method is chosen correctly.

How Shufti helps dating platforms verify age and identity

Dating platforms need verification that clears a regulatory bar without creating friction that drives users away before they complete onboarding. Shufti’s age verification solution addresses both sides of that trade-off through a staged approach that starts with the lowest-friction method and escalates only when risk warrants it.

Shufti’s facial age estimation runs a liveness-checked selfie through its proprietary biometric AI stack, confirming whether a user is above the minimum age threshold without requiring a government-issued document at the first step. Where the platform’s risk profile demands stronger assurance, document verification layers in as a second check. This waterfall approach keeps onboarding completion rates high while meeting the “highly effective age assurance” standard Ofcom requires.
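The general shape of a waterfall flow can be sketched as follows. This is not Shufti's API or its actual decision logic: the function name, the step labels, and the five-year buffer above the minimum age are assumptions made purely for illustration.

```python
def next_step(
    estimated_age: float,
    liveness_passed: bool,
    high_risk: bool,
    min_age: int = 18,
    buffer_years: int = 5,  # hypothetical safety margin above the minimum age
) -> str:
    """Pick the lowest-friction step that still gives enough assurance."""
    if not liveness_passed:
        return "retry_selfie"
    # Escalate when the platform flags higher risk, or when the estimate is
    # not comfortably above the minimum age.
    if high_risk or estimated_age < min_age + buffer_years:
        return "document_check"
    return "approved"
```

Under these assumed settings, a user estimated at 30 on a standard-risk flow is approved from the selfie alone, while a user estimated at 20 is asked for a document.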

For catfishing prevention, Shufti’s face verification matches a live selfie against the enrolled profile image, confirming the person logging in is the same person who registered. The check runs in under 15 seconds, built on 100% proprietary biometric models with no third-party processing layer touching user data. Shufti holds iBeta Level 1 and Level 2 and recently achieved iBeta PAD Level 3 certification for passive liveness on both Android and iOS, meaning the stack is independently tested against the deepfake, mask, and injection attacks increasingly targeting platform onboarding flows. Platforms can configure data retention settings to discard the biometric image after the verification decision is recorded, keeping the architecture GDPR-compliant from the start.

Dating platforms face a regulatory and reputational obligation to verify who their users are, from the first signup screen through to ongoing account activity. Shufti’s age estimation and face verification work together to meet that obligation without turning onboarding into a compliance form. Book a demo to see how the verification flow performs against a real dating platform onboarding sequence.

Frequently Asked Questions

Do dating apps need age verification?

Yes, in regulated markets. The UK’s Online Safety Act 2023 requires dating platforms to implement highly effective age assurance, and the EU’s Digital Services Act imposes risk-based obligations on large online platforms. In the US, no federal mandate targets dating apps specifically, though state-level online safety laws are expanding.

What is the UK Online Safety Act requirement for dating apps?

Ofcom’s guidance requires dating apps classified as user-to-user services to implement highly effective age assurance, ruling out self-declaration. Acceptable methods include photo-ID verification, facial age estimation, and banking data checks. Non-compliance can result in fines of up to 10% of global revenue or £18 million, whichever is greater.

Can dating apps use facial age estimation instead of ID?

Yes. Facial age estimation is accepted under Ofcom’s highly effective age assurance framework. It estimates a user’s age from a liveness-verified selfie without requiring a document upload, making it a lower-friction option for the initial onboarding screen, with document verification available as an escalation step.

How does age verification prevent catfishing?

When a platform binds an account to a verified biometric identity at signup, the account cannot be transferred to a different person without triggering a face-match failure. Periodic re-verification checks reinforce this by confirming that the person logging in is the same person who registered.

What is the GDPR impact on age verification for dating apps?

Selfies and biometric templates used in age verification are classified as special category personal data under Article 9 of the GDPR. Platforms must obtain explicit, informed consent before processing, apply data minimisation, and define a lawful retention period. Non-compliance can result in fines of up to €20 million or 4% of annual global turnover, whichever is higher.