Generative AI Deepfake Detection: Why Legal and Technical Layers Must Work Together

In early 2024, an employee at engineering firm Arup approved transfers totalling about $25 million after a video call with people the employee believed were senior colleagues. None of them were real. Every face, every voice on that call was a generative AI deepfake, and the money had left the account before anyone realised the meeting had never happened.

If you run fraud, KYC, or onboarding at a regulated business, the Arup case is not an outlier story. It is a preview of what generative AI does to identity evidence when detection tools lag the models producing the attacks.

A generative AI deepfake is synthetic audio, image, or video produced by modern diffusion or multimodal models, designed to look like a specific real person. Unlike the GAN-era fakes of 2019, these outputs carry almost none of the visible tells that early detectors were trained on. Combine that with cheap cloud inference and consumer-grade face-swap tools, and the detection problem is no longer a lab exercise.

The regulatory side is moving too. Article 50 of the EU AI Act, whose transparency obligations apply from 2 August 2026, requires deployers of AI systems that generate deepfake content to disclose that the content has been artificially generated (EU AI Act Article 50, Future of Life Institute). Technical detection alone is not going to be enough. What teams actually need is a layered framework that pairs runtime biometric signals with content provenance at source and a legal disclosure backstop. This article walks through how that framework works and what it means for the way you evaluate identity verification today.

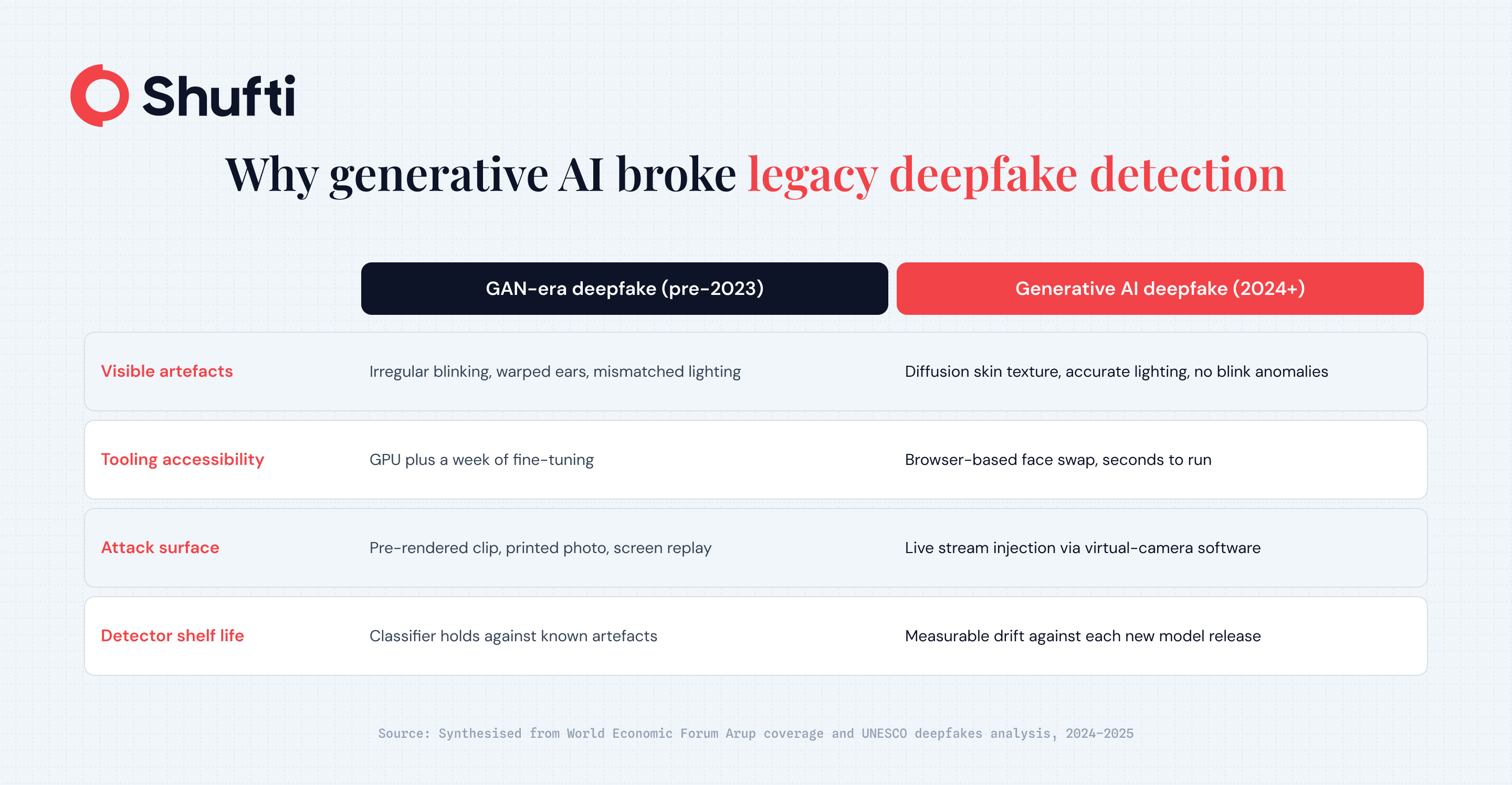

Why Generative AI Broke the Old Detection Playbook

For most of the last decade, deepfake detectors looked for small artefacts that generative models left behind: irregular blinking, inconsistent skin texture, mismatched lighting across a face, warped earlobes where the training data had been thin. When someone on your fraud team said a selfie “looked off”, they were usually picking up one of those tells without having to name it.

Modern generative AI erased most of them. Diffusion models produce skin texture that reads as authentic under pixel-level inspection. Voice-cloning systems capture cadence and breathing from a few seconds of audio. Face-swap pipelines now run in real time during a video stream, not as a pre-rendered clip. The UNESCO paper on synthetic media calls this shift a “crisis of knowing”, because the artefacts we used to trust are gone.

Three specific changes matter for KYC and fraud teams.

First, accessibility. Face-swap tooling that once required a GPU and a week of fine-tuning now runs in a browser. A fraudster does not need machine-learning expertise to produce a convincing video of someone else’s face.

Second, the attack surface moved. Virtual-camera software lets an attacker inject a pre-recorded or live-generated stream into a verification flow, bypassing the phone’s actual camera altogether. This is an injection attack, and it does not trigger RGB-only liveness checks that assume the pixels came from a real lens.

Third, the detection shelf life shrank. A classifier trained on last year’s deepfakes is measurably weaker against this year’s models. The 2024 NIST GenAI pilot study showed detection accuracy holding up against summarisation-style attacks, but flagged model drift as a standing risk for any deployed detector.
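One way to make the shelf-life problem visible is to re-test a deployed detector on freshly collected, labelled fake samples and compare its recall with the figure measured at deployment. The sketch below is illustrative only: the function name `recall_has_drifted`, the 0.05 tolerance, and the sample counts are assumptions, not values from NIST or any vendor.

```python
def recall_has_drifted(baseline_recall, recent_flags, tolerance=0.05):
    """Compare recall on recent known-fake samples with a baseline.

    recent_flags holds one bool per known-fake sample: True if the
    detector flagged it. Returns (drifted, recent_recall).
    The tolerance is a hypothetical value chosen for illustration.
    """
    if not recent_flags:
        raise ValueError("need at least one recent labelled sample")
    recent_recall = sum(recent_flags) / len(recent_flags)
    drifted = recent_recall < baseline_recall - tolerance
    return drifted, recent_recall


# Detector caught 17 of 20 fakes produced with newer tools.
drifted, recent = recall_has_drifted(0.95, [True] * 17 + [False] * 3)
```

A check like this only works if someone keeps feeding it samples from current generation tools, which is the operational cost that drift imposes on any deployed detector.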

The Emerging Legal Framework Is Rights-Based, Not Just Technical

Regulators have stopped treating deepfakes purely as a fraud typology. The newer framing sits closer to a human-rights problem, where synthetic media threatens identity, reputation, and informed consent. A recent peer-reviewed paper in the International Journal of Law, Crime and Justice argues for exactly that shift, proposing a legal framework that treats deepfake detection as a rights-protection duty rather than a platform feature.

Article 50 of the EU AI Act is the first hard deadline. From 2 August 2026, deployers of AI systems that generate or manipulate image, audio, or video content constituting a deepfake must disclose that the content has been artificially generated. The same provision requires machine-readable marking of synthetic outputs and requires that users be informed when they are interacting with an AI system. The exemptions are narrow, covering lawful criminal investigation and evidently artistic or satirical work.

The European Commission has been working on implementation detail through its Code of Practice on marking and labelling AI-generated content, with a final version expected in June 2026, before Article 50 starts to apply. For a fraud or compliance team, the practical implication is that “we could not tell it was fake” stops being a usable defence in regulated onboarding flows from that date.

The other piece of the puzzle is content provenance. The C2PA standard, developed by the Coalition for Content Provenance and Authenticity, defines how media files can carry tamper-evident metadata about who created them, with what tool, and how they were edited. C2PA is not a deepfake detector. It records history. Paired with detection, it gives teams two independent signals to cross-check when a submission arrives through a verification flow.

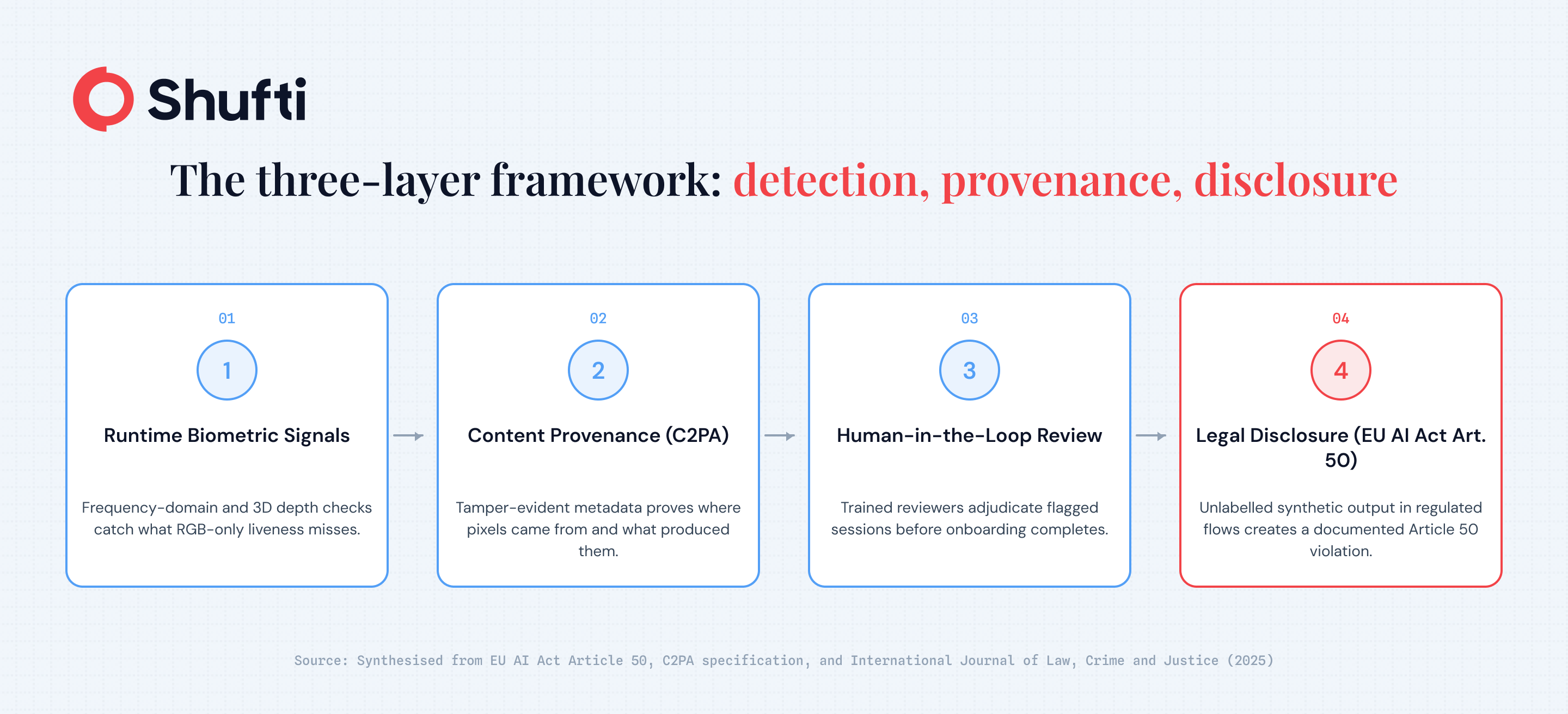

A Three-Layer Detection Model for KYC and Fraud Teams

The durable answer to generative AI deepfakes is not a single smarter classifier. It is layered defence, because no layer survives on its own.

Layer one is runtime biometric detection. This covers the signals a camera can produce in real time during verification. Frequency-domain analysis catches artefacts that are invisible in the RGB view but show up when the image is transformed. 3D depth and parallax checks confirm a three-dimensional face is actually present. Behavioural cues, including micro-movements and response to passive prompts, separate a live person from a replayed or injected stream. A well-designed liveness layer also screens for injection attacks from virtual cameras, not only printed photos and screen replays.
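To make "frequency-domain analysis" concrete, here is a minimal sketch of one such feature: the share of an image patch's spectral energy that sits in the high-frequency bins. It uses a naive discrete Fourier transform so it runs without third-party libraries. The function names, the 0.25 cutoff, and the 8x8 test patches are assumptions for illustration. Production detectors typically learn their features from data, and this sketch makes no claim about which values indicate synthetic media.

```python
import cmath
import math


def dft2(patch):
    """Naive 2D discrete Fourier transform of a small square grayscale patch."""
    n = len(patch)
    if any(len(row) != n for row in patch):
        raise ValueError("patch must be square")
    spectrum = [[0j] * n for _ in range(n)]
    for u in range(n):
        for v in range(n):
            total = 0j
            for x in range(n):
                for y in range(n):
                    angle = -2 * math.pi * (u * x + v * y) / n
                    total += patch[x][y] * cmath.exp(1j * angle)
            spectrum[u][v] = total
    return spectrum


def signed_frequency(index, n):
    """Convert a DFT bin index to cycles per pixel in the range (-0.5, 0.5]."""
    return index / n if index <= n // 2 else (index - n) / n


def high_frequency_ratio(patch, cutoff=0.25):
    """Share of non-DC spectral energy at or above `cutoff` cycles per pixel."""
    n = len(patch)
    spectrum = dft2(patch)
    high_energy = 0.0
    total_energy = 0.0
    for u in range(n):
        for v in range(n):
            if u == 0 and v == 0:
                continue  # skip the DC term, which only encodes mean brightness
            energy = abs(spectrum[u][v]) ** 2
            total_energy += energy
            freq = max(abs(signed_frequency(u, n)), abs(signed_frequency(v, n)))
            if freq >= cutoff:
                high_energy += energy
    # Treat numerically flat patches as having no high-frequency content.
    if total_energy < 1e-9:
        return 0.0
    return high_energy / total_energy


# A slowly varying patch versus a pixel-level checkerboard.
smooth = [[128 + 50 * math.cos(2 * math.pi * x / 8) for _ in range(8)] for x in range(8)]
checker = [[255 if (x + y) % 2 == 0 else 0 for y in range(8)] for x in range(8)]
```

The two patches could look like similar mid-grey regions to a casual viewer at low resolution, yet the smooth one has essentially no energy above the cutoff while the checkerboard has all of it there. That separation is the kind of signal a frequency-domain check exposes.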

Layer two is content provenance. When a submission carries C2PA credentials, a verification system can check who signed the content, which camera or editing tool was used, and whether metadata has been altered since creation. Leica, Samsung, and Google have started shipping native C2PA signing on consumer hardware, so credentialed media is no longer theoretical. A submission without credentials is not automatically fraudulent, but the absence is a signal worth routing into risk scoring.
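The idea of routing provenance into risk scoring can be sketched as a small adjustment applied to a detector's score. This is a hypothetical illustration: it assumes a separate C2PA validator has already classified the submission as valid, invalid, or absent, and it calls no real C2PA library. The `ProvenanceStatus` enum, the `adjusted_risk` function, and the adjustment weights are invented for the example and would need calibrating against your own fraud data.

```python
from enum import Enum


class ProvenanceStatus(Enum):
    VALID = "valid"      # credentials present and signature checks passed
    INVALID = "invalid"  # credentials present but altered or unverifiable
    ABSENT = "absent"    # no credentials attached to the submission


# Hypothetical weights: absence nudges risk up slightly, tampering sharply.
PROVENANCE_ADJUSTMENT = {
    ProvenanceStatus.VALID: -0.10,
    ProvenanceStatus.ABSENT: 0.05,
    ProvenanceStatus.INVALID: 0.40,
}


def adjusted_risk(detector_risk: float, status: ProvenanceStatus) -> float:
    """Combine a detector risk score in [0, 1] with the provenance signal."""
    if not 0.0 <= detector_risk <= 1.0:
        raise ValueError("detector_risk must be between 0 and 1")
    score = detector_risk + PROVENANCE_ADJUSTMENT[status]
    return min(1.0, max(0.0, score))  # clamp back into [0, 1]
```

Keeping the adjustment for absent credentials small reflects the point above: most legitimate submissions today carry no credentials, so absence should inform the score without deciding it.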

Layer three is human-in-the-loop review plus the legal disclosure backstop. Hybrid AI and human review handles edge cases where automated confidence is low, and routes ambiguous sessions to trained reviewers rather than forcing a binary accept or reject. Article 50 disclosure duties, once they apply, turn the legal layer into an enforceable part of the architecture. Content that should have been labelled and was not creates a documented violation, which strengthens the position of businesses that applied reasonable diligence.
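The routing rule described here, sending ambiguous sessions to reviewers instead of forcing a binary outcome, can be sketched as a three-way decision over a risk score. The names `Decision` and `route_session` and the 0.2 and 0.8 thresholds are assumptions for illustration. Real thresholds would be set from reviewer capacity and observed error rates.

```python
from enum import Enum


class Decision(Enum):
    ACCEPT = "accept"
    REVIEW = "human_review"
    REJECT = "reject"


def route_session(risk: float, accept_below: float = 0.2,
                  reject_above: float = 0.8) -> Decision:
    """Route a verification session based on a risk score in [0, 1].

    Scores between the two thresholds (inclusive) go to a human reviewer.
    The default thresholds are hypothetical.
    """
    if not 0.0 <= risk <= 1.0:
        raise ValueError("risk must be between 0 and 1")
    if accept_below >= reject_above:
        raise ValueError("accept_below must be less than reject_above")
    if risk < accept_below:
        return Decision.ACCEPT
    if risk > reject_above:
        return Decision.REJECT
    return Decision.REVIEW
```

Widening the gap between the two thresholds sends more sessions to reviewers and fewer to automated decisions, so the band is effectively a dial between review cost and the risk of an automated mistake.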

Practical Next Steps for Compliance and Fraud Teams

A few checks are worth running on your current identity verification stack before Article 50 starts to apply.

Ask your vendor whether their liveness model screens for virtual-camera injection, not only printed and screen-replay attacks. Ask whether detection is validated by a government or independent body rather than the vendor’s own lab, because self-reported accuracy numbers have a poor track record against current attack vectors. Confirm there is a review path for sessions where automated confidence is low, because fully automated decisioning on generative AI attacks is how convincing fraud gets through.

Align your onboarding disclosure language with the Article 50 duty now, rather than retrofitting in July 2026. The Code of Practice text that lands in June will specify the detail, so leave room in the copy review cycle to incorporate it.

Generative AI deepfakes are already inside live verification flows, and the gap between “our liveness passes” and “we verified a real person” is where losses like the Arup case now sit. Shufti’s deepfake detection combines multi-layered anti-spoofing across 56+ attack vectors with hybrid AI and human review, active and passive liveness, and on-premises, cloud, or hybrid deployment. Request a demo to run a generative AI deepfake sample through the pipeline and see the verdict.

Frequently Asked Questions

What detection methods are most effective against generative AI deepfakes?

Layering runtime biometric signals, C2PA content provenance, and ensemble models combining frequency-domain and behavioural checks consistently outperforms any single-method approach.

What are the ethical implications of generative AI and deepfake detection?

Balancing fraud prevention with GDPR consent obligations, demographic bias in detection models, and EU AI Act Article 50 disclosure requirements demands transparent governance at every layer.

How does generative AI affect deepfake detection?

It shortens detector shelf life, introduces injection attacks via virtual cameras, and forces defence to combine runtime biometrics, content provenance, and legal disclosure instead of single-model classification.

How is the generative AI industry responding to deepfake misuse?

Through open standards like C2PA for content provenance, voluntary AI labelling commitments, and participation in the European Commission’s Code of Practice on marking AI-generated content.

Will AI-generated deepfakes become undetectable in the future?

Possibly against any single detector, which is why provenance-at-source and legal disclosure under Article 50 are designed to work alongside detection rather than rely on it alone.

How does the C2PA standard help fight AI-generated deepfakes?

C2PA attaches tamper-evident metadata to media at creation, recording who made it and how, so verification systems can cross-check provenance independently of whether the content itself looks real.