Deepfake Detection System: How It Protects Digital Identity from AI-Generated Fraud

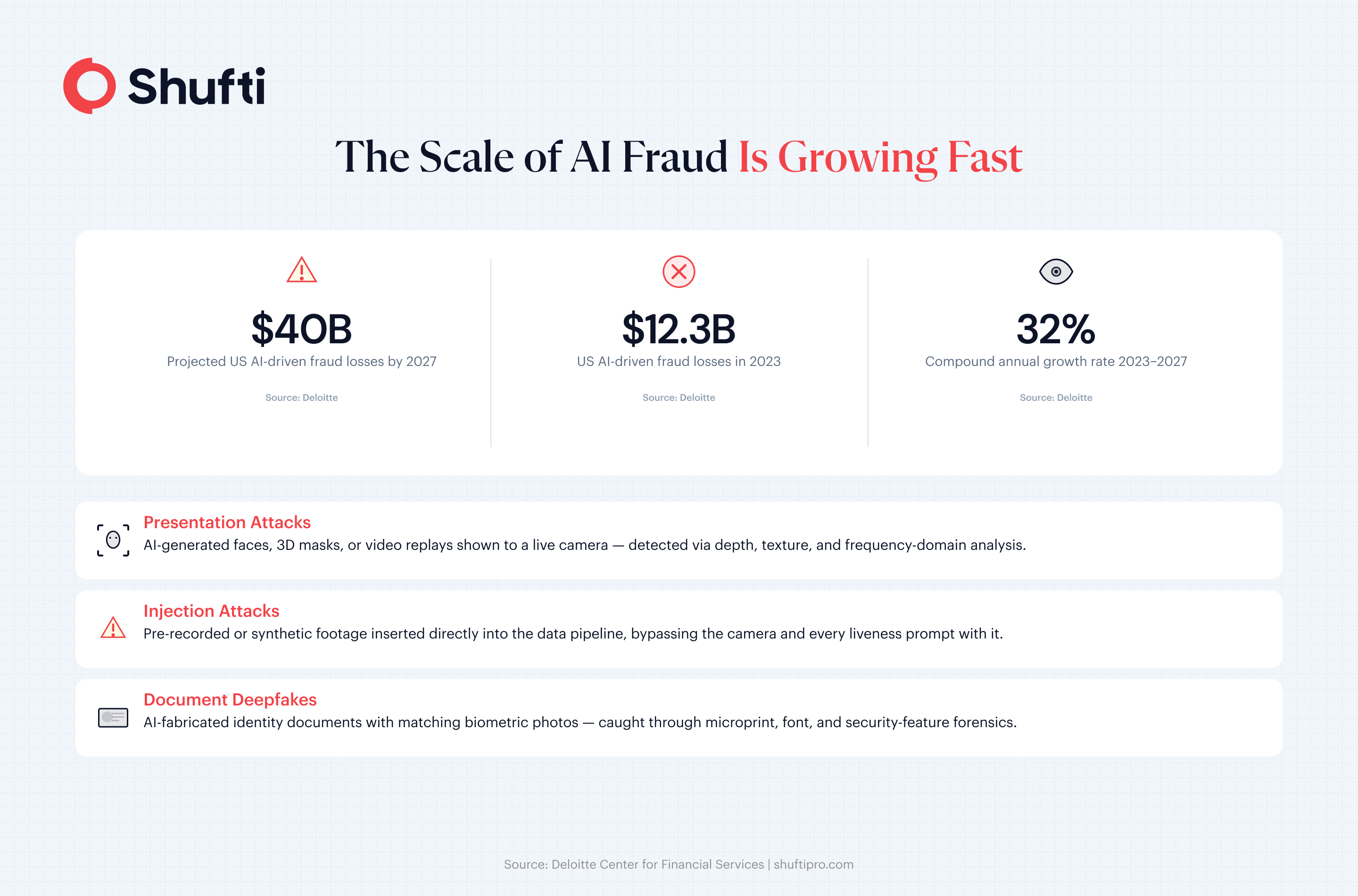

In January 2024, a finance worker at a Hong Kong company transferred $25 million to fraudsters after a video call with what appeared to be the firm’s CFO and colleagues. Every person on that call was AI-generated. Deloitte’s Center for Financial Services forecasts that generative AI could drive fraud losses in the United States to $40 billion by 2027, up from $12.3 billion in 2023, a 32% compound annual growth rate.

Regulators responded quickly. In November 2024, the US Treasury’s Financial Crimes Enforcement Network issued alert FIN-2024-Alert004, reporting a rise in suspicious activity filings from financial institutions describing suspected deepfake media used to bypass identity verification and authentication controls. Document checks and basic liveness prompts, it found, were no longer enough on their own.

A deepfake detection system is the technical response to that gap. This article covers what one actually is, the attack types it must address, how it handles real-time processing at scale, and what to look for when selecting and deploying one.

A Detection System Is a Pipeline, Not a Single Check

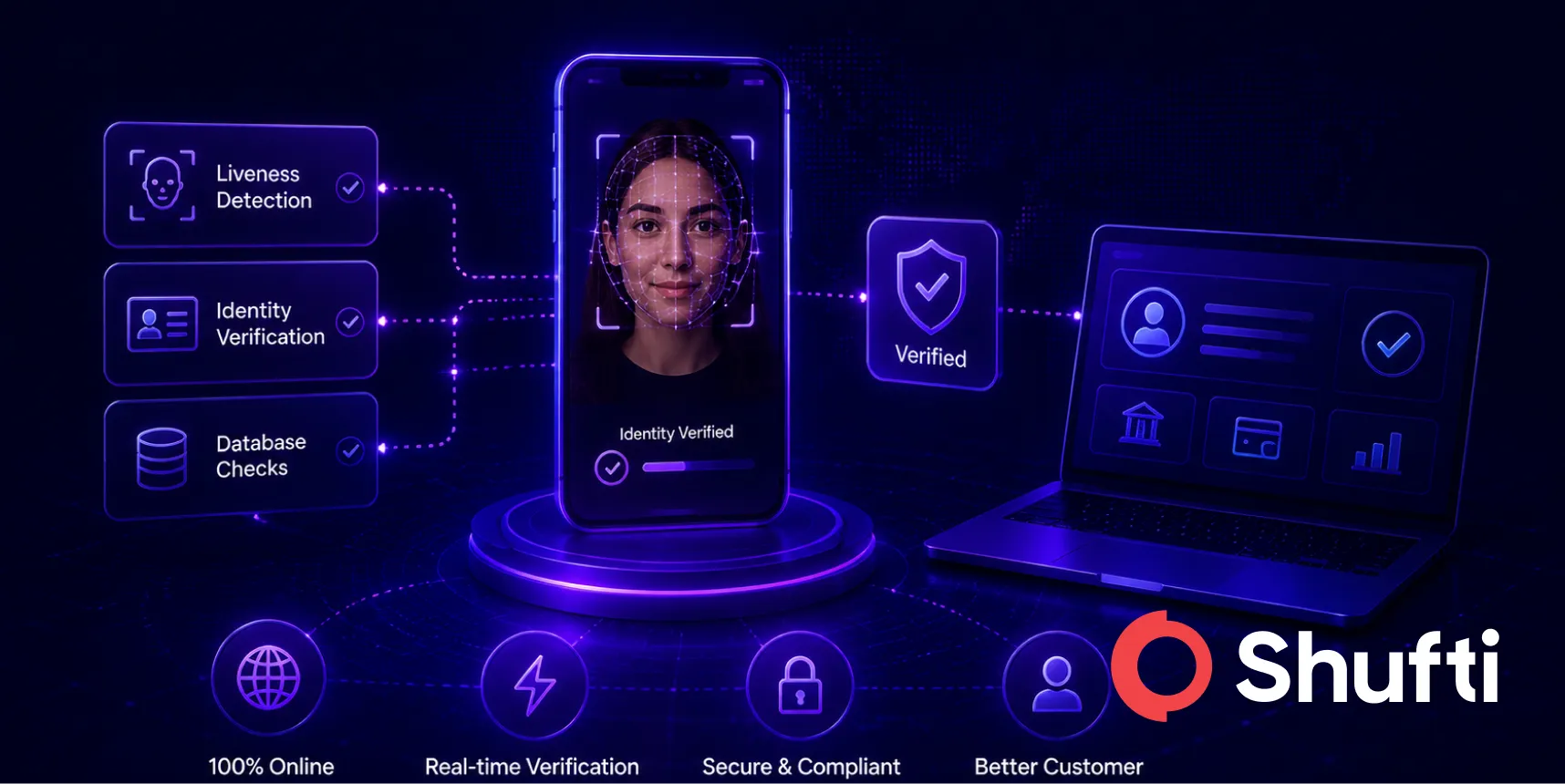

Most coverage treats deepfake detection as a single AI model that flags synthetic faces. In production, it is an integrated analysis pipeline covering multiple attack families across multiple media types at once.

Three distinct fraud scenarios require separate detection approaches. First, someone presents an AI-generated or manipulated face to a live camera: a face swap, a reenactment model, or a fully synthetic identity rendered in real time.

Second, someone bypasses the camera by injecting a pre-recorded video stream directly into the data pipeline, so the verification system never sees a real camera feed. Third, the identity document itself is AI-fabricated: a passport or driver’s license generated to match the synthetic persona, complete with consistent personal data and biometric photos.

Each attack type needs a different technical response. A system that only analyzes facial video will miss injection attacks. A system that only validates document forensics will miss a live face swap. Production-grade deepfake detection systems run all three layers in parallel to close the gap between them rather than leaving each layer siloed. For a detailed breakdown of what makes liveness detection vulnerable, see our analysis of face spoofing and liveness bypass attacks.
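As a rough illustration of running the three layers in parallel, the sketch below fans one session out to three checks and keeps the highest risk score. The function names, the session fields, and the 0-to-1 risk scale are all hypothetical stand-ins for real detectors, not any vendor's API.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class LayerResult:
    layer: str
    risk: float  # assumed scale: 0.0 looks genuine, 1.0 almost certainly fraud


# Hypothetical stand-ins: a real layer would call a model or a service here.
def check_presentation(session: dict) -> LayerResult:
    return LayerResult("presentation", session.get("face_risk", 0.0))


def check_injection(session: dict) -> LayerResult:
    return LayerResult("injection", session.get("transport_risk", 0.0))


def check_document(session: dict) -> LayerResult:
    return LayerResult("document", session.get("document_risk", 0.0))


LAYERS = [check_presentation, check_injection, check_document]


def analyze_session(session: dict) -> dict:
    """Run every layer concurrently and report the worst (highest) risk."""
    with ThreadPoolExecutor(max_workers=len(LAYERS)) as pool:
        results = list(pool.map(lambda layer: layer(session), LAYERS))
    worst = max(results, key=lambda r: r.risk)
    return {
        "risk": worst.risk,
        "flagged_by": worst.layer,
        "layers": {r.layer: r.risk for r in results},
    }
```

Taking the maximum rather than an average means a clean face scan cannot mask a suspicious transport signal, which is the gap a siloed design leaves open.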

Attack Vectors a Modern System Must Cover

Sophisticated fraud campaigns typically combine several techniques based on what the target system does and does not catch.

Presentation attacks include everything presented to a real camera: printed photos, 2D and 3D masks, video replays of a genuine person’s face, and AI-generated images or live synthetic faces. Detection at this layer relies on analyzing depth, skin texture, light reflection patterns, blood flow signals, and frequency-domain anomaly artifacts in pixel data that are invisible to a human reviewer but detectable by trained models. Systems relying only on RGB-channel pixel analysis are exposed to frequency-domain attacks that pass the visible check while failing under spectral inspection.
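To make the idea of frequency-domain inspection concrete, here is a toy sketch that measures how much of a pixel row's spectral energy sits in the upper frequency bins. It is only an illustration of the kind of signal a trained model might consume: the function names and the 0.5 cutoff are assumptions, production systems typically work on 2D spectra with learned classifiers, and a high ratio on its own proves nothing about authenticity.

```python
import cmath
import math
from typing import List


def dft_magnitudes(signal: List[float]) -> List[float]:
    """Naive O(n^2) discrete Fourier transform; magnitudes for bins 0..n//2."""
    n = len(signal)
    mags = []
    for k in range(n // 2 + 1):
        total = sum(
            x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(signal)
        )
        mags.append(abs(total))
    return mags


def high_frequency_ratio(row: List[float], cutoff: float = 0.5) -> float:
    """Share of non-DC spectral energy in the bins above `cutoff`.

    `cutoff` is a fraction of the non-DC bins; 0.5 means "upper half".
    """
    mean = sum(row) / len(row)
    centered = [x - mean for x in row]
    if sum(c * c for c in centered) < 1e-12:
        return 0.0  # flat row: no spectral content to measure
    energy = [m * m for m in dft_magnitudes(centered)[1:]]  # skip the DC bin
    first_high = int(len(energy) * cutoff)
    return sum(energy[first_high:]) / sum(energy)
```

A smooth gradient yields a ratio near 0, while a pixel-level alternating pattern yields a ratio near 1, even though both can look unremarkable in a quick visual check of the RGB image.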

Injection attacks operate at the network layer rather than the camera layer. The attacker intercepts the data stream between the camera and the verification system, substituting pre-recorded or AI-generated footage for the real feed. No liveness prompt stops this, because the prompt never reaches a real person. Detection requires verifying data transport integrity, not just analyzing what the feed shows.
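One way to think about transport integrity is a per-frame authentication tag bound to a server-issued session nonce and a sequence number. The sketch below assumes a trusted capture component that shares a secret key with the verifier; that key provisioning, and the function names, are hypothetical. It also does not address a compromised device that injects footage before signing, which would call for additional controls such as device attestation.

```python
import hashlib
import hmac


def sign_frame(key: bytes, nonce: bytes, seq: int, frame: bytes) -> bytes:
    """Tag a frame with HMAC-SHA256 over nonce + sequence number + frame bytes."""
    message = nonce + seq.to_bytes(8, "big") + frame
    return hmac.new(key, message, hashlib.sha256).digest()


def verify_frame(key: bytes, nonce: bytes, seq: int, frame: bytes, tag: bytes) -> bool:
    """Recompute the tag server-side and compare in constant time."""
    expected = sign_frame(key, nonce, seq, frame)
    return hmac.compare_digest(expected, tag)
```

Binding the tag to a fresh nonce means footage recorded in an earlier session fails verification, and binding it to the sequence number means frames cannot be reordered or spliced in without detection.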

Document deepfakes are AI-generated identity documents with consistent biometric photos and personal data. Basic OCR cannot catch them, because the text is typographically correct. Forensic detection looks at font consistency, microprint patterns, security feature placement, and compression artifacts specific to genuine documents from each country and issuer, as well as patterns that generative models reproduce imperfectly at the pixel level, even when the top-level content appears clean.

Real-Time Processing Under Volume

An AI deepfake detection system deployed at scale has to reach a decision in seconds, not minutes. That is a real engineering constraint, because the analysis techniques with the highest accuracy (multi-stream RGB and frequency-domain processing, depth estimation, and behavioral micro-expression tracking) are computationally expensive at session volume.

Production systems address this through tiered processing. A fast initial pass using lightweight models resolves the majority of sessions within two to three seconds. Sessions that return ambiguous or borderline signals escalate automatically to deeper multi-layer analysis. The most ambiguous cases or those exceeding a configured risk threshold route to expert human review without blocking the pipeline for other sessions.

This tiered structure keeps average time-to-decision low even when the underlying models are complex, because the expensive computation only runs for the fraction of sessions that need it. Active liveness asks the user for a micro-gesture that confirms physical presence; passive liveness captures the same confirmation signals in the background without any user instruction. Supporting both lets your compliance team tune friction by risk profile without losing coverage.
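The tiered routing described above can be sketched as a small decision function. The score bands are illustrative assumptions, not recommended values, and `deep_model` is a hypothetical callable standing in for the slower multi-layer analysis.

```python
from enum import Enum
from typing import Callable, Tuple


class Decision(Enum):
    ACCEPT = "accept"
    REJECT = "reject"
    HUMAN_REVIEW = "human_review"


def decide(
    fast_score: float,
    deep_model: Callable[[], float],
    fast_band: Tuple[float, float] = (0.2, 0.8),  # assumed ambiguous range, tier 1
    deep_band: Tuple[float, float] = (0.3, 0.7),  # assumed ambiguous range, tier 2
) -> Decision:
    """Resolve clear cases on the fast score; escalate only ambiguous ones."""
    low, high = fast_band
    if fast_score < low:
        return Decision.ACCEPT
    if fast_score > high:
        return Decision.REJECT

    # Only ambiguous sessions pay for the expensive model.
    deep_score = deep_model()
    low, high = deep_band
    if deep_score < low:
        return Decision.ACCEPT
    if deep_score > high:
        return Decision.REJECT
    return Decision.HUMAN_REVIEW
```

Passing the deep model as a callable keeps it lazy: it is never invoked for sessions the fast pass resolves, which is where the latency saving comes from.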

For a technical comparison of detection approaches currently in use, see deepfake detection methods for 2026.

Handling Edge Cases

Production systems account for poor lighting, low-resolution devices, partial facial occlusions, and cross-border document variations. Sessions that fail to capture cleanly are flagged for retry or routed to human review rather than auto-rejected, preserving genuine user acceptance rates without lowering the fraud threshold for ambiguous inputs.
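A minimal sketch of that retry-or-review behavior follows. The quality fields, the thresholds, and the retry limit are hypothetical; the point is only that an unusable capture leads to a retry or a human, never to an automatic rejection or to a relaxed fraud threshold.

```python
from dataclasses import dataclass


@dataclass
class CaptureQuality:
    brightness: float             # assumed 0.0 (black) to 1.0 (saturated)
    face_visible_fraction: float  # share of the face that is unoccluded
    resolution_px: int            # shorter side of the frame, in pixels


def next_step(quality: CaptureQuality, attempts: int, max_retries: int = 2) -> str:
    """Return 'analyze', 'retry', or 'human_review' for a capture attempt."""
    usable = (
        quality.brightness >= 0.25
        and quality.face_visible_fraction >= 0.8
        and quality.resolution_px >= 480
    )
    if usable:
        return "analyze"
    # Poor captures never auto-reject: retry first, then hand off to a person.
    return "retry" if attempts < max_retries else "human_review"
```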

How Enterprises Deploy Deepfake Detection for Fraud Prevention

Large enterprises integrate deepfake detection across onboarding, step-up authentication, and account recovery workflows. Fraud, compliance, and engineering teams configure separate risk thresholds by business unit, with higher friction for high-value transactions and passive liveness for low-risk flows. Detection outputs feed directly into AML screening and KYC orchestration layers for a unified risk verdict.
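Per-unit risk configuration might look something like the sketch below. The business unit names, threshold values, and the high-value cutoff are invented for illustration; real values would come from your own fraud and compliance teams.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    liveness: str    # "passive" or "active"
    max_risk: float  # sessions scoring above this are escalated


# Hypothetical business units and thresholds.
POLICIES = {
    "retail_onboarding": Policy("passive", 0.6),
    "wire_transfers": Policy("active", 0.3),
}
DEFAULT_POLICY = Policy("active", 0.3)  # unknown units get the strictest settings


def policy_for(
    business_unit: str, amount_usd: float, high_value_usd: float = 10_000
) -> Policy:
    """Look up the unit's policy, tightening it for high-value transactions."""
    policy = POLICIES.get(business_unit, DEFAULT_POLICY)
    if amount_usd >= high_value_usd:
        # High-value flows always get active liveness and a strict threshold.
        return Policy("active", min(policy.max_risk, 0.3))
    return policy
```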

On-premise, cloud, or hybrid. Many regulated enterprises, particularly in financial services and government, cannot route biometric data to third-party cloud infrastructure. An on-premise deployment keeps all biometric processing within the organization’s own environment. Cloud deployment allows faster model updates. Hybrid architecture separates sensitive biometric processing (on-premise) from non-sensitive metadata (cloud). Your data residency obligations, not architectural preference, should determine which model applies.

Regulatory context. The FinCEN FIN-2024-Alert004 alert identifies deepfake-circumvented identity verification as a Bank Secrecy Act reporting trigger. FATF guidance on AI and synthetic identity fraud ties biometric-bound customer due diligence to anti-money laundering obligations. In the EU, the AI Act imposes specific requirements on high-risk biometric systems used in identity decisions.

Knowing which frameworks govern your vertical is the starting point for configuring detection thresholds, audit trail depth, and escalation policies.

Integration architecture. A deepfake detection system does not replace identity verification; it runs inside it. The detection layer sits between data capture (camera or document upload) and the identity decision engine. Detection outputs feed into the overall risk verdict alongside document authenticity signals, database checks, and AML screening results. Systems that pass all signals through a shared decision engine produce more defensible compliance records than standalone detection sidecars. For context on compliance alignment, see remote identity verification and deepfake challenges.
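A shared decision engine of the kind described here could be sketched as a single function that sees every signal and records why it decided as it did. The signal names, the 0.5 threshold, and the reason codes are assumptions made for the example.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Signals:
    deepfake_risk: float      # output of the detection layer, assumed 0.0 to 1.0
    document_authentic: bool  # document forensics result
    database_match: bool      # identity data matched an authoritative source
    aml_hit: bool             # AML screening returned a match


def identity_verdict(signals: Signals, max_deepfake_risk: float = 0.5) -> dict:
    """Combine all signals into one verdict plus an audit-friendly record."""
    reasons = []
    if signals.deepfake_risk > max_deepfake_risk:
        reasons.append("deepfake_risk_above_threshold")
    if not signals.document_authentic:
        reasons.append("document_failed_forensics")
    if not signals.database_match:
        reasons.append("database_mismatch")
    if signals.aml_hit:
        reasons.append("aml_screening_hit")
    return {
        "verdict": "decline" if reasons else "approve",
        "reasons": reasons,
        "signals": asdict(signals),  # inputs stored alongside the outcome
    }
```

Because the verdict, the reasons, and the raw inputs live in one record, an auditor can reconstruct the decision without correlating logs from separate systems.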

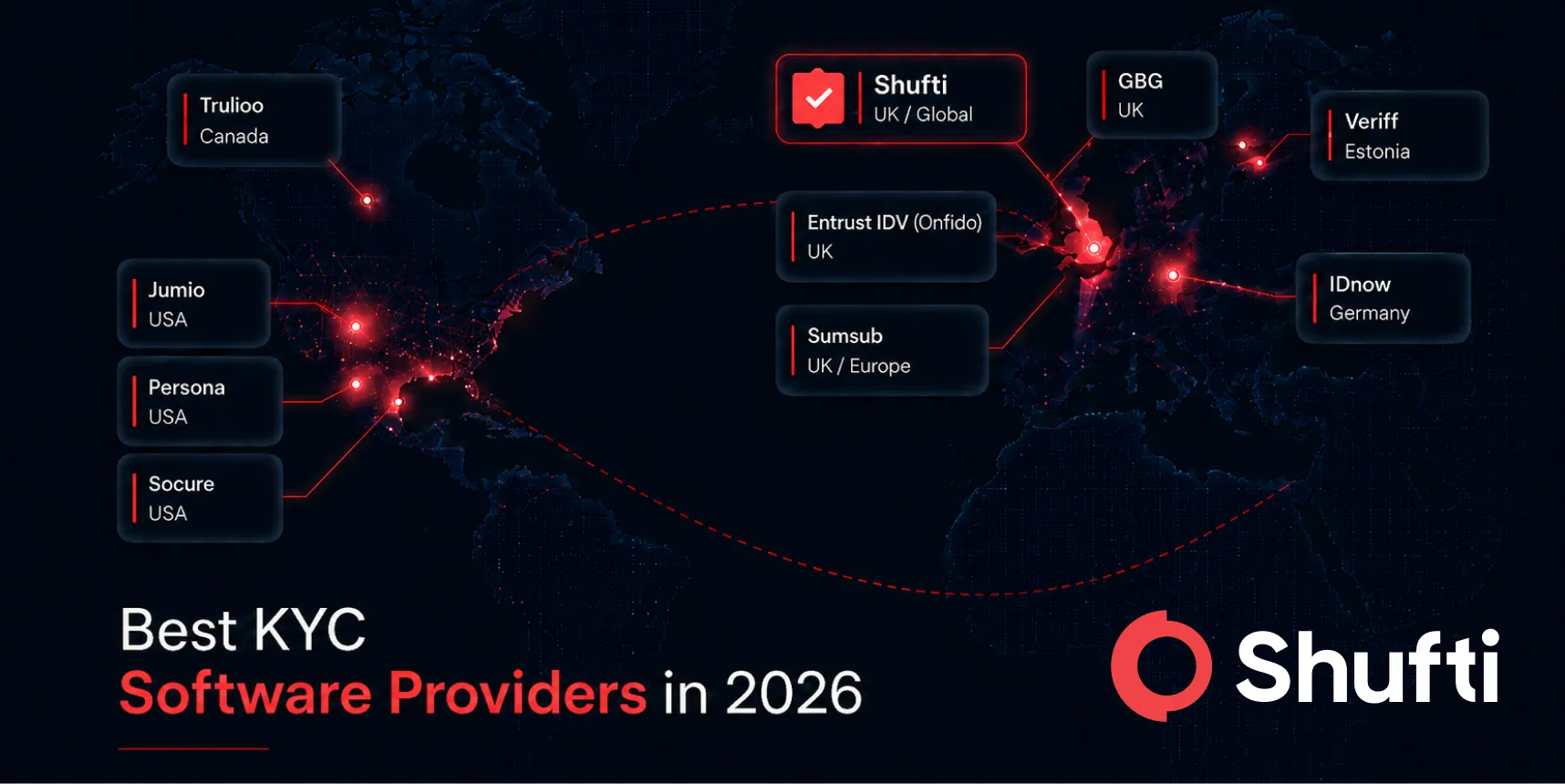

How to Evaluate the Best Deepfake Detection System

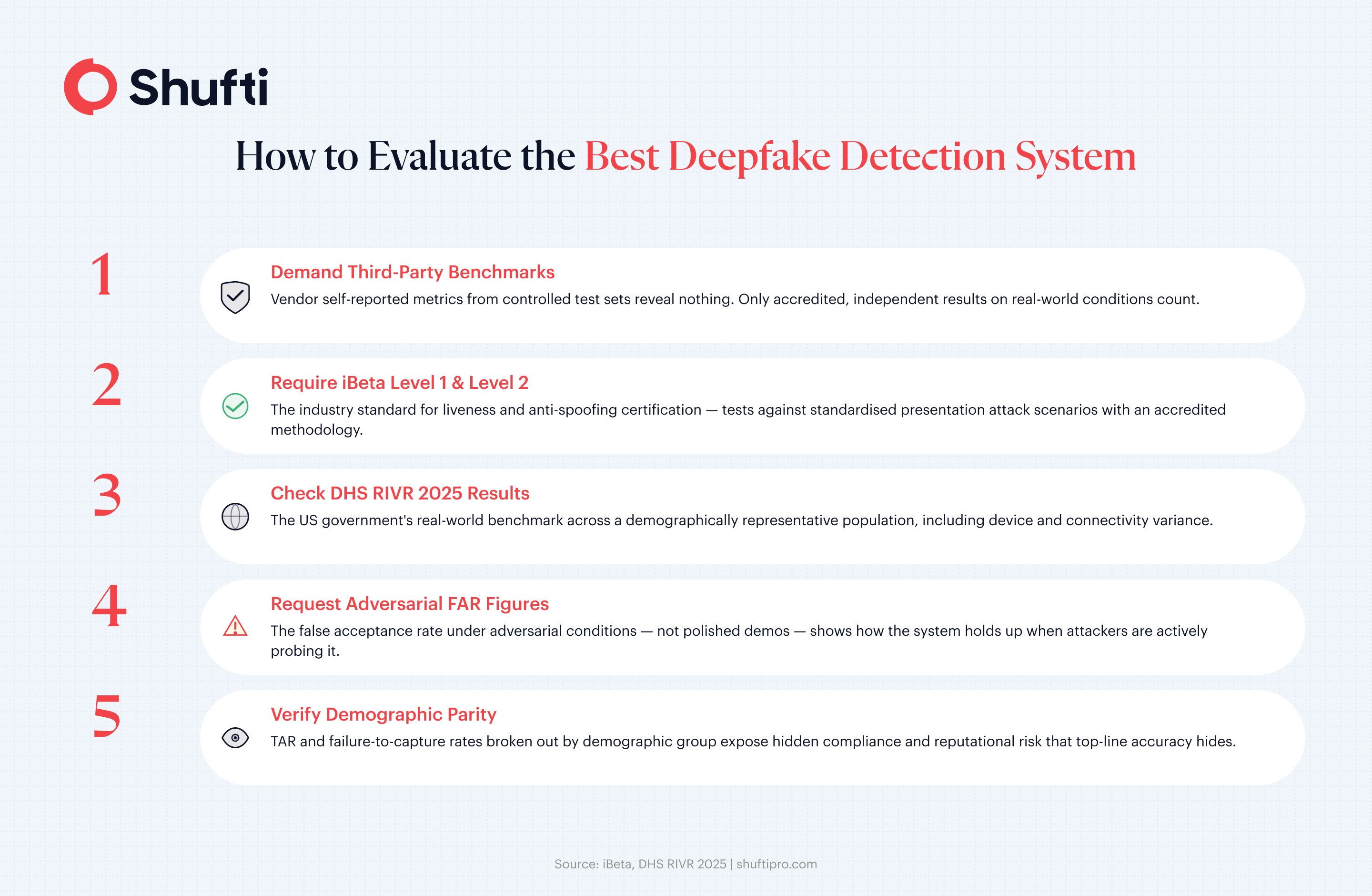

Independent benchmark results are the most defensible basis for vendor selection. Vendor self-reported metrics measured on controlled internal test sets tell you little about how a system performs under real-world conditions, with variable device quality, lighting, demographics, and adversarial inputs.

Two third-party frameworks matter most. iBeta Level 1 and Level 2 certification tests a system against standardized presentation attack scenarios using an accredited methodology. The US Department of Homeland Security’s Remote Identity Validation Rally (RIVR 2025) evaluates performance across a demographically representative population under real-world conditions, including device and connectivity variance. DHS RIVR evaluations are conducted independently at scale and measure demographic parity. That matters because a system that performs well overall but fails specific demographic groups is a compliance and reputational risk.

When reviewing any vendor’s claims, ask for the false acceptance rate under adversarial test conditions, the true acceptance rate for legitimate users, and failure-to-capture rates broken out by device type and demographic group. Those three figures tell you more than any marketing summary.
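If a vendor supplies session-level test results, the three figures can be recomputed per group with a few lines of code. The record fields below are hypothetical, and the denominators are one reasonable choice (acceptance rates over successfully captured sessions only); formal test standards define their own metrics, so treat this as a sanity check rather than a certification method.

```python
from collections import defaultdict
from typing import Dict, Iterable, Optional


def _ratio(numerator: int, denominator: int) -> Optional[float]:
    """Return None instead of dividing by zero when a group has no samples."""
    return numerator / denominator if denominator else None


def rates_by_group(sessions: Iterable[dict]) -> Dict[str, dict]:
    """Compute FAR, TAR, and failure-to-capture per group.

    Each session is a dict with hypothetical keys:
    group (str), genuine (bool), captured (bool), accepted (bool).
    """
    counts = defaultdict(lambda: defaultdict(int))
    for s in sessions:
        c = counts[s["group"]]
        c["total"] += 1
        if not s["captured"]:
            c["capture_failures"] += 1
            continue  # uncaptured sessions count only toward failure-to-capture
        if s["genuine"]:
            c["genuine"] += 1
            c["genuine_accepted"] += int(s["accepted"])
        else:
            c["attack"] += 1
            c["attack_accepted"] += int(s["accepted"])
    return {
        group: {
            "far": _ratio(c["attack_accepted"], c["attack"]),
            "tar": _ratio(c["genuine_accepted"], c["genuine"]),
            "ftc": _ratio(c["capture_failures"], c["total"]),
        }
        for group, c in counts.items()
    }
```

Calling it with `group` set to a device type or a demographic label gives the breakdown directly; a group whose TAR or failure-to-capture rate diverges sharply from the others is the parity problem to raise with the vendor.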

Keeping AI-generated fraud out of your verification flow requires a system built for the adversarial conditions real attackers deploy today. Shufti’s deepfake detection covers 56+ anti-spoofing attack vectors spanning presentation attacks, injection attacks, and document deepfakes. It combines active and passive liveness, hybrid AI and human review, and flexible deployment across cloud, on-premise, and hybrid infrastructure, and it was independently validated at DHS RIVR 2025 with 100% of government goals met. Request a demo to see how it integrates with your existing identity verification stack.

Frequently Asked Questions

What types of attacks does a deepfake detection system defend against?

The three main categories are presentation attacks (AI-generated faces shown to a live camera), injection attacks (pre-recorded video inserted directly into the data pipeline), and document deepfakes (AI-fabricated identity documents with matching biometric photos).

How does a deepfake detection system handle real-time processing?

A tiered approach runs a fast initial pass that resolves most sessions in two to three seconds, escalating only ambiguous results to deeper analysis or expert human review.

Can a deepfake detection system be deployed on-premise?

Yes. On-premise deployment keeps all biometric processing within your own infrastructure, and hybrid configurations that separate biometric data from non-sensitive metadata are also available.

What compliance standards apply to deepfake detection systems?

Relevant frameworks include FinCEN FIN-2024-Alert004, FATF guidance on AI-enabled synthetic identity fraud, the EU AI Act for high-risk biometric deployments, and iBeta Level 1 and Level 2 certification for liveness and anti-spoofing performance.

How are deepfake detection systems evaluated and benchmarked?

iBeta Level 1 and Level 2 certification and the US DHS RIVR program are the most widely recognised independent benchmarks; third-party results on diverse, real-world populations are more reliable than vendor self-reported figures.