2026 Fraud Trends and Threats: What Compliance Leaders Need to Know

- 01 What Are Fraud Trends in 2026?

- 02 The Scale of Fraud in 2026

- 03 How Are AI and Deepfake Technology Rewriting Fraud in 2026?

- 04 Which Industries Face the Highest Fraud Risk in 2026?

- 05 What Are the Most Common Payment Fraud Trends in 2026?

- 06 How Shufti Helps Compliance Teams Get Ahead of Evolving Fraud

The problem is not just volume. AI-generated campaigns, deepfake identity spoofing, and synthetic identities built from breached data are changing how fraud works at a fundamental level.

For compliance and fraud teams, the question is no longer whether an attack will arrive. It is whether your current controls were built to handle these specific attack types.

What Are Fraud Trends in 2026?

Fraud trends in 2026 describe the evolving tactics, tools, and attack patterns fraudsters are using to steal money and identities from businesses and consumers across every region.

The defining shift is the use of AI in fraud operations. Attacks are faster to run and harder to catch with conventional identity verification and monitoring tools.

The Scale of Fraud in 2026

Fraud losses in 2025 were larger and more geographically spread than most compliance forecasts anticipated. INTERPOL’s 2026 findings recorded $442 billion in direct fraud losses covering investment scams, payment fraud, business email compromise, and identity-based attacks across member countries.

Europe recorded the steepest increase at 69% year-on-year, while Asia-Pacific saw a 47% rise driven largely by organised online scam networks.

What the Numbers Look Like in the US

In the United States, the Federal Trade Commission (FTC) reported $12.5 billion in consumer fraud losses in 2024, a 25% increase from the prior year. Investment scams accounted for the largest share at $5.7 billion. These figures cover reported incidents only. Law enforcement agencies consistently estimate that actual losses run several multiples higher, particularly in business-to-business contexts where fraud typically goes unreported.
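As a quick sanity check, the FTC figures quoted above imply a prior-year total and an investment-scam share that can be worked out directly. This is back-of-the-envelope arithmetic on the numbers in this article, not additional FTC data, and the variable names are illustrative.

```python
# Figures quoted in the paragraph above, in USD billions.
total_2024 = 12.5
yoy_increase = 0.25
investment_scams = 5.7

# A 25% rise to 12.5 implies a prior-year total of 12.5 / 1.25.
implied_2023 = total_2024 / (1 + yoy_increase)

# Share of reported losses attributed to investment scams.
investment_share = investment_scams / total_2024

print(f"Implied 2023 losses: ${implied_2023:.1f}B")
print(f"Investment scam share: {investment_share:.1%}")
```

On these inputs, the prior-year total comes out at roughly $10 billion, and investment scams make up a little under half of reported losses.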

How Are AI and Deepfake Technology Rewriting Fraud in 2026?

AI-generated fraud is more accessible and more profitable than any previous form of financial crime.

INTERPOL’s 2026 report found that AI-enhanced financial fraud is 4.5 times more profitable than traditional methods. This fraud is powered by “agentic AI” systems that can plan and execute complete fraud campaigns without human oversight at each step.

What previously required a skilled criminal operation now runs on commercially available tools at a fraction of the cost.

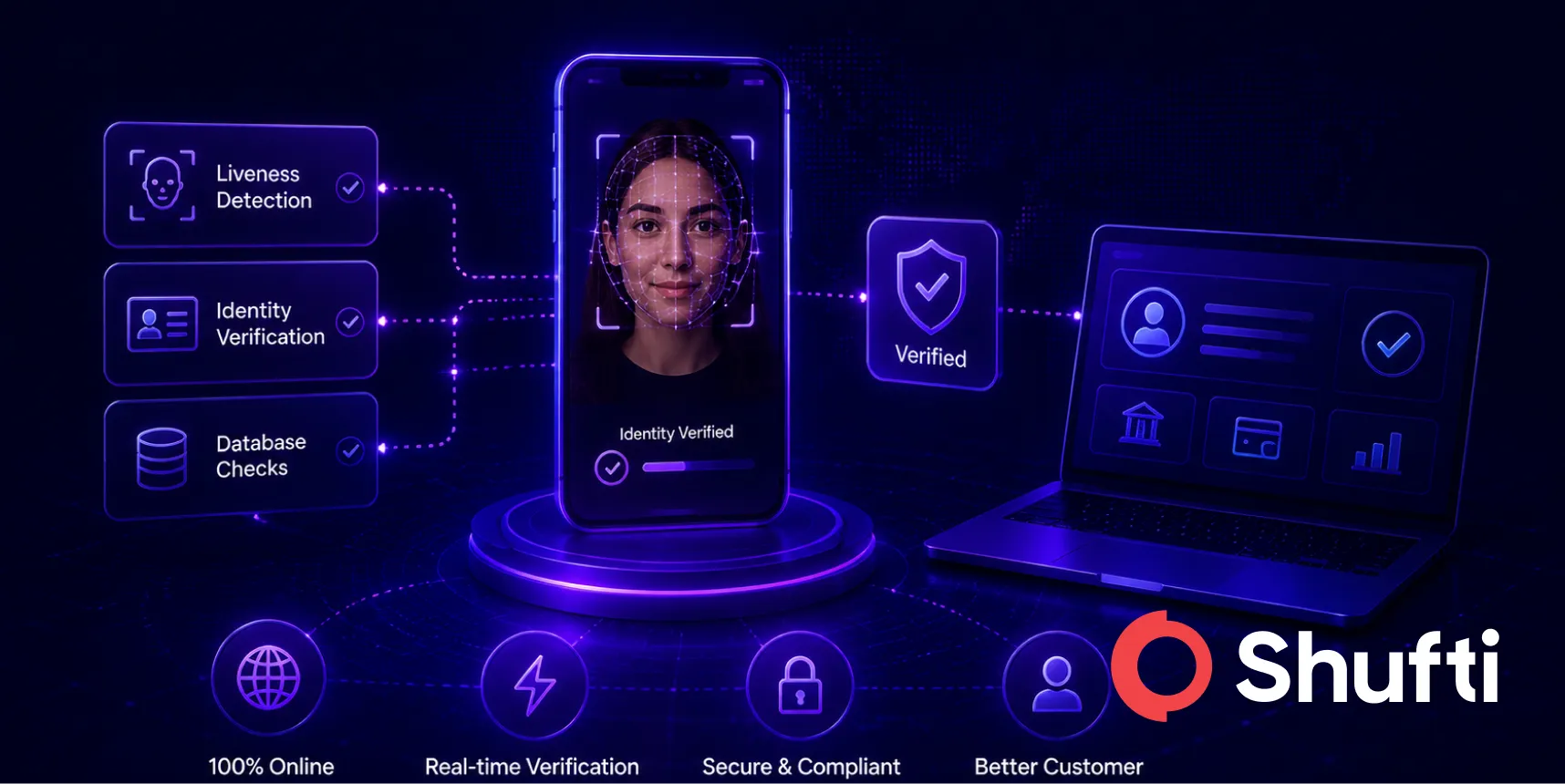

Deepfakes are the most visible expression of this shift. Fraudsters use AI-generated face swaps, voice cloning, and synthetic video to bypass identity verification during account opening, payment authorisation, and customer service interactions. Voice deepfakes rose 680% year-on-year in recent reporting periods, with some tools capable of cloning a voice from under three seconds of source audio.

Gartner forecast in February 2024 that by 2026, 30% of enterprises would no longer consider standalone identity verification solutions reliable due to AI-generated deepfakes. That prediction has become present tense. Platforms relying on a single liveness check or a single document scan are absorbing the most fraud events. Layered detection combining passive liveness, deepfake detection technology, and document forensics is what the current attack surface demands.
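The layered approach described above can be sketched as a single decision over several independent signals. This is a minimal illustration only: the check names, the 0-to-1 score scale, the thresholds, and the `layered_decision` function are all hypothetical and do not represent any vendor's API or scoring model.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str
    score: float      # hypothetical scale: 0.0 (likely fraud) to 1.0 (likely genuine)
    threshold: float  # hypothetical per-check pass mark

    @property
    def passed(self) -> bool:
        return self.score >= self.threshold


def layered_decision(results: list[CheckResult]) -> str:
    """Approve only when every layer passes; any failure escalates."""
    if not results:
        raise ValueError("at least one check result is required")
    failed = [r.name for r in results if not r.passed]
    if not failed:
        return "approve"
    # Every layer failing is treated as a stronger signal than a partial failure.
    return "reject" if len(failed) == len(results) else "manual_review"


session = [
    CheckResult("passive_liveness", 0.93, 0.80),
    CheckResult("deepfake_detection", 0.41, 0.70),  # synthetic-face signal
    CheckResult("document_forensics", 0.88, 0.75),
]
decision = layered_decision(session)
print(decision)  # manual_review
```

In this sketch, a platform that relied on the liveness result alone would have approved the session. The deepfake layer is what routes it to review.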

For a closer look at how deepfake-as-a-service has changed the economics of identity fraud, read the breakdown of deepfake-as-a-service threats to identity verification.

Which Industries Face the Highest Fraud Risk in 2026?

Risk Is Spreading Across Sectors

Financial services carries the largest fraud exposure by volume, but the risk is broader than in previous years.

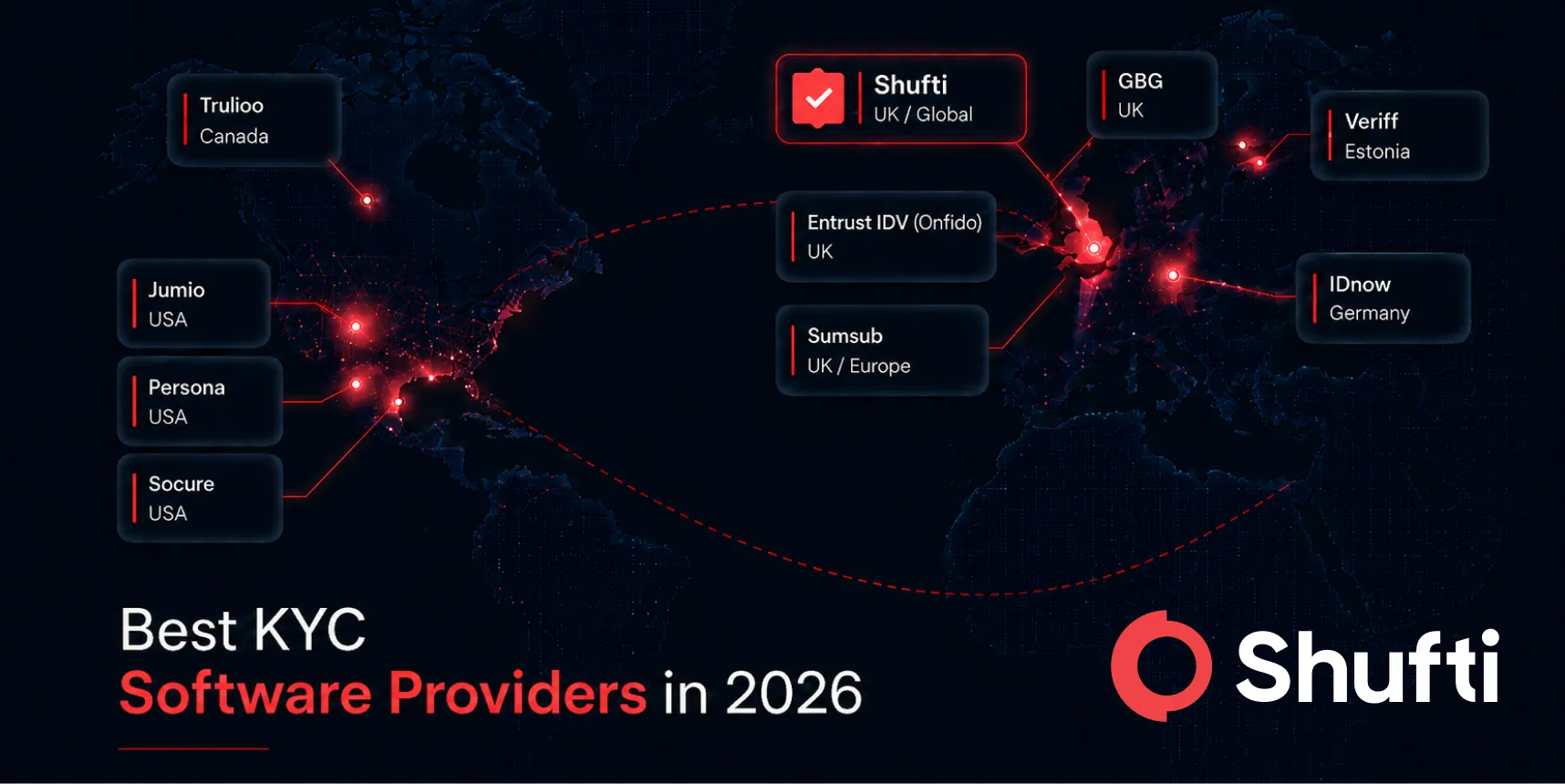

INTERPOL’s global fraud analysis identifies five sectors where fraud attempts concentrate and the economics favour attackers: financial services, fintech and payments, gaming and gambling, crypto and digital assets, and e-commerce.

What Each Sector Is Dealing With

Banking and fintech face account takeover fraud, synthetic identity applications, and payment authorisation fraud.

Crypto exchanges and digital asset platforms contend with transaction laundering, wallet takeover, and investment scam networks recruiting victims through social platforms.

Gaming operators and e-commerce platforms encounter bonus abuse, age fraud, and return fraud as fraud rings cycle through multiple accounts in coordinated campaigns.

The Data Problem Behind It All

The Europol Internet Organised Crime Threat Assessment (IOCTA) 2025 found that stolen personal data has become the primary commodity in criminal markets.

A single breach can supply raw materials for synthetic identity creation, account takeover, and social engineering attacks across multiple sectors simultaneously.

What Are the Most Common Payment Fraud Trends in 2026?

Three Dominant Patterns

Payment fraud now runs across multiple channels, and the shift to real-time payment rails has made recovery harder once a transaction clears.

The Bank for International Settlements (BIS) CPMI identified three predominant patterns in its 2026 cross-border payments fraud report:

- Authorised push payment (APP) fraud — victims are socially engineered into initiating transfers themselves

- Account takeover — fraudsters access a legitimate account and push fraudulent outbound payments

- Card-not-present fraud — stolen credentials are used to make purchases without a physical card

Why APP Fraud Is Growing the Fastest

APP fraud is particularly hard to stop because the payment is technically legitimate from the system’s perspective. The bank sees a valid instruction from a verified account holder. The fraud happens on the human side of the transaction, not inside the payment infrastructure.
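A toy model makes the point concrete. The field names below are hypothetical, and the checks are a deliberately simplified stand-in for what a payment system can observe. The one field that distinguishes the scam, whether the customer was deceived, is never available to it.

```python
from dataclasses import dataclass


@dataclass
class PaymentInstruction:
    authenticated: bool          # customer passed login and any step-up checks
    sufficient_funds: bool
    payee_account_valid: bool    # destination account exists and can receive funds
    customer_was_deceived: bool  # ground truth the payment system never observes


def system_checks_pass(p: PaymentInstruction) -> bool:
    # Only fields visible to the payment infrastructure are consulted.
    return p.authenticated and p.sufficient_funds and p.payee_account_valid


app_scam = PaymentInstruction(True, True, True, customer_was_deceived=True)
genuine = PaymentInstruction(True, True, True, customer_was_deceived=False)

print(system_checks_pass(app_scam), system_checks_pass(genuine))  # True True
```

Both instructions look identical to the system-side checks, which is why controls confined to the payment infrastructure cannot separate them.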

As of 2026, regulators in the UK and EU are advancing mandatory reimbursement rules that shift liability to payment service providers. That makes fraud prevention a direct balance-sheet priority for banks.

Business Email Compromise Is Getting Harder to Catch

Business email compromise (BEC) sits at the intersection of social engineering and payment fraud. Fraudsters impersonate executives or suppliers to redirect payment instructions.

AI-generated voice calls and deepfake video have made these attacks much harder to catch with standard internal verification procedures.

For teams building a fraud detection framework, the FATF financial crime guidance provides the regulatory baseline. For a direct look at how FATF assessed deepfake risk, read the FATF deepfakes horizon scan analysis.

How Shufti Helps Compliance Teams Get Ahead of Evolving Fraud

The fraud trends in this article all exploit the same structural vulnerability: verification checks that run in isolation. A liveness check that operates independently of document forensics, or a sanctions screen disconnected from identity verification, leaves a gap that organised fraud operations have learned to target. The architecture of the verification stack matters as much as the strength of any individual check.

Shufti’s face verification covers 56+ anti-spoofing attack vectors, including AI-generated deepfakes, 3D masks, and injection attacks, validated by the Department of Homeland Security (DHS) RIVR 2025 programme as a top performer with 100% of DHS goals met across diverse demographics. Passive and active liveness operate in the same pipeline as document forensics, so a live person and a genuine document are confirmed through one connected decision rather than two separate checks.

Shufti’s AML screening runs continuous checks against 3,500+ global watchlists with data refreshed every 15 minutes, surfacing risk signals that emerge after onboarding rather than only at the point of application. Both capabilities connect through a single API, so the fraud signal from identity verification is visible in the same workflow as the AML screening output.
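To illustrate why screening after onboarding matters, the sketch below rescreens an existing customer base each time a watchlist is refreshed. It is not Shufti's API. The `rescreen` and `normalise` functions, the customer IDs, and the names are hypothetical, and exact name matching stands in for the fuzzy matching that production screening typically needs.

```python
def normalise(name: str) -> str:
    # Lower-case and collapse whitespace so trivial variations still match.
    return " ".join(name.lower().split())


def rescreen(customers: dict[str, str], watchlist: set[str]) -> list[str]:
    """Return the IDs of customers whose normalised name is on the current list."""
    listed = {normalise(n) for n in watchlist}
    return sorted(cid for cid, name in customers.items() if normalise(name) in listed)


customers = {"c-001": "Alex Example", "c-002": "Jordan  Sample"}

# Screening at onboarding: no customer matches the list as it stood then.
at_onboarding = rescreen(customers, {"Some Other Person"})

# The list is refreshed later and now includes an existing customer.
after_refresh = rescreen(customers, {"Some Other Person", "jordan sample"})

print(at_onboarding, after_refresh)  # [] ['c-002']
```

A one-off check at the point of application would never surface the second result, whereas running the same function on every list refresh does.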

Fraud teams in 2026 are finding that the attacks they face were built for a different generation of detection tools, and that gap is where losses accumulate. Shufti’s fraud prevention platform combines DHS-validated face verification with real-time AML screening across 3,500+ watchlists, closing the architecture gap between identity verification and ongoing risk monitoring. Request a demo to see how the combined pipeline handles current attack vectors on your actual onboarding volumes.

Frequently Asked Questions

What are the biggest fraud trends in 2026?

AI-generated deepfakes, synthetic identity fraud, authorised push payment scams, and account takeover are the dominant fraud trends in 2026, driven by accessible AI tools that lower the cost and complexity of organised fraud operations.

How is AI changing fraud trends?

AI allows fraudsters to automate complete campaigns, generate synthetic identities, clone voices and faces from minimal source material, and run social engineering at a scale previously impossible without large criminal networks.

What industries are most affected by rising fraud trends?

Financial services, fintech, gaming and gambling, crypto exchanges, and e-commerce carry the highest fraud exposure in 2026, with financial services absorbing the largest volume of attacks and crypto platforms seeing the fastest growth in attempts.

How are fraudsters using deepfakes in scams?

Fraudsters use AI-generated deepfakes to bypass liveness checks during account opening, impersonate executives in business email compromise calls, and clone customer voices to authorise fraudulent transactions.

What are the most common payment fraud trends in 2026?

Authorised push payment fraud, account takeover, and card-not-present fraud are the three predominant payment fraud patterns in 2026, and business email compromise compounds them by redirecting payment instructions. APP fraud is growing fastest, and the shift to real-time payment rails makes recovery harder once a transaction clears.