Facial Liveness Detection: How It Works and Why It Matters

- 01 What is Facial Liveness Detection?

- 02 What is Anti-spoofing in Facial Liveness Detection?

- 03 How Is a Live Face Distinguished From a Spoof?

- 04 The Attack Types Liveness Needs to Defeat

- 05 How Does Facial Liveness Detection Prevent Photo Spoofing?

- 06 What Are the Latest Attack Vectors Against Facial Liveness Detection?

- 07 How to Evaluate Facial Liveness Detection in Remote Onboarding?

- 08 Practical Next Steps For Your Evaluation

- 09 Take the Next Step in Your Liveness Evaluation

Fraud teams keep asking the same question after a deepfake-driven onboarding incident. How did a face that looked real to the system turn out to belong to nobody?

The answer usually comes back to the same weak link. A biometric check confirmed a face was present, but not that the face was alive, in front of the camera, at that moment. That gap is what facial liveness detection is meant to close, and the gap has widened fast.

The FBI Internet Crime Complaint Center’s 2024 report logged a record $16.6 billion in reported losses, with AI-facilitated fraud flagged as a rising vector. Any identity stack that does not answer “is this person real and present right now” is exposed.

What is Facial Liveness Detection?

Facial liveness detection is the set of biometric checks that confirm a human face in front of the camera belongs to a live, physically present person, not a photo, a video replay, a 3D mask, or a synthetic video stream. It sits between face capture and face matching, and it is the layer most often bypassed when onboarding fraud succeeds. The reference standard here is ISO/IEC 30107-3:2023, which defines how presentation attack detection is tested and reported across the industry.

Two practical modes exist. Active liveness asks the user to blink, turn, or smile so a change in the feed can be measured. Passive liveness analyses a single selfie or short video in the background, without any gesture. Most modern deployments run both, and risk policy decides how much friction the user sees.
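How risk policy maps to friction can be sketched in a few lines. This is a minimal illustration, not a vendor API: the `risk_score` input, the `step_up_threshold` value, and the mode names are all assumptions made for the example.

```python
from enum import Enum


class LivenessMode(Enum):
    PASSIVE = "passive"                          # background analysis only, no gesture
    PASSIVE_THEN_ACTIVE = "passive_then_active"  # passive first, then a gesture challenge


def choose_liveness_mode(risk_score: float, step_up_threshold: float = 0.5) -> LivenessMode:
    """Pick how much friction to show from a hypothetical session risk score.

    Both risk_score (0 = low risk, 1 = high risk) and step_up_threshold are
    illustrative assumptions; a real deployment derives them from its own policy.
    """
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score >= step_up_threshold:
        return LivenessMode.PASSIVE_THEN_ACTIVE
    return LivenessMode.PASSIVE
```

Under these assumptions, low-risk sessions see no gesture prompt at all, and the active challenge is reserved for sessions the policy already considers suspicious.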

The reason this layer has moved from nice-to-have to baseline is the shape of remote fraud. Face matching on its own answers a narrow question: does this selfie match the document photo? It does not answer whether the selfie came from a real person in front of the lens, or from a video streamed in through a virtual camera. Liveness is the piece that answers that second question, and without it a face match is only half a verification.

What is Anti-spoofing in Facial Liveness Detection?

Anti-spoofing is the technical capability within liveness detection that identifies and rejects fraudulent face inputs, such as photos, replays, masks, or injected video streams, before they can pass as a legitimate live user.

How Is a Live Face Distinguished From a Spoof?

Active liveness, user action as signal

With active liveness, the user performs a small on-screen action. The system looks for motion consistent with a real person responding in real time. Micro-movements in the face, eyes, and jaw are hard to fake with a printed photo or a paused video. The trade-off is friction. Users on slow connections or in poor light can fail on the first try, and that rejection is expensive.
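A blink challenge is one way to picture the "responding in real time" test. The sketch below assumes an upstream landmark detector already produces one eye-openness value per frame (for example an eye aspect ratio); that detector, the thresholds, and the response window are assumptions for illustration, not tuned values.

```python
from typing import Sequence


def blink_within_window(
    eye_openness: Sequence[float],
    fps: float,
    prompt_frame: int,
    max_response_s: float = 3.0,
    closed_below: float = 0.2,
    open_above: float = 0.3,
) -> bool:
    """Return True if an open -> closed -> open transition follows the prompt.

    eye_openness holds one value per frame from a hypothetical upstream
    landmark detector. Only frames inside the response window are inspected.
    """
    last_frame = min(len(eye_openness), prompt_frame + int(max_response_s * fps))
    state = "waiting_open"
    for value in eye_openness[prompt_frame:last_frame]:
        if state == "waiting_open" and value > open_above:
            state = "open"
        elif state == "open" and value < closed_below:
            state = "closed"
        elif state == "closed" and value > open_above:
            return True  # eyes reopened, so a full blink was observed
    return False
```

A printed photo or a paused video yields a flat openness signal and never completes the transition. A pre-recorded clip that happens to contain a blink could still pass, which is one reason the prompt is usually randomised and combined with passive checks.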

Passive liveness, background signals

Passive liveness does more work behind the scenes. Texture analysis looks for paper grain, screen moiré, and print patterns that do not exist on real skin. Frequency-domain cues are the strongest defence against digital replays because compression artefacts from re-encoded video leave a fingerprint that a live camera feed does not produce. Depth reconstruction then confirms the face has real geometry rather than a flat surface pointed at the camera.
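To make "frequency-domain cues" concrete, the sketch below measures how much of a signal's spectral energy sits above a cutoff frequency, using one row of grayscale pixel values. It is a toy illustration under stated assumptions: production systems typically work on 2D spectra with learned models, the `cutoff_fraction` default is arbitrary, and whether an artefact adds or removes high-frequency energy depends on the artefact.

```python
import cmath
import math
from typing import Sequence


def high_frequency_ratio(row: Sequence[float], cutoff_fraction: float = 0.25) -> float:
    """Share of non-DC spectral energy above a cutoff, for one row of intensities.

    Uses a plain O(n^2) discrete Fourier transform to stay dependency-free.
    cutoff_fraction is relative to the sampling rate (Nyquist is 0.5).
    """
    n = len(row)
    if n < 2:
        return 0.0
    mean = sum(row) / n
    centered = [v - mean for v in row]  # remove the DC component
    energies = []
    for k in range(1, n // 2 + 1):
        coeff = sum(
            centered[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)
        )
        energies.append(abs(coeff) ** 2)
    total = sum(energies)
    if total == 0:
        return 0.0
    cutoff_bin = int(cutoff_fraction * n)
    high = sum(e for k, e in enumerate(energies, start=1) if k > cutoff_bin)
    return high / total


# Synthetic rows: a smooth gradient, and the same gradient with a fine
# periodic pattern added, standing in for a screen moire.
n = 64
smooth = [128 + 40 * math.sin(2 * math.pi * 2 * t / n) for t in range(n)]
moire = [s + 15 * math.sin(2 * math.pi * 24 * t / n) for t, s in enumerate(smooth)]
```

On these synthetic rows the smooth signal has almost no energy above the cutoff, while the row with the added periodic pattern has a clearly larger share. A real detector would compare such statistics against what a genuine camera feed produces rather than against a fixed number.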

A well-configured flow combines both. That is also what the federal-level evaluation work suggests. The NIST Face Analysis Technology Evaluation, Part 10 report on passive software-based PAD algorithms found that no single algorithm caught every attack type across the range of presentation attack instruments tested, and that performance varied sharply by attack category.
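Because no single detector covers every attack type, the outputs have to be combined somehow. A minimal sketch of one option, a max rule that rejects when any detector is confident, follows. The detector names, the score scale, and the `reject_at` threshold are hypothetical.

```python
from typing import Mapping


def fuse_attack_scores(scores: Mapping[str, float], reject_at: float = 0.7) -> tuple[bool, str]:
    """Reject when any detector's attack score reaches the threshold.

    scores maps a hypothetical detector name to an attack likelihood in [0, 1].
    Returns (is_rejected, name_of_highest_scoring_detector).
    """
    if not scores:
        raise ValueError("at least one detector score is required")
    worst = max(scores, key=scores.get)
    return scores[worst] >= reject_at, worst
```

The max rule favours attack coverage at the cost of more false rejects, since one noisy detector can block a legitimate user. Weighted or learned fusion is a common alternative when false-reject rates matter more.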

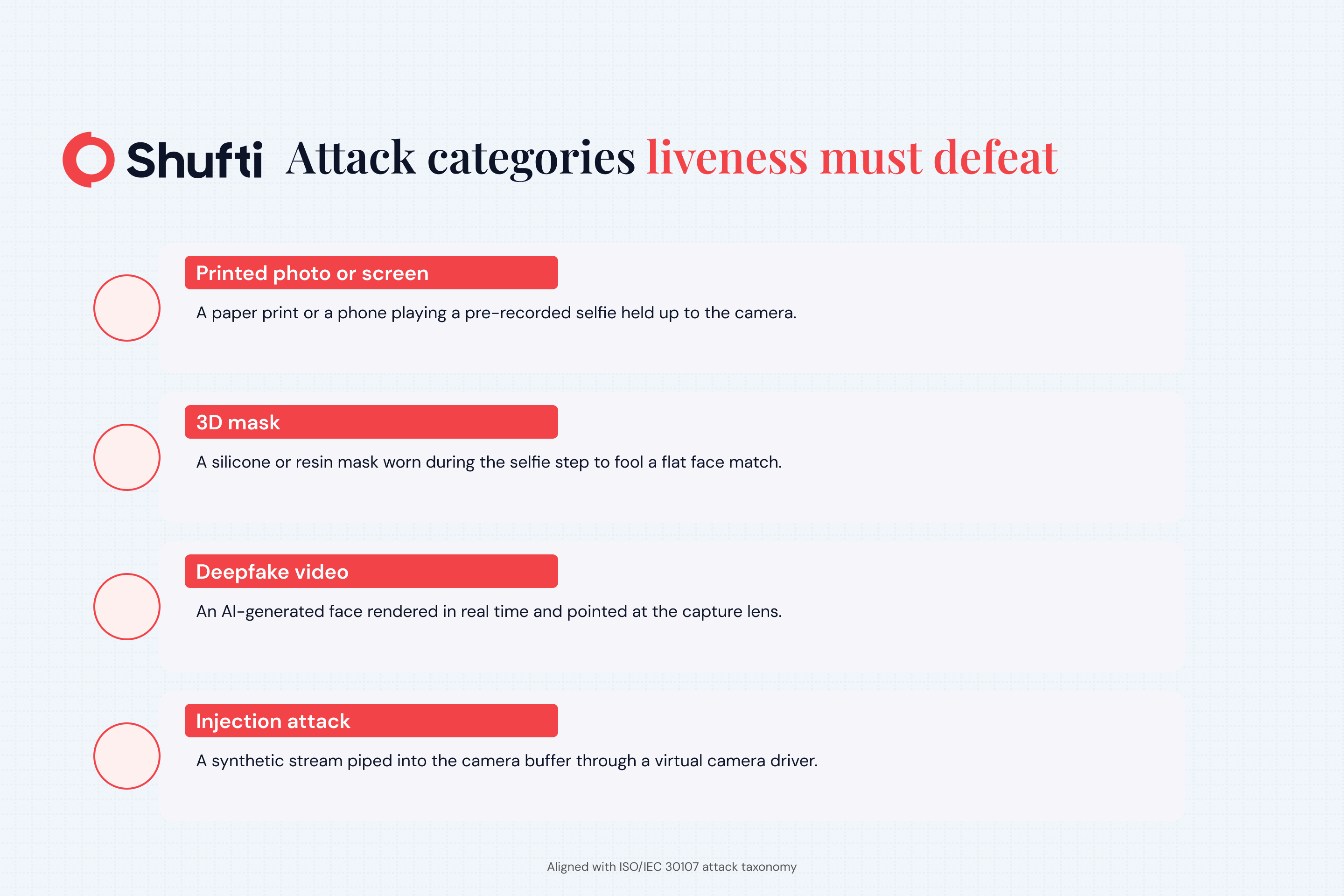

The Attack Types Liveness Needs to Defeat

Practitioners evaluating a biometric stack often start with the wrong question. They ask “how accurate is it”, when the useful question is “which attacks does it actually catch”. The taxonomy breaks into two buckets.

Presentation Attacks

Presentation attacks are physical. Typical examples include a printed photo held to the lens, a laptop screen showing a pre-recorded video, and a silicone mask worn during onboarding. ISO/IEC 30107 treats these as the baseline test surface. A basic 2D RGB liveness model can usually catch a paper photo and a low-quality replay, but it struggles with high-resolution monitors, curved prints, and well-made 3D masks.

How Does Facial Liveness Detection Prevent Photo Spoofing?

Texture analysis detects paper grain and print patterns absent from real skin, while depth reconstruction confirms a three-dimensional face is present rather than a flat printed surface.

Injection and Synthetic Attacks

Injection attacks skip the camera entirely. Fraudsters feed a pre-generated video stream, often a deepfake, straight into the device’s camera buffer through a virtual camera driver. The system sees what looks like a normal feed, but no real face was ever present. Europol’s Innovation Lab has flagged deepfake creation as an emerging staple tool of organised crime. Synthetic identity assembly, where stolen data, a generated face, and a forged document are combined, is the fastest-growing variant.

The defensive problem here is that a good injection attack never touches a physical surface. Texture cues vanish. Depth geometry can be simulated. Frequency-domain artefacts become the strongest remaining signal, alongside device and session telemetry that reveals whether the camera stream matches what the hardware should be producing. Stacks built on 2D RGB alone were trained for a problem that has already moved on.
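Session telemetry checks can be pictured as simple consistency tests on what the camera reports. The sketch below is an assumption-heavy illustration: the label hints are not an authoritative list, device labels are easy for an attacker to change, and the idea that a physical camera shows some frame-timing jitter while an injected stream may be perfectly regular is a heuristic chosen for the example, not an established rule.

```python
from dataclasses import dataclass
from statistics import pstdev
from typing import Sequence

# Illustrative substrings only; not an authoritative or complete list.
SUSPECT_LABEL_HINTS = ("virtual cam", "emulator")


@dataclass
class CameraSession:
    device_label: str                      # camera name as reported by the platform
    frame_timestamps_ms: Sequence[float]   # capture time of each frame


def injection_flags(session: CameraSession, min_jitter_ms: float = 0.05) -> list[str]:
    """Return the names of heuristic checks that this session trips."""
    flags = []
    label = session.device_label.lower()
    if any(hint in label for hint in SUSPECT_LABEL_HINTS):
        flags.append("suspect_device_label")
    ts = session.frame_timestamps_ms
    intervals = [b - a for a, b in zip(ts, ts[1:])]
    # Assumed heuristic: near-zero variation between frame intervals is unusual.
    if len(intervals) >= 2 and pstdev(intervals) < min_jitter_ms:
        flags.append("unnaturally_regular_frame_timing")
    return flags
```

Flags like these are weak on their own and are best treated as inputs to a risk decision alongside the frequency-domain signal, not as a verdict.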

What Are the Latest Attack Vectors Against Facial Liveness Detection?

The fastest-moving threats are deepfake injection attacks via virtual camera drivers and synthetic identity fraud that combines AI-generated faces with forged documents. Both bypass physical presentation entirely and require frequency-domain and session-level defences.

A credible liveness stack is tested against all of these, not just the cheap ones.

How to Evaluate Facial Liveness Detection in Remote Onboarding?

Regulated remote identity verification is where liveness has to hold up, and that is where most shortcuts become visible. The patterns below are what separate reliable deployments from checkbox ones.

Independent Benchmarks Matter

Internal test scores are not enough. The two external programmes worth tracking are the NIST FATE PAD evaluations, which score algorithms under a controlled protocol, and the US Department of Homeland Security’s Biometric Technology Rally, which stresses entire identity-verification stacks against realistic attack mixes. Published rally results have shown that a meaningful share of tested systems fails at least one goal. If a provider will not cite independent evaluation data, that silence is the finding.

Where Teams Get It Wrong

Three mistakes keep showing up in post-incident reviews. The first is treating liveness as a single toggle rather than a policy, with no fallback for ambiguous cases. The second is relying on a single signal type, usually 2D RGB, which is blind to frequency-domain cues.

The third mistake is running liveness only at account opening, so high-value actions like large withdrawals or beneficiary changes never get a fresh check. Mature programmes run liveness at onboarding, then run a fresh check at risky moments and match it against the enrolled biometric template.
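A step-up rule of this kind can be sketched as a freshness check on the last liveness result. The action names and the five-minute window below are hypothetical values chosen for illustration.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical action names and freshness window, chosen for illustration.
HIGH_RISK_ACTIONS = {"large_withdrawal", "beneficiary_change"}
MAX_LIVENESS_AGE = timedelta(minutes=5)


def needs_fresh_liveness(
    action: str, last_liveness_at: Optional[datetime], now: datetime
) -> bool:
    """True when a high-risk action lacks a sufficiently recent liveness check."""
    if action not in HIGH_RISK_ACTIONS:
        return False
    if last_liveness_at is None:
        return True
    return now - last_liveness_at > MAX_LIVENESS_AGE


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
onboarded = now - timedelta(days=30)
```

With these values, a beneficiary change requested 30 days after onboarding triggers a new check, while viewing a balance never does.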

One more pattern matters here. Accessibility and demographic performance shape whether a liveness programme is usable at scale. A system that fails more often on darker skin tones, older users, or users wearing glasses will either push those users to a manual channel or lose them outright. Independent evaluations, not internal lab numbers, are the way to check this. Demographic fairness and false-reject rates belong on the same scoreboard as spoof-detection performance.

Practical Next Steps For Your Evaluation

If the last face verification review was written more than 18 months ago, the threat model has changed. A short, practical exercise helps validate the current stack. Start with a red-team sample that feeds the stack a printed photo, a screen replay, a simple deepfake clip, and, if testing capacity exists, an injection attempt via a virtual camera.

Compare pass rates against ISO/IEC 30107-3 attack categories rather than vendor marketing claims. False-reject rates on legitimate users matter too, especially with glasses, beards, and in low light, because rejections kill revenue and push users to abandon legitimate sign-ups.
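The two error rates that ISO/IEC 30107-3 reports can be computed directly from red-team results. APCER is the share of attack presentations wrongly accepted as bona fide, and BPCER is the share of bona fide presentations wrongly rejected as attacks. The sketch below assumes a simple `Trial` record and group labels invented for the example; it is not a certified test harness.

```python
from collections import defaultdict
from typing import Iterable, NamedTuple


class Trial(NamedTuple):
    group: str       # attack category, or user condition for bona fide trials
    is_attack: bool  # ground truth
    accepted: bool   # True if the system classified the presentation as bona fide


def error_rates(trials: Iterable[Trial]) -> tuple[dict[str, float], dict[str, float]]:
    """Return (APCER per attack group, BPCER per bona fide group)."""
    counts = defaultdict(lambda: [0, 0])  # (group, is_attack) -> [errors, total]
    for t in trials:
        # An attack that is accepted, or a genuine user who is rejected, is an error.
        error = t.accepted if t.is_attack else not t.accepted
        bucket = counts[(t.group, t.is_attack)]
        bucket[0] += int(error)
        bucket[1] += 1
    apcer = {g: e / n for (g, attack), (e, n) in counts.items() if attack}
    bpcer = {g: e / n for (g, attack), (e, n) in counts.items() if not attack}
    return apcer, bpcer


trials = [
    Trial("printed_photo", True, False),
    Trial("printed_photo", True, False),
    Trial("screen_replay", True, True),
    Trial("screen_replay", True, False),
    Trial("glasses", False, True),
    Trial("glasses", False, False),
    Trial("glasses", False, True),
    Trial("glasses", False, True),
]
apcer, bpcer = error_rates(trials)
```

Reading the worst attack category rather than an average keeps a weak spot from being hidden, and splitting BPCER by user condition (glasses, beards, low light) exposes the false rejects that cost legitimate sign-ups.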

Match the evidence to the provider’s documentation next. A credible provider publishes PAD test levels aligned with ISO/IEC 30107-3, submits algorithms to NIST FATE or a DHS rally, and offers on-premises deployment for regulated environments that cannot send biometric data to a third party. Clear, verifiable answers here matter far more than any single accuracy number.

Take the Next Step in Your Liveness Evaluation

Remote onboarding cannot stand up to the current generation of presentation and injection attacks when a single, older liveness signal is doing all the work. Shufti’s face verification combines active and passive liveness, frequency-domain artefact detection, and injection attack screening across 56+ anti-spoofing vectors, independently validated at iBeta Level 1 and Level 2 and DHS RIVR 2025. Request a demo to run your current stack against a real attack sample and see where the gaps are.

Frequently Asked Questions

Does facial liveness detection affect user drop-off rates?

Yes. Active liveness adds friction that raises abandonment, particularly on mobile and slow connections. Passive liveness reduces this significantly. Drop-off impact should be tracked separately from spoof-detection performance when choosing between the two modes.

Is biometric data from liveness checks subject to privacy regulations?

In most jurisdictions, yes. Regulations such as GDPR, CCPA, and Illinois BIPA classify facial biometric data as sensitive personal data with strict rules on storage, consent, and retention. On-premises deployment options exist specifically for regulated environments that cannot transfer this data to third-party servers.

Does facial liveness detection work with medical face masks or head coverings?

Partial occlusion is a known edge case. Modern systems handle light coverings reasonably well using periocular signals around the eyes, but full-face coverings degrade accuracy. Providers should publish false-reject rates specifically for occluded-face scenarios rather than only full-face test conditions.

How is facial liveness detection tested and certified?

The primary independent frameworks are ISO/IEC 30107-3 presentation attack detection testing, iBeta Level 1 and Level 2 certification, and the US Department of Homeland Security's Biometric Technology Rally. Vendor self-reported accuracy figures are not a substitute for results from these programmes.